Topics

-

AI News from ET - TSMC boss upbeat on outlook as AI boom shows no sign of easing

Chief Executive C.C. Wei, speaking at TSMC's annual shareholders' meeting in the northern Taiwanese city of Hsinchu, added that its customers continue to express a positive outlook for the AI industry. View the full article

-

AI News from ET - Indian VCs expand US footprint to tap AI boom early

Indian tech investors are increasingly establishing a presence in the US, particularly in the Bay Area, to tap into the booming AI sector. This strategic move aims to foster closer relationships with innovative startups and secure a competitive edge in a market dominated by AI advancements. View the full article


Leaderboard

-

Mayank Gupta

Members, 21 Points, 679 Posts

-

Vishwadeep Khatri

Administrators, 19 Points, 6,093 Posts

-

Sargun Diwan

Members, 17 Points, 10 Posts

-

Jess Balmaceda

Members, 16 Points, 12 Posts

Popular Content

Showing content with the highest reputation since 06/04/2025 in all areas

-

Bias in, Bias out: How Do We Break the Cycle?

At our e-commerce product company, we have an AI-powered search and recommendation engine. It can be configured for each customer project to leverage multiple data sources (ERP, e-commerce, PIM, purchase history) to personalize search and product recommendations. Personalization features include adjusting results based on purchase history, brand preference, and customer profiles.

Our learning has been the following. The recommendation engine can personalize the shop assortment for different customer segments. While designing customer flows for this feature, we must ensure that the engine does not unintentionally limit catalog visibility or surface exclusive categories disproportionately. If historical purchase data, browsing patterns, or segment profiles reflect societal biases (e.g., preferences along gender, age, ethnicity, or socioeconomic lines), the algorithms can and will replicate and propagate these biases, such as recommending certain products less to some demographic groups or showing limited assortments. Segment-based catalog restriction could reinforce silos and limit choices for certain customer groups, mirroring or reinforcing pre-existing marketplace or data biases. Customizing algorithmic weighting based on customer profiling without scrutiny could favor or disadvantage groups.

We had a real example with a sports attire retailer using our product, where “Inclusive Sizing” (sizes beyond standard American XS–XL, such as plus sizes or petite/tall fit) appeared in only about 10% of products in a given search result. The dynamic facets logic tended to omit this size attribute from the filters entirely. As a result:

- Customers seeking inclusive sizes were unable to filter effectively.
- The resulting bias favoured mainstream size ranges, thus marginalizing niche segments.
- The system then further skewed visibility toward products that align with majority sizing, and had the potential to worsen representation over time.

Some real-world complaints from users were:

- "I can never find anything smart with a good price in my size unless they are your top-of-the-line products"
- "I see models wearing new designs in the ads but I can't find enough trendy but age-appropriate colours on the website"

Additionally, one real risk that we evaluated was that our model/engine might consistently push popular products from high-traffic regions while under-representing niche or emerging markets. This not only skews visibility but may also limit growth opportunities for less dominant segments.

Some steps that we have attempted to apply:

Design Phase
- Curate diverse and representative data inputs.
- Allow manual overrides for known critical attributes: attributes deemed socially or commercially significant (e.g., inclusive sizing, accessibility features) were treated as “defined facets,” ensuring consistent visibility regardless of prevalence.
- Add ethical guardrails to the personalization logic: forbid certain features (like region or size) from driving recommendation weighting unless justified.

Testing Phase
- Use synthetic test profiles across demographics.
- Test manually to find out whether the engine is developing such biases.

Monitor and Audit Facet Presentation
- Track which facets are consistently hidden across queries and evaluate whether they represent systematically underrepresented groups or product lines.
- Before release, emphasize a compliance review covering Legal, Privacy (GDPR), Security, and Accessibility.

These proactive steps are now taken early and help ensure our AI serves all buyers fairly, avoiding the “bias in, bias out” trap in new implementation projects.

6 points
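
To make the “defined facets” idea above concrete, here is a minimal Python sketch. It is not the product's actual logic: the function names, the `facets` field, and the 20% prevalence threshold are all assumptions made for illustration. It shows a prevalence-based facet selector that would drop a rare attribute, an override list that keeps it visible, and a helper for auditing which facets were hidden.

```python
from collections import Counter


def select_facets(products, defined_facets=frozenset(), min_share=0.2):
    """Pick which filter facets to show for one result set.

    products: list of dicts, each with a "facets" set (hypothetical shape).
    defined_facets: attributes that stay visible whenever at least one
        product in the results carries them, regardless of prevalence.
    min_share: assumed prevalence threshold for all other facets.
    """
    if not products:
        return []
    counts = Counter(f for p in products for f in set(p["facets"]))
    total = len(products)
    shown = [
        facet
        for facet, n in counts.items()
        if n / total >= min_share or facet in defined_facets
    ]
    return sorted(shown)


def hidden_facets(products, shown):
    """List facets present in the results but not offered as filters (for audits)."""
    present = {f for p in products for f in p["facets"]}
    return sorted(present - set(shown))


# Ten results, only one of which carries inclusive sizing (about 10%).
results = [{"facets": {"brand", "color"}} for _ in range(9)]
results.append({"facets": {"brand", "inclusive_sizing"}})

without_override = select_facets(results)
with_override = select_facets(results, defined_facets={"inclusive_sizing"})
audit = hidden_facets(results, without_override)
```

Logging the output of `hidden_facets` per query is one possible way to feed the "track which facets are consistently hidden" step.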

-

What Happens When an AI Solution Solves the Wrong Problem?

In 2022, Klarna launched a full-speed AI deployment, automating most of its processes with AI solutions, and realized cost savings equivalent to 700 FTE. One of the processes it automated was Customer Service Support. After a while, customer complaints and dissatisfaction ballooned. Customers claimed that AI responses were too generic and unhelpful when dealing with real-life problems. While AI solutions like chatbots can handle simple and repetitive queries, emotions and complex issues were not addressed. Klarna realized that while AI solutions promise speed and cost savings, they can compromise service quality and customer satisfaction. Klarna decided to rehire employees to address poor service quality and customer complaints. This is a testament that an AI solution isn’t about replacing humans but about enhancing the human workforce with smarter tools and a better support system.

As an MBB, the following are my recommendations:

1. Use VOC to identify critical customer requirements (CCR); this is where complex issues, and customers who need to talk to a human to resolve their concerns, will surface.
2. An AI solution aims to enhance customer experience by leveraging personalized interaction for higher engagement. This was not apparent in the case of Klarna. It is recommended to take advantage of the Deep Learning capabilities of AI solutions. Such models can identify complex patterns, making them suitable for image recognition, voice recognition, and natural language processing.
3. Lastly, while drawing the to-be process map, the HITL (human-in-the-loop) principle is recommended. In cases of complex customer concerns, AI can escalate the concern to its human counterpart to further assess it and provide the necessary resolution.

4 points

-

What Should AI Governance Look Like in a Business Excellence-Driven Organization?

According to what VK noted under his forum questions, “Some people seem to be using AI platforms to find forum answers. This is a risky approach as AI responses are error-prone..”

AI is created by humans, who are prone to error. We must always remember this and be diligent to make sure AI makes the best decisions. “Making sure” is, and I believe will forever be, a human responsibility. I can’t ever imagine anyone shirking their responsibility, pointing at the AI solution, and saying “It’s the AI’s fault that we lost revenue”. Yes, it might have been that we trusted the AI agent to make the decision, but ONLY after we allowed it to make that decision. So the real accountability still falls back on a human.

Knowing that AI is prone to errors, we create guardrails, just as we have done to mitigate our own errors, to increase proper decision making and achieve better outcomes: ergo, Business Excellence.

Think of AI as another person. But now you are responsible for the decisions and actions of that person. It will need oversight, accountability, and transparency to make sure it is making the right decisions on our behalf.

Here are some of the elements that I think could be included in a governance framework to ensure responsible, high-impact use of AI in a process-driven organization.

Create a governance team or committee to oversee all AI solutions. This team would comprise people from IT, the business, legal, and risk management, and would define each role and responsibility throughout AI development, deployment, and maintenance.

For transparency and accountability, conduct regular impact assessments to identify potential risks, biases, and consequences of AI-driven decisions. Also, implement techniques that can provide insight into how the AI is making its decisions, such as feature attribution or model interpretability methods.
Lastly, maintain audit trails that let us see the data inputs, processing, and outputs the AI used to make its decision.

For agility and control, use agile development methodologies to allow for rapid iterations and deployment. Use change management to capture all the changes made throughout development so they can easily be reviewed, tested, and validated. Lastly, establish access controls to prevent unauthorized changes to the AI system or data.

4 points

-

Are Your Metrics Ready for an AI-Enabled Organization?

Proposed Business Excellence Metrics for the AI Era

The use of AI in core business processes is reshaping how organizations create and deliver value. Consequently, the traditional KPIs we have used to measure performance in areas like quality, cost, and efficiency are becoming insufficient and redundant. Using these old metrics in an AI-driven environment can be misleading, causing organizations to optimize for the wrong behaviors and fail to reap ROI on their technology investments. Let us begin by assessing the traditional metrics and their shortcomings in an AI-driven environment.

1. Assessment of Traditional Metrics

Metric 1: First Call Resolution (FCR)

FCR has long been a primary KPI for monitoring contact center efficiency and customer satisfaction; a high FCR indicates a low-effort experience for the customer and low cost for the business.

In an AI-Driven Environment: AI-powered chatbots, IVRs, and self-service portals now handle the simple, high-volume, transactional queries, so the “easy” calls go to machines instead of humans. These were precisely the calls that used to be FCR wins for human agents. By filtering out simple issues, AI ensures that the only calls reaching human agents are the complex, emotionally charged, or multi-faceted problems that the AI could not solve, and these problems are harder to solve in one phone conversation. As a result, a high FCR rate might actually be a concern. It could indicate that the AI is not being used effectively to screen issues, or that human agents are bringing complex problems to a premature close just to hit an outdated target. A lower FCR, by contrast, could signify that agents are appropriately handling highly complex issues that require follow-up, research, and collaboration.

Metric 2: Average Handle Time (AHT)

AHT measures the average duration of a customer interaction. It has been a pivotal metric for gauging operational efficiency and is used for staffing models and cost control. The goal has always been to reduce AHT.

In an AI-Driven Environment: The calls that reach human agents, as mentioned above, are likely to be the important ones. We shouldn't be obsessed with how soon the agent can get the customer off the phone, but rather with the quality and value the agent delivers. A complex issue, a high-value customer retention case, or an upsell opportunity might require a longer AHT. Pressuring agents to cut AHT on complex calls can be detrimental, leading to poor outcomes, customer churn, and repeat calls (which negatively impact other metrics). AHT also entirely disregards the time customers may have already spent interacting with an AI chatbot, which makes it only a partial, and potentially misleading, view of the overall customer journey effort.

2. Proposed New Metrics

To track performance in an AI-driven setting, we need new metrics that capture proactive problem-solving and the utility of human-AI interaction.

Proposed New Metric 1: Proactive Resolution Rate (PRR)

PRR is the share of potential customer issues that the AI system identifies and resolves proactively, before the customer initiates contact.

PRR Logic
- The AI tracks customer journey data, usage patterns, and system logs for anomalies that indicate a problem in the process (e.g., a missed payment, a delayed delivery, odd user behavior in a software application).
- The AI then initiates an automated resolution using SOPs, FAQs, and KB updates to assist the customer (e.g., retries the missed payment, informs the logistics partner, proactively sends a "how-to" guide, or sends a system alert to the user).
- PRR Calculation: (AI-initiated Proactive Resolutions / Total potential issues detected) x 100

Most importantly, this metric shifts the mindset away from reactive service and illustrates the value of preventative excellence. It measures the organization's ability to avoid problems, which is a far stronger indicator of operational excellence and customer-centricity than how effectively it cleans up messes.

Proposed New Metric 2: Human-Assisted Value-Add (HAVA)

The HAVA Score evaluates the efficacy and efficiency of human agents handling complex situations escalated by AI. It replaces simplified metrics like AHT and FCR for these high-value encounters.

HAVA Logic: The HAVA Score is calculated after the interaction as a weighted combination of the following:
- Problem Resolution Success (40%): Was the customer's issue ultimately resolved? (Binary: Yes/No, or a scaled rating.)
- Customer Sentiment Analysis (30%): AI parses the text or audio of the communication to measure customer sentiment (e.g., whether the customer's frustration decreased or positive language increased).
- Customer Lifetime Value (CLV) Impact (20%): Did the interaction lead to customer retention, a new purchase, or an upgrade? This can be determined by mapping the service interaction to CRM data.
- Knowledge Base Contribution (10%): Did the agent record the solution to this unique problem so it can be used to train the AI in the future (thus helping avoid similar escalations)?

HAVA provides a path away from basic efficiency and instead reflects the true value of the human agent in the world of AI. It rewards agents for being thorough and empathetic problem-solvers. It also promotes a learning cycle in which the agent is incentivized to make the AI smarter through KB updates, contributing to the improvement of the system over time.

3. Linkage to Business Excellence

These proposed metrics are directly aligned with the core principles of Business Excellence. For each principle, here is how the proposed metrics align:

Customer Centricity: PRR measures an organization’s ability to solve problems before the customer even knows about them. It is the most efficient form of customer-centricity and a true commitment to an effortless experience. The HAVA Score ensures that when customers do need to talk to a human, the focus is entirely on solving their complex needs and maintaining the relationship, which shapes their perception of value and care.

Operational Excellence & Quality Improvement: PRR actively measures the quality of operational processes. A high PRR means that the underlying systems and processes are efficient, intelligent, and self-healing, which is an essential component of modern operational excellence. The HAVA Score supports an environment of continuous improvement. Agents are rewarded for contributing to a knowledge base, ensuring human knowledge is captured and then used to make the overall human-AI capability smarter and able to do more at scale over time.

Employee Engagement & Empowerment: HAVA also elevates the human agent's role from "call handling" to "resolution expert or relationship builder." It enables and rewards agents for spending time solving complex issues while creating value, leading to higher job satisfaction and lower turnover. It recognizes and rewards the empathy, creativity, and complex problem solving that are inherent to being human.

Value-Driven Leadership: With these metrics available, leaders get a clearer and more informative view of their business performance. Instead of managing counterproductive metrics, they can focus on the real priorities: stopping customer issues before they occur, getting the most value from each human engagement, and designing a learning system that continuously improves with every transaction.

4 points
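
The two formulas in this post can be sketched in a few lines of Python. The PRR formula and the 40/30/20/10 weights come from the post; everything else here is an assumption for illustration, including the function names, the component keys, and the choice to scale each HAVA component to the range 0 to 1 before weighting.

```python
# Weights as stated in the post; the dictionary keys are hypothetical names.
HAVA_WEIGHTS = {
    "resolution": 0.40,       # Problem Resolution Success
    "sentiment": 0.30,        # Customer Sentiment Analysis
    "clv_impact": 0.20,       # Customer Lifetime Value Impact
    "kb_contribution": 0.10,  # Knowledge Base Contribution
}


def proactive_resolution_rate(proactive_resolutions, issues_detected):
    """PRR = (AI-initiated proactive resolutions / potential issues detected) x 100."""
    if issues_detected <= 0:
        return 0.0  # assumed convention when nothing was detected
    return proactive_resolutions / issues_detected * 100


def hava_score(components):
    """Weighted HAVA score on a 0-100 scale.

    components: dict with one value in [0, 1] per key in HAVA_WEIGHTS
    (an assumed normalization; the post only gives the weights).
    """
    total = 0.0
    for name, weight in HAVA_WEIGHTS.items():
        value = components[name]
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
        total += weight * value
    return total * 100


prr = proactive_resolution_rate(proactive_resolutions=45, issues_detected=60)
score = hava_score(
    {"resolution": 1.0, "sentiment": 0.5, "clv_impact": 1.0, "kb_contribution": 0.0}
)
```

In this example, 45 proactive resolutions out of 60 detected issues give a PRR of 75%, and an interaction that was resolved, half-improved sentiment, retained the customer, but added nothing to the knowledge base scores about 75 out of 100.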

-

Bias in, Bias out: How Do We Break the Cycle?

Is an AI solution biased? Before asking this question, let us dwell on human nature. Is human response or process building biased? It has to be: bias forms the basis of selection criteria, the baseline on which the entire process is set or supposed to operate. Similarly, when we create an AI agent, there will be bias in AI-enabled customer service processes, and, especially in banking, it can have serious consequences, from unfair treatment of customers to regulatory violations. Let’s break this down using your example of a third-party contact center handling banking queries, such as Annual Maintenance Charges (AMC) or unauthorized UPI transactions, and explore how bias can creep in and how to mitigate it.

What Bias Can Appear in Banking Customer Service, and Where?

1. Case Prioritization
Bias risk: AI may prioritize cases based on customer profile (e.g., high-value customers), potentially delaying resolution for others.
Example: AMC-related queries from senior citizens may be deprioritized if the model learns they are less likely to escalate.

2. Action Recommendations
Bias risk: AI may suggest refunds or escalations based on historical patterns that reflect biased decisions.
Example: UPI fraud cases from Tier-2 cities may be less likely to be recommended for escalation due to historical underreporting.

3. Response Generation
Bias risk: Regional models may respond differently depending on tone of voice and choice of words. An AI agent may respond helpfully to the tone, politeness, and wording of customers in the northern part of India, while the same agent might judge similar language from customers in the southern part of India as rude or condescending and deny service. Language models may also respond differently based on customer name, language, or tone.
Example: A polite query may get a more helpful response than an agitated one, even if both are valid.

4. Billing Model Influence
Bias risk: If billing is based on connect minutes, agents may be incentivized to prolong calls. If it is based on call count, they may rush.
Example: AMC queries may be wrapped up quickly without full resolution under a per-call billing model.

So, what do we do to minimize bias in Design, Testing, and Monitoring?

A. Design Phase
- Diversify training data: Whether customers are low-income or high rollers, include varied customer profiles, geographical regions, languages, net worth levels, and complaint types. The amount involved should not matter when a customer complains of an unauthorized transaction by a merchant. Bias can set in based on transaction size: the AI might prioritize only high-amount unauthorized transaction cases. We must ensure representation of vulnerable groups (e.g., low-income customers, senior citizens, rural customers).
- Set clear objectives that counter bias: Design AI models with fairness constraints (e.g., equal resolution rates across demographics). Avoid optimizing solely for efficiency metrics like AHT (Average Handling Time).
- Human-in-the-loop: Keep humans involved in sensitive decisions (e.g., refund approvals, fraud escalations).

B. Testing Phase
- Include bias audits: Test model outputs across different customer segments. Use synthetic data to simulate edge cases (e.g., the same query from different regions).
- Scenario-based testing: Create test cases for AMC and UPI queries with varying tones, languages, and urgency levels. Check for consistency in response quality and resolution.
- Metric diversification: Track fairness metrics alongside performance metrics (e.g., resolution equity, escalation parity).

C. Monitoring Phase
- Set up real-time dashboards: Monitor call outcomes by customer segment, query type, and agent behavior. Flag anomalies (e.g., unusually short calls for UPI fraud cases).
- VOC feedback: Collect customer feedback post-call and correlate it with AI decisions. Use feedback to retrain models and adjust flows.
- Billing model alignment: Ensure billing models don’t incentivize biased behavior. Consider hybrid models (e.g., quality-adjusted call count) to balance efficiency and fairness.

How Do We Break the “Bias In, Bias Out” Cycle?
- Continuous learning: Regularly update models with new, unbiased data and feedback.
- Make it transparent: Make AI decision-making explainable to agents and supervisors.
- Assign ownership: As a check mechanism, assign accountability for bias monitoring and remediation.
- Cross-functional collaboration: Involve a friendly customer base, the compliance team, the QA team, and customer experience teams in AI governance.

3 points
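
As a rough illustration of the "resolution equity" metric mentioned under testing and monitoring, the Python sketch below computes the resolution rate per customer segment and the gap between the best- and worst-served segments. The record shape, segment labels, and the 10-point alert threshold are assumptions for the example, not part of the post.

```python
from collections import defaultdict


def resolution_rates(cases):
    """Resolution rate per segment.

    cases: iterable of dicts with "segment" and "resolved" keys
    (a hypothetical record shape for closed contact-center cases).
    """
    totals = defaultdict(int)
    resolved = defaultdict(int)
    for case in cases:
        totals[case["segment"]] += 1
        if case["resolved"]:
            resolved[case["segment"]] += 1
    return {segment: resolved[segment] / totals[segment] for segment in totals}


def parity_gap(rates):
    """Difference between the highest and lowest segment resolution rates."""
    return max(rates.values()) - min(rates.values())


# Illustrative data only: 4 of 5 metro cases resolved, 2 of 4 tier-2 cases resolved.
cases = (
    [{"segment": "metro", "resolved": True}] * 4
    + [{"segment": "metro", "resolved": False}]
    + [{"segment": "tier2", "resolved": True}] * 2
    + [{"segment": "tier2", "resolved": False}] * 2
)

rates = resolution_rates(cases)
gap = parity_gap(rates)
needs_review = gap > 0.10  # assumed alert threshold for a dashboard flag
```

The same pattern could be reused for escalation parity by swapping the `resolved` flag for an `escalated` flag.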

-

Keeping Track: Version Control for AI Flows & Prompts

Here's a methodical and practical way to keep track of versions, safeguard performance, and produce clear documentation for AI flows and prompts that change over time:

1. Set up a formal versioning system

Treat AI flows and prompts as code instead of making ad hoc changes:
- Save your prompt and flow definitions as text files (JSON, YAML, Markdown) in Git or a similar tool.
- Use Semantic Versioning to make changes easy to communicate. Major: a substantial change in the design's purpose or flow. Minor: new features or improved prompts. Patch: fixes or small modifications.
- Add commit messages that say what the change is meant to do and why it was made.
- Keep both the prompt text and the evaluation/test cases in the same repository so that you can track both the inputs and the outcomes over time.

2. Keep a prompt registry with metadata

Maintain a well-organized registry (this might be a spreadsheet, a Notion database, or an internal tool) that has:
- Version ID
- Release date
- Author/owner
- Description of changes
- Linked test results
- Performance indicators: cost, accuracy, latency, and satisfaction
- Rollback reference to the previous version

This registry is your source of traceability whenever you compare versions or roll back.

3. Test before you deploy

To make sure that upgrades are useful and not harmful:
- Sandbox testing: Run the new flow/prompt in a sandbox environment against synthetic and real historical test cases.
- A/B testing: Send a small share of traffic to the new version and see how it compares to the baseline version.
- Regression checks: Check that crucial KPIs don't drop for scenarios that are known to be good.
- When you can, automate tests by preparing a list of queries and expected outputs ahead of time and running them on both the old and new versions.

4. Document problems together with their causes

If you change something, be sure to record:
- The problem statement, e.g., users didn't understand step 3 in the flow.
- The hypothesis, e.g., simplifying the language should lead to more users completing the flow.
- The evidence after deployment, e.g., the recall rate improved from 72% to 84%.

You or another developer will be glad to know what was wrong when you look at older versions again.

5. Be ready to roll back

- Make sure that the last stable version is always straightforward to redeploy.
- Make rollback easy in your deployment process, ideally with only one click or command.
- Write down when and why rollbacks occurred. They can be just as informative as forward changes.

6. Balance stability with innovation

- Innovation track: an experimental branch where you can test new prompt-engineering techniques without putting the stability of production at risk.
- Stable track: flows that are production-ready and only get revisions after thorough testing.
- Changes from the innovation track should only be merged into stable when the metrics/performance are acceptable.

This is basically a two-speed development model: fast testing and slow release.

An example workflow

1. Create a new prompt in any AI tool.
2. Commit with a clear message: "Make step 3 clearer to cut down on drop-offs."
3. Run automated tests and have people review historical cases.
4. Send 10% of traffic to the new version for A/B testing.
5. If the metrics improve, merge into the main branch and bump the version.
6. Record notes and numbers in the prompt registry.

Conclusion

Managing different versions of AI flows and prompts requires the same amount of attention as building software. The best way to do this is to combine:
- Structured version control (e.g., Git and semantic versioning)
- Centralized documentation (a registry with performance logs and other information that is easy to access)
- Strong testing and rollbacks, such as sandboxing, A/B testing, and automated regression checks
- Two-speed development: a stable track for production and an innovation track for testing

This makes sure that every change can be logged, tested, and undone, which helps teams come up with new ideas quickly while keeping things stable. In short, always have a way back, write down the why, and test the what.

3 points
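
A small Python sketch of two steps from this post, under stated assumptions: bumping a semantic version string for a prompt, and a regression check that compares KPI values for the baseline and candidate versions. The function names, the "higher is better" convention for every KPI, and the sample numbers are illustrative and not taken from any particular tool.

```python
def bump_version(version, change):
    """Return the next semantic version for a 'major', 'minor', or 'patch' change."""
    major, minor, patch = (int(part) for part in version.split("."))
    if change == "major":
        return f"{major + 1}.0.0"
    if change == "minor":
        return f"{major}.{minor + 1}.0"
    if change == "patch":
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown change type: {change}")


def regressions(baseline, candidate, tolerance=0.0):
    """List KPIs where the candidate is worse than the baseline.

    Both arguments map KPI name -> value, and this sketch assumes higher is
    better for every KPI (invert cost or latency before passing them in).
    """
    return sorted(
        name
        for name, old_value in baseline.items()
        if candidate.get(name, float("-inf")) < old_value - tolerance
    )


next_version = bump_version("1.4.2", "minor")

# Hypothetical sandbox results for the current and the proposed prompt.
baseline_kpis = {"accuracy": 0.84, "satisfaction": 0.90}
candidate_kpis = {"accuracy": 0.80, "satisfaction": 0.92}
failed = regressions(baseline_kpis, candidate_kpis)
ready_to_merge = not failed
```

A registry row could then store `next_version`, the KPI dictionaries, and the previous version as the rollback reference.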

-

Keeping Track: Version Control for AI Flows & Prompts

When we first started using AI to track production downtime patterns, I built a simple flow that pulled operator inputs and generated quick insights for the shift leads. At one point, I decided to tweak the prompt that asked operators to describe the issue, just to make the descriptions clearer and easier for the technical team to understand. I thought it was an improvement. A week later, my phone was buzzing during a site visit because the reports coming out of the system suddenly had big gaps. It turned out my “clarity” change made operators give shorter answers that didn’t have enough detail for the analysis to work.

Since then, I’ve treated AI flows exactly the way I treat any process change in manufacturing:

- I save every version before I touch it: not just the file, but a quick note on what I changed and why.
- I run the new version in a controlled test with a small team, not the whole plant.
- If it performs better on the KPIs we care about, like accuracy, speed, and usability, it graduates to live. If it doesn’t, I roll it back in minutes because the last good version is sitting in my folder.
- I also keep two environments: the stable one for what’s proven, and a “playground” for experiments. That way, I can test bold ideas without worrying about disrupting a live process.

It’s the same mindset I use in CI projects: measure first, change deliberately, and always keep the option to go back. With AI flows, that discipline makes the difference between steady improvement and a messy guessing game.

3 points

-

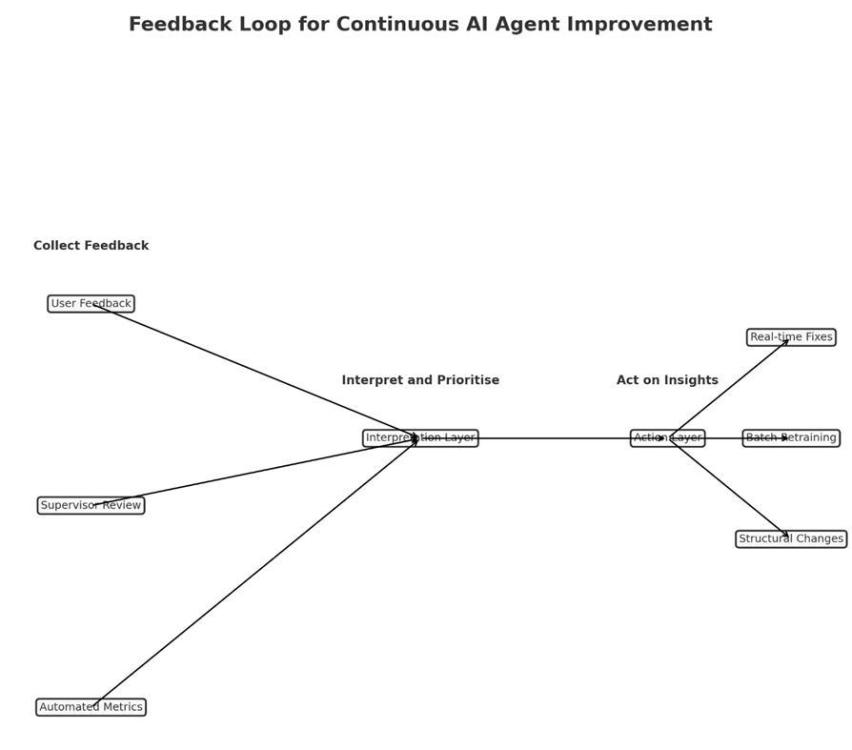

How Should Your AI Agent Learn From Real-World Feedback?

How I Would Build a Feedback System for an AI Customer Service Agent

It’s like hiring a new customer service rep: you would not throw them in front of customers on the first day and hope for the best. Instead, you would watch how they perform, collect feedback from customers and supervisors, and help them improve. An AI agent needs the same kind of ongoing training.

Three Ways to Collect Feedback

Ask Customers Directly but Keep It Simple: After the AI helps with a real question, show three quick buttons: thumbs up, neutral face, or thumbs down. Include a small text box so customers can add a quick note such as “Did not understand my mortgage question” or “Gave me the right answer but sounded robotic.” The key is to ask only after meaningful conversations, so customers are not continuously prompted after every single interaction.

Have Human Experts Check the AI’s Work: Once a week, experienced supervisors can review a sample of conversations, focusing on ones with poor ratings, long resolution times, or high-stakes topics like compliance. They will spot details that metrics miss, such as “The AI gave correct information but did not recognise that the customer was frustrated about a fee.” Reviewing a sample, rather than every conversation, keeps the process manageable.

Track the Numbers: Monitor essential metrics such as first-time resolution, the number of cases escalated to human agents, and average resolution time for each case. Occasionally, you may send test questions where you already know the correct answer to ensure the AI is still performing well.

Making Sense of the Feedback

Collecting feedback is easy; making it useful takes work. Start by grouping similar issues together, such as “Does not understand regional accents,” “Too formal when customers are upset,” or “Provides incorrect information.” Prioritise by severity. A calculation error is far more serious than sounding overly formal.
Look for patterns, for example, whether accuracy drops on Mondays when there is a backlog from the weekend.

Three Speeds of Improvement

1. Quick fixes can be made in a day or two, such as updating outdated information.
2. Regular updates can happen once a month, retraining the AI on the most common issues identified in the feedback.
3. Big changes, such as adding advanced document-reading capabilities like OCR, will take longer and require more planning.

Avoiding Feedback Overload

Too much feedback can overwhelm the team; focus on the interactions that reveal the most. Address urgent issues immediately and save routine improvements for the monthly review. Once an issue has been resolved and stays fixed for a few months, stop monitoring it closely and turn your attention to new challenges.

Keep People Involved

Let customers and employees know their feedback matters. If you improve the AI’s ability to answer product questions based on someone’s suggestion, say so: “We have improved how our AI handles product inquiries based on your feedback.” When employees see that their input leads to real improvements, they will continue offering valuable suggestions.

The Bottom Line

Maintaining an AI agent is like maintaining a car. You make small adjustments as needed, schedule regular check-ups, and only conduct major repairs when something fundamental needs to change. The goal is steady improvement, so the AI gets better every week without frustrating customers or overwhelming the team.

3 points
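
The idea of occasionally sending test questions with known answers can be sketched as a tiny "golden set" check in Python. This is only an illustration: `answer_fn` stands in for whatever call reaches the AI agent, the questions and answers are made up, and exact string matching is an assumed (and deliberately crude) way to score correctness.

```python
def golden_set_accuracy(answer_fn, golden_cases):
    """Fraction of known-answer questions the agent gets right.

    answer_fn: callable taking a question string and returning the agent's
        answer (a stand-in for the real agent call).
    golden_cases: list of (question, expected_answer) pairs.
    Matching is case-insensitive exact comparison, which is an assumption;
    real answers usually need a looser comparison.
    """
    if not golden_cases:
        raise ValueError("golden_cases must not be empty")
    correct = sum(
        1
        for question, expected in golden_cases
        if answer_fn(question).strip().lower() == expected.strip().lower()
    )
    return correct / len(golden_cases)


# Stub agent for demonstration; a real check would call the deployed agent.
canned_answers = {
    "What is the late payment fee?": "25 USD",
    "How many days is the refund window?": "30 days",
    "Which document proves address?": "Passport",
}


def stub_agent(question):
    return canned_answers.get(question, "I am not sure")


golden_cases = [
    ("What is the late payment fee?", "25 usd"),
    ("How many days is the refund window?", "30 days"),
    ("Which document proves address?", "Utility bill"),
]

accuracy = golden_set_accuracy(stub_agent, golden_cases)
```

Running the same golden set on a schedule and comparing the result week over week is one way to notice drift without reviewing every conversation.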

-

How Should Your AI Agent Learn From Real-World Feedback?

Let's walk through a real-world scenario to see how to build a strong, useful feedback loop for improving an AI agent after it has gone into operation. Take an AI customer service assistant at a financial services company. The assistant helps people with questions about managing their accounts, making purchases, and getting support with products.

Feedback Loop Design

The feedback loop has three layers:

- User-initiated feedback (explicit)
- System-observed feedback (implicit)
- Human-in-the-loop (HITL) review by a supervisor or lead

A centralized feedback processing pipeline receives feedback from each layer, categorizes it, scores it, and routes it to either:

- Automated learning modules, for low-risk adjustments
- Human review queues, for significant or sensitive issues

Ways to Collect Feedback

1. Explicit feedback from users

- A thumbs up/down or star rating after each interaction.
- Inline corrections or suggestions: a reply such as "That's not what I meant" triggers capture of the intended revision.
- Short post-session surveys for qualitative feedback.

Design tip: keep it lightweight and optional. Only ask for feedback after a significant interaction, or when a task is completed or fails.

2. Implicit behavioral feedback

- The user abandons a chat midway.
- The user repeats the same question or escalates to a human agent.
- Latency or hesitation (the user takes a long time to respond or abruptly changes the subject).

These signals are tagged and scored to locate the points where people struggle in the interaction.

3. Supervisor and audit feedback

- Notes on escalations to human agents, such as "AI got the request wrong."
- Periodic audits in which randomly sampled interactions are scored and grouped by quality issue (for example, tone mismatch or outdated information).
- Compliance tagging, especially in sensitive areas such as financial advice.

Feedback flagged by a supervisor carries more weight.

Processing and Learning from Feedback

Tiered processing pipeline:

- Auto-tagging and clustering: similar problems, such as "tone issues" and "entity mismatches," are grouped using heuristics and NLP classifiers.
- Risk-based decision: Can the model fix this itself through retraining? Does a template or prompt need updating? Or should it go to human developers?
- Feedback routing: low-risk fixes are applied automatically through prompt adjustment or retraining on the grouped samples. High-risk fixes must be reviewed and approved by a person before they are applied.

How to Avoid Feedback Overload

- Threshold-based sampling: only surface feedback when there is a pattern, such as five or more people complaining about the same item.
- Feedback prioritization: Priority Score = Impact (frustration score) × Frequency.
- Daily digest: team dashboards showing the most significant issues, possible solutions, and rollout plans.
- Feedback archiving windows: old feedback that has been dealt with is archived so it is not processed again.

Example in Action: Detecting Tone Mismatches

- Users rate the bot as "rude" in more than 10 sessions where it responds to late payments.
- A high escalation rate in those conversations is an implicit signal.
- The supervisor flags three interactions as "too formal."

The system combines these signals and proposes a prompt change to soften the tone:

- From: "You haven't paid yet. Please fix this right now."
- To: "It looks like your payment is late. Let's work together to fix it!"

The change was rolled out through A/B testing, monitored, and confirmed to be an improvement.

Summary: Why This Works

- Practical: uses real-world signals (both implicit and explicit), automates low-risk tasks, and brings people in when they are needed.
- Relevant: directly applicable to areas such as healthcare, HR support, financial services, and others.
- Balanced: teams keep improving without being overwhelmed, with built-in safeguards and human oversight.

3 points
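To make the overload controls concrete, here is a minimal Python sketch of threshold-based sampling combined with the Priority Score = Impact × Frequency rule from the post. The `Feedback` class, the 0 to 1 frustration scale, and the use of the mean frustration as the "impact" value are assumptions made for illustration; the post does not specify them.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Feedback:
    issue: str          # cluster label such as "tone issues" (hypothetical)
    frustration: float  # impact score, assumed here to lie between 0 and 1


def prioritize(items, min_reports=5):
    """Return (issue, priority) pairs, highest priority first.

    Issues reported fewer than `min_reports` times are not surfaced
    (threshold-based sampling). Priority = mean frustration x frequency.
    """
    grouped = defaultdict(list)
    for item in items:
        grouped[item.issue].append(item.frustration)

    ranked = []
    for issue, scores in grouped.items():
        frequency = len(scores)
        if frequency < min_reports:
            continue  # no pattern yet, keep it out of the digest
        impact = sum(scores) / frequency
        ranked.append((issue, impact * frequency))

    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    sample = [Feedback("tone issues", 0.5)] * 6 + [Feedback("entity mismatches", 0.9)] * 4
    print(prioritize(sample))
```

In this sample only "tone issues" reaches the five-report threshold, so it is the only item that would appear in the daily digest, even though the other issue has a higher frustration score per report.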

-

Control Phase

Why do those wins sometimes slip away in the Control phase?

There are several reasons why the gains from an improved process slip away in the Control phase. Here are some:

1. Thinking that identifying a solution is enough. Often the organization and project team fail to establish controls to sustain the solution and keep it consistent. Testing of the solution's impact and feasibility is skipped, only to find out later that the local staff applying it in their day-to-day tasks find it difficult to sustain.

2. Poor communication of intended changes. A formal handover of the improved process to its process owner and local management is essential, and even more so to the local staff who deal with the day-to-day work where the improvement took place. The local team must clearly understand, and be aligned on, what the problem was and why the improvement was made. Buy-in and ownership by the local team are vital to sustaining the solution. This means involving them from the start and throughout every phase of the project.

3. Inadequate training in the new condition. If local management and the staff who will implement and use the solution daily are not enabled, implementation is very likely to fail. Training and enabling local staff before full implementation builds knowledge, familiarity, and confidence, and makes success more likely. Adequate training puts local staff in a controlled environment where the learning curve is monitored and supervised. Under that supervision, old behaviors are guided and replaced with the new behaviors the change requires. Local management's involvement in training builds alignment, trust, and confidence with local staff.

4. New process not captured in a written procedure. A procedure gives guidance and clarity to the people doing the work. It should contain the process details and the working sequence broken down into elements, with hints and tips on how to perform each activity or task. An updated written procedure for the improved process is an essential supplement to the enablement of local staff and management alike. The procedure should be written so that it leaves no room for misinterpretation, in order to avoid human error.

5. No monitoring to check that the solution is working as intended. Local management's involvement in the handover of the improved process is essential. The project team should teach them management oversight along with tools and best practices such as the Control Plan, Process Control Chart, Gemba Kaizen, and process audits. A KPI that shows process performance where the improvement was made is equally important for sustainable management oversight.

What tools or techniques do you rely on to keep things on track and make sure the improvement sticks for good?

Managing the handover is crucial to sustaining a successful process improvement in the long term. Process documentation such as the to-be process flow chart, training and enablement plan, process aids and visual references, control plan, process control chart, updated procedure, FMEA (whenever applicable), and implementation plan should form part of the handover to local management. This is a structured way to make clear how the improved process works and how it should be monitored to sustain its financial and non-financial benefits.

Lastly, keeping the process improvement team, or at least its leader, involved in KPI monitoring and random process audits for three to six months after handover is another key to sustaining the improvement.

3 points

-

Can AI Be Trained to Learn from Continuous Improvement?

When we talk about continuous improvement as a concept, we refer to a constant work-in-progress mode of innovation, enhancement, and incremental progress: take every possible learning and loop it back as an input to further refine a product or process. The inputs include VOC, VOB, error types, new data, new patterns or behavior of a process or machine that can be studied, performance monitoring, and RCAs. Feeding these back into the system closes the loop of a continuous, self-learning improvement process with the help of Artificial Intelligence.

Natural language programming

Reinforcement learning: AI agents can learn by interacting with end users, picking up best practices from online forums, and receiving feedback (rewards or penalties). Over time, AI models or agents can improve their decision-making based on outcomes. Example: AI in customer service optimizing responses based on customer satisfaction scores. Also, in an AI agent environment, if a customer gives a low score or marks "not resolved" on a survey, the AI can ask whether the customer wants to be redirected to additional support.

Utilize online libraries: System-based or conventional training methods have focused content that is reviewed periodically, once or twice a year. Unlike traditional models trained once on a fixed dataset, online learning allows models to update continuously as new data arrives. This can be extremely useful in dynamic environments, such as a fraud detection system that improves whenever a new fraud pattern emerges, or email classification and query categorization.

Optimized Human-in-the-Loop (HITL): The AI backend can incorporate VOC on its output, or other human feedback, to refine and improve performance on a continuous basis, which is a key component of continuous improvement. For example, customer service agents correcting AI-generated email drafts help the model learn better phrasing, grammar, formatting, and tone.

Use concepts like A/B testing and feedback loops: A tried and tested AI system can test different strategies (e.g., email templates) and learn which ones perform better. Manual or online VOC and feedback loops help the system adapt to changing customer behavior or business goals.

For example, in a banking email customer service context, AI can learn from:

- VOC (NPS scores, complaints, and RCA)
- Agent corrections to AI-suggested replies, including checks that all queries in a multi-query email are answered
- Frequency and escalation patterns (e.g., which types of queries lead to dissatisfaction)
- Compliance checks (to avoid regulatory violations)

There will be challenges in guaranteeing continuous improvement in AI-based models, but if we study them enough they can be overcome. Challenges include:

- Propagation of systematic bias. For example, an AI model might favor a certain type of machine, a high-performance shift timing, or a certain region in terms of sales.
- Distribution or pattern shift, or drifting of parameters. Real-world situations change dynamically, so the AI has to be trained to adapt. Otherwise it will follow a fixed pattern and may no longer be effective.

In manufacturing or healthcare, or from a Six Sigma perspective, AI can:

- Conduct SPC, if we set it up with the required data at the initial stage.
- Analyze process deviations. If we find points or processes out of control, we implement solutions to bring the process back into control, and the AI agent can learn from such corrective actions.
- Suggest process changes to reduce defects, based on previous corrective actions taken by us or on information available online.
- Be better placed to predict process output, future failures, or improvement opportunities.

3 points
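As a rough illustration of the SPC point, the sketch below derives control limits from baseline data and flags new points that fall outside them, which is the kind of signal an AI agent could pair with the corrective action taken. It is a simplified sketch: it uses the baseline mean plus or minus three sample standard deviations, whereas a formal individuals chart would normally estimate sigma from the moving range. The function names and sample readings are hypothetical.

```python
from statistics import mean, stdev


def control_limits(baseline, sigmas=3.0):
    """Return (LCL, center line, UCL) computed from in-control baseline data."""
    center = mean(baseline)
    spread = stdev(baseline)  # sample standard deviation (a simplification)
    return center - sigmas * spread, center, center + sigmas * spread


def out_of_control(points, lcl, ucl):
    """Return (index, value) pairs for points outside the control limits."""
    return [(i, x) for i, x in enumerate(points) if x < lcl or x > ucl]


if __name__ == "__main__":
    baseline = [10.0, 10.2, 9.8, 10.1, 9.9, 10.0, 10.1, 9.9]
    lcl, center, ucl = control_limits(baseline)
    new_points = [10.0, 10.9, 9.95]
    print(out_of_control(new_points, lcl, ucl))
```

Each flagged point, together with the corrective action the team logged for it, could become one training example for the learning loop described in the post.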

-

What Happens When an AI Solution Solves the Wrong Problem?

MBBs have deep expertise in process optimization and structured problem-solving, and their role in a structured problem-framing approach is paramount. Let's look at this in the context of AI solution implementations in high-touch environments like contact centers.

How Mis-framing Leads to Ineffective Solutions

Contact center chatbot deployment scenario: A contact center faces long customer waiting times. To quickly reduce Average Speed of Answer (ASA), leadership launches an AI chatbot to handle frequently asked inquiries, aiming to ease pressure on live agents and thereby reduce ASA.

Surface-level problem statement: The project team stated the problem as "We have long wait times because our agents are overwhelmed. Let's implement a chatbot to handle FAQs and reduce wait times by 50%." The organization invested hundreds of thousands of dollars to develop an AI chatbot, train it on FAQs, and deploy it as the first point of contact (FPOC) for all customer inquiries. The chatbot itself was technically proficient, using NLP and ML algorithms to interpret customer requests.

What was missed: The team did not perform a thorough root cause analysis. Key problems included understaffing during peak hours (only 60% of required agents), inadequate training programs that left agents unprepared for complex product inquiries, fragmented knowledge management systems that forced agents to search multiple databases, and high employee churn (45% annually) from workplace stress and limited career advancement opportunities.

The Effects of Mis-Framing on AI Performance

Following the chatbot deployment, the AI gave generic responses to complex customer issues, causing greater frustration among those needing detailed technical support. Instead of reducing call volume, the chatbot generated additional calls from customers who sought clarification on the AI's responses or requested immediate escalation to human agents.

Findings based on BSI analysis: The pre-implementation baseline, calculated with the Bottleneck Severity Index formula (BSI = Volume × Cycle Time × (1 - First Time Right%) × Severity), showed:

- Volume: 1,200 calls per day
- Cycle Time: 8.5 minutes average handle time
- First Time Right: 65%
- Severity: 3.2 (scale of 1-5)
- Baseline BSI: 11,424

Post-chatbot implementation revealed:

- Volume: 1,350 calls per day (increased due to chatbot escalations)
- Cycle Time: 11.2 minutes (longer due to frustrated customers)
- First Time Right: 58% (decreased due to inadequate agent preparation)
- Severity: 3.8 (higher customer frustration)
- New BSI: 24,132 (111% increase)

The AI solution made matters worse: customer complaints increased, call deflection remained below 15%, net promoter score (NPS) declined further, and the organization faced increased operational costs due to higher call volumes and longer resolution times. On top of these consequences, wasted resources and loss of stakeholder trust add to the negative impact of mis-framing on AI effectiveness.

Suggested Practical Strategies for MBBs to Improve Problem Framing in AI Projects

a. Engage in structured problem statement development using LSS thinking and tools

- Use SIPOC and VOC to clarify process boundaries and understand demand drivers.
- Define CTQs and link them to customer pain points rather than convenience metrics like ASA.

b. Apply BSI for comprehensive bottleneck assessment

- Train the project teams in evaluating each BSI component:

| Component | Key MBB Questions |
| --- | --- |
| Volume | Is the call volume avoidable or failure demand (e.g., repeat issues, unclear policies)? |
| Cycle Time | Are agents slowed down due to poor tools or unclear procedures? |
| First Time Right % | What's the root cause of low FTR? Training, systems, or information gaps? |
| Severity | Are we prioritizing automation for high-impact or low-impact queries? |

- Trend analysis: monitor BSI on an ongoing basis to spot patterns and predict bottlenecks before they become critical. This enables teams to address root causes proactively instead of reacting to symptoms.
- Use Pareto analysis of BSI to identify the top drivers and guide the AI strategy accordingly.

c. Facilitate structured problem definition workshops and foster stakeholder engagement

- Run problem framing workshops that bring together diverse perspectives and stakeholders (operations, IT, HR, training, and customer experience).
- Use tools like affinity diagrams and root cause analysis techniques to identify underlying issues that may not be apparent to any single stakeholder group, before confirming the need for AI.
- Translate insights into well-structured problem statements (what is wrong, where, when, to what extent, and the impact on CTQ).
- Use the RACI matrix to ensure comprehensive problem understanding:
  - Responsible: include customer service managers and training coordinators, along with operations teams and customer experience specialists, in problem definition sessions.
  - Accountable: work closely with the project sponsor on project approvals.
  - Consult: gather input from frontline agents, customers, and IT teams.
  - Inform: keep executive leadership aware of project progress and findings.

d. Deploy control measures before automating

- Test hypotheses through small-scale pilots that check both the technical functionality and the business impact of the proposed solution before scaling AI.
- Have the pilots monitor the impact on leading indicators (FTR, escalation rate, post-chat survey scores) to validate that the proposed solution addresses the identified root causes.

In short, mis-framing problems in AI initiatives can lead to solutions that are technically accurate but operationally ineffective, which is why MBBs are tasked with disciplined diagnosis. Using BSI as a key metric identifies the real process friction points, guides the organization to ask the right questions before investing in AI, and helps ensure the final solution addresses the true constraints, improves customer experience, and delivers sustainable business value.

3 points
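For readers who want to rerun the numbers, here is a small Python sketch of the BSI formula exactly as quoted in the post (Volume × Cycle Time × (1 - First Time Right%) × Severity). The function and argument names are my own, and First Time Right is passed as a fraction. With the inputs listed in the post, the baseline works out to 11,424 and the post-deployment inputs multiply out to roughly 24,132, a little over double the baseline.

```python
def bsi(volume, cycle_time_min, first_time_right, severity):
    """Bottleneck Severity Index as defined in the post.

    first_time_right is a fraction (0.65 for 65%), severity is on a 1-5 scale.
    """
    return volume * cycle_time_min * (1 - first_time_right) * severity


def percent_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100


if __name__ == "__main__":
    baseline = bsi(1200, 8.5, 0.65, 3.2)
    post_chatbot = bsi(1350, 11.2, 0.58, 3.8)
    print(round(baseline), round(post_chatbot),
          round(percent_change(baseline, post_chatbot)))
```

Because the index is a plain product, each component can also be varied on its own to see which one drives the change, which fits the Pareto analysis suggested above.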

-

What Happens When an AI Solution Solves the Wrong Problem?

I have not been trained or certified as an MBB, but I can apply what I have learned in this course. Here's an example of an AI solution that is technically working as it should but has become part of the problem.

Consider a business with a customer service center whose customers are experiencing long wait times. In an effort to reduce the wait, they create a chatbot. After implementing this AI solution, they can see that call wait times have decreased significantly because the chatbot can "answer" quickly. So, technically, this AI solution is a success: wait times have dropped drastically. But the company begins to hear from customers how angry and frustrated they are, even more so than when they had to deal with long wait times.

The business failed to understand that what they should really have been trying to solve was customer satisfaction, not merely the symptom of long call wait times. The chatbot caused greater dissatisfaction because customers now have to make repeated calls (even though they don't have to wait): many "simple" calls are precursors to more complex issues that the bot could not handle, which forces customers to start over with an agent and leads to more frustration. Agents may also have to deal with more calls because the chatbot did not properly diagnose the underlying problem.

This situation wasn't created by the chatbot, but by those who didn't have the foresight to understand how the chatbot should have been designed. At the end of the day, technology or technical solutions such as AI will not be blamed for the problems that arise. Those who created the AI solution will be. You don't want to be that person.

Back to the original thought of creating an AI solution. The business thought the goal was merely to shorten long call wait times. But the real root of their issue was customer frustration and dissatisfaction. Their "AI solution" was focused on the wrong thing, and it even caused a deeper problem for them.

How could this have been prevented? By using the FRT process and documentation, which captures the Desired Effects (DE), the Undesired Effects (UDE), and the Negative Injections (NI) of any AI project and solution. FRTs help to envision the ideal future state of an AI solution and also to identify negative consequences proactively, BEFORE a dime gets spent on creating the solution. The FRT would have captured the root cause by thinking through the UDEs and creating NIs to answer them. Using the FRT process and documentation, along with a very thorough and thoughtful BRD, would have greatly increased the chances of an AI solution that not only lowers call wait times but, more importantly, raises customer satisfaction.

3 points

-

What Should AI Governance Look Like in a Business Excellence-Driven Organization?

AI Governance Framework for Business Excellence

AI integration is transforming how decisions are made and how operations are performed in organizations. As AI automates more business functions, strong governance becomes essential for responsible deployment. An effective, well-structured governance framework builds trust, reduces risks, and aligns AI advancements with organizational goals, regulations, and stakeholder expectations while maintaining competitive agility.

A. Proposed AI Governance Framework Elements

- Ethical guidelines
  - A set of clear, non-negotiable principles that guide all AI initiatives, translating company values into technical requirements.
  - Define acceptable use cases and explicitly prohibit any unethical or biased applications.
  - Use reference frameworks such as the EU AI Act and NIST AI RMF to translate these principles into policies and decision logs that record how each AI solution meets the guidelines.
- AI governance structure and oversight committee
  - A council of senior executives with cross-functional representation, responsible for strategic AI direction and policy approvals.
  - The panel reviews AI projects not only against business objectives but also against ethical standards and societal impact.
  - It conducts periodic audits and model validations, including ad-hoc sessions for urgent issues.
- Data management guardrails
  - It is imperative to maintain an AI repository with details of the AI models, training data sources, and intended usage.
  - Monitor data quality, lineage, and privacy controls to ensure compliance with the adopted frameworks and the existing data-governance policies.
- Risk assessment and mitigation
  - Categorize potential risks under operational, reputational, legal, and ethical headings, each with its mitigation strategies.
  - A tiered framework for risk assessment (low, medium, high) allows for agility by matching the level of oversight to the potential impact of each AI project, so that low-risk projects aren't slowed by unnecessary governance while high-risk projects receive intense scrutiny.
  - The protocol also covers real-time tracking of AI performance metrics, bias emergence, and unexpected outcomes, with incident response procedures for addressing AI system issues.
- Stakeholder engagement and communication
  - Keep employees and end-users, customers, and external advisors in the loop during design and after deployment of AI projects, so that AI is not developed and deployed in a silo.
  - Provide comprehensive training so teams understand AI capabilities, limitations, and their role in governance.
  - Publish an explanation of each AI model's purpose and performance, with the relevant disclosures, to build trust with customers and partners.
- Performance and accountability mechanisms
  - Define AI performance metrics to measure the accuracy, fairness, and business impact of AI systems.
  - Record AI decision-making processes, model changes, and associated governance activities.

B. Stakeholders for AI Governance

| Stakeholder | Role and Responsibilities |
| --- | --- |
| Chief Ethics Officer / Governance Lead | Manages the ethical application of AI and chairs the AI Governance Committee. |
| IT / Data Science Teams | Ensure models are technically robust, monitored, explainable, and secure. |
| Business Process Owners | Validate AI outputs against business goals and customer outcomes. |
| Legal & Compliance | Ensure AI systems comply with regulations, data and privacy laws, and any ISO standards and AI frameworks, as applicable. |
| HR & Change Management | Conduct training, initiate communication, and manage change readiness for AI-impacted teams. |
| Internal Audit | Regularly review model performance, risk, and controls. |

C. Balancing Agility and Control

- Real-time monitoring and alerts
  - Use monitoring dashboards to track live model performance, flag issues, and trigger alerts for intervention, thereby closing the gap between operations and governance.
- Controlled pilots and A/B testing
  - Iteratively test AI models in a secure environment before deployment to catch issues during development itself.
- Living documents and fact sheets
  - Document the assumptions, limitations, training and retraining cycles, and model versions for transparency and control.
- Continuous feedback loop
  - Feed input from users and business scenarios into model retraining to support continuous improvement and ensure alignment with organizational objectives.

In conclusion, an effective AI governance framework anchors three principles: transparency, by laying down clear guidelines and documentation; accountability, by defining roles and responsibilities and putting the required controls in place; and continuous improvement, through real-time monitoring, feedback, and evolution of the framework based on best practices and stakeholder needs. By adopting globally established standards and frameworks in AI governance, organizations can harness the transformative power of AI without compromising ethical or operational integrity, while achieving their business excellence goals of quality, cost optimization, and superior customer satisfaction.

3 points

-

Are Your Metrics Ready for an AI-Enabled Organization?

Since each of us works with data and metrics, and AI is increasingly being integrated into our processes and work, it is a worthwhile investment to go through all the answers. You will get ideas on what you need to focus on and what you can let go. The best answer was provided by Sargun Diwan. Well done!

3 points

-

What Should AI Governance Look Like in a Business Excellence-Driven Organization?

The traits of a good AI Governance Model are listed below:

1. Clear and Fair: Identify and reduce any biases in the model, provide options for human intervention in big decisions, and ensure POCs are defined and known to all stakeholders in case something goes wrong.
2. Strategic Fit: Ensure every AI deployment directly supports the organization's strategic goals and delivers clear value.
3. Managed Lifecycles: AI systems should have a defined route from initial conception to continuing upkeep. This calls for extensive testing prior to deployment, ongoing performance monitoring, and a defined procedure for modifications or even retirement. Everything must be documented accurately.
4. Training: Ensure that relevant teams are well trained to work effectively with the AI. Teams also need to be aware of what the AI can and cannot do.

Stakeholders of an ideal AI Governance Model:

1. Leadership
2. AI oversight group
3. Ethics and compliance team
4. Internal auditors

Mechanisms to ensure both agility and control:

1. Smart risk assessment: a design and approval framework aligned with the risk quotient of the deployment.
2. Standardized tools and reusable modules: provide pre-approved tools and reusable building blocks to cut down redundant work.
3. Build in governance from day 1.
4. Centralized guidance from the AI oversight team.
5. A clear and defined RACI.
6. Human-AI collaboration for high-risk decisions.

3 points

-

Are Your Metrics Ready for an AI-Enabled Organization?

Traditional KPIs such as productivity, quality, cost, delivery, and efficiency should not drop out of management's view. Instead, the targets associated with them should be adjusted accordingly. Customer and employee satisfaction surveys, however, can be carried out through AI, leveraging its capability to detect emotion and interpret facial expressions and body language, which are difficult for the human eye to decipher and prone to certain biases.

To track AI's real performance and value, I recommend Input Data Integrity and Bias Detection as two additional KPIs that management should keep in view. These are crucial for AI model creation and for accurate training and analysis, and they affect the AI's recommendations in the business decision-making process.

3 points

-

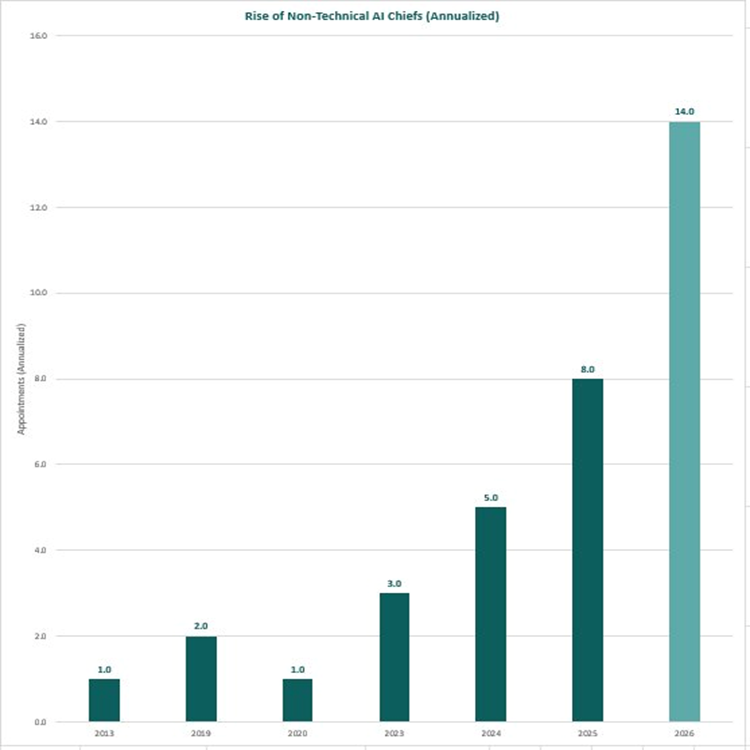

Non-Technical Leaders Are Taking Over AI Chief Roles — And the Numbers Are Accelerating

For years, the assumption was that “Chief AI Officer” meant a machine learning PhD, a data scientist, or a software engineer who could build models. That assumption is rapidly being dismantled.

A clear trend is emerging across global enterprises, law firms, governments, and financial institutions: non-technical business leaders (lawyers, consultants, operations executives, economists, and brand strategists) are being appointed to the most senior AI leadership roles. And the pace is accelerating dramatically.

The Numbers Don’t Lie

| Year | New Non-Technical AI Chief Appointments | Annualised Rate |
| --- | --- | --- |
| 2013 | 1 | 1 |
| 2019 | 2 | 2 |
| 2020 | 1 | 1 |
| 2023 | 3 | 3 |
| 2024 | 5 | 5 |
| 2025 | 8 | 8 |
| 2026 | 6 (Jan–Apr only) | 14 (annualised) |

Rise of Non-Technical AI Chiefs (Annualised)

In just the first four months of 2026, six non-technical leaders have already been appointed to Chief AI Officer or equivalent roles. Annualised, that projects to 14 appointments for the full year, nearly double the 2025 figure and seven times the rate seen in 2019. This isn’t a blip. It’s a structural shift.
Who Is Actually Getting These Roles?

Below are documented appointments of non-technical leaders to Chief AI Officer and equivalent roles across major organisations:

| Organisation | Role | Background | Date |
| --- | --- | --- | --- |
| Herbert Smith Freehills Kramer (major international law firm) | Global Chief AI Officer | Lawyer (tech transactions, leveraged finance, legal innovation); former Senior Counsel at Mastercard, Global Head of Innovation at McKinsey Legal | November 2025 |
| WTW (Willis Towers Watson) | Chief AI Officer | Co-founder and former CEO of Newfront (AI-native insurance brokerage); MBA Stanford; finance and scaling expertise (non-coder) | April 2026 |
| Louisville Metro Government | Chief AI Officer | 25+ years in enterprise transformation and AI upskilling at Intel; former paralegal; English/paralegal degrees | November 2025 |
| Microsoft | Chief Responsible AI Officer | Law, Public Policy | 2019 |
| Goldman Sachs | Chief Information Officer (AI-led transformation) | Business + Tech Strategy (not pure coding role) | 2019 / 2022 |
| O.C. Tanner | Chief Technology Officer (AI-led strategy) | Business Strategy | December 2013 |
| Deloitte | Global AI Institute Leader | Business, Consulting | ~2020 |
| NTT DATA ($30B+ global tech services) | CEO & Chief AI Officer | Former McKinsey Senior Partner (TMT); MS Industrial Engineering (Stanford), B.Tech Mechanical Engineering (IIT Bombay); management consulting | June 2024 / September 2025 |
| Anthropic | Chief AI Readiness Officer / COO | Former founding COO of Google DeepMind; prior roles at Coursera (COO), Kleiner Perkins, Intel; engineering degree | ~2026 |
| IFS Nexus Black (industrial AI) | CEO | Former Chief Product Officer for LegalTech at Thomson Reuters; AI product strategy at GfK and Sage; founded AI for Good UK; MA Advanced Computer Science | July 2025 |
| HSBC | Chief AI Officer | COO of HSBC Corporate and Institutional Banking; nearly 20 years in operational and commercial banking roles | April 2026 |
| KPMG | Vice Chair / Global Head, AI & Digital Innovation | Former Head of KPMG US Consulting (15,000+ people); MBA and Master's in Professional Accounting | October 2023 / August 2025 |
| Littler Mendelson (employment law firm) | Chief Artificial Intelligence Officer | Nearly 15 years of employment law experience; led practice innovation at national employment law firm | April 2026 |
| Edelman UK | Chief AI Officer, UK | Communications and brand strategy executive; led integrated campaigns for global consumer and tech brands; Cannes Lions awards | September 2024 |
| LVMH | Chief Data and AI Officer | Director of Strategy and Innovation for EMEA at Nike; strategy and marketplace operations background | March 2024 |
| U.S. Department of Homeland Security | Chief AI Officer & CIO | Cyber and intelligence operations (U.S. Marine Corps); operational and intelligence background, not AI research | March 2025 |
| Wells Fargo | Head of Artificial Intelligence (also Co-CEO, Consumer Banking & Lending) | Former CEO of Consumer & Small Business Banking; former Head of Wells Fargo Technology; appointed from a business-leader seat | November 2025 |
| Mastercard | Chief AI and Data Officer | Former EVP of Corporate Strategy and M&A at Mastercard; corporate strategy and deals background, not engineering | 2024 |
| New York State (Office of Information Technology Services) | Chief AI Officer | Researcher at United Nations University; founded UN's first AI policy research lab; AI policy and governance background, not engineering | January 2026 |
| State of Oklahoma (OMES) | Chief Artificial Intelligence and Technology Officer | BBA in Management Information Systems; career in technology modernisation and business transformation across Fortune 500 and public-sector; business-and-operations rather than coding background | November 2025 |
| U.S. Department of Agriculture | Chief AI Officer (also Chief Data Officer) | Started in private-sector biotech; led data analytics team providing genomic services; data strategy and analytics leadership rather than ML/coding | 2023 |
| U.S. Department of Energy | Acting Chief AI Officer | Former Director for Technology and National Security at the White House NSC; policy and national security background, not engineering | December 2023 |
| U.S. Department of Labor | Chief AI Officer | Earlier Deputy CAIO at DOL; over a decade at the Bureau of Labor Statistics; operations and program management rather than AI research | June 2025 |
| U.S. Social Security Administration | Chief AI Officer (also Deputy CIO) | More than 20 years at SSA in IT operations and enterprise leadership; agency-veteran operational profile | 2024 |
| Morgan Lewis (global law firm) | Chief AI & Knowledge Officer | Former Chief Administrative Officer at a global law firm; business operations and process design (non-technical) | 2025/2026 |
| Generali Investments | Chief AI Officer | PhD/MSc in international macroeconomics; Professor of Economics; former Director of Research; senior roles at World Bank/UN PRI; economics/policy/research focus | April 2026 |

Why Is This Happening?

The role of a Chief AI Officer has evolved. In its earliest incarnation, it was about building: training models, architecting data pipelines, writing production code. Today, in most enterprises, the hard technical work is being done by vendors (OpenAI, Google, Anthropic, Microsoft) or by internal engineering teams.

What organisations actually need at the C-suite level is someone who can:

1. Drive adoption: persuading reluctant stakeholders, managing change at scale
2. Govern responsibly: navigating legal, ethical, regulatory, and reputational risks
3. Connect AI to business outcomes: translating capability into commercial value
4. Work across functions: bridging legal, HR, finance, operations, and technology

These are leadership and judgement skills. Not coding skills.

The lawyers, consultants, and operators being appointed to these roles are not naive about AI. Many have deep domain expertise, years of AI-adjacent experience, and strong track records leading transformation. They simply did not build the models themselves.

The Acceleration Matters

The annualised 2026 figure of 14 is not just a data point. It reflects a tipping point. Organisations that once waited for a “perfect” technical candidate are now actively choosing experienced business leaders and structuring the role around strategy, governance, and change management rather than engineering.

If this trajectory holds, 2026 will see more non-technical AI Chief appointments than all years from 2013 to 2024 combined. The era of the non-technical AI Chief has arrived.

What do you think is driving this shift? Are organisations right to prioritise business acumen over technical depth in these roles? Share your perspective below.

2 points

-

When Should People Trust an AI’s Recommendation — and When Should They Override It?

Process Context

My team also manages the central master data for 50+ plants today, and this will grow to 70+ plants by 2027. Two people on my team handle the data management for the entire fleet. Every time we commission or acquire a new plant, we need to align its material master with our fleet database to avoid duplication, planning errors, and wrong spares being introduced into SAP. In a typical post-commissioning or post-acquisition exercise, we review at least 5,000 incoming material records against an existing fleet master of 345,000 items. In practice, each item review takes my team at least 6 minutes without AI.

The AI-enabled Process

To solve this, I built a Python + AI solution using a MiniLM semantic model combined with rule-based checks. The program classifies each incoming item into one of three categories:

· Auto: high-confidence match, mapped and uploaded directly into SAP.
· Review: ambiguous match, reviewed by the master data team.
· Reject: no valid match; the program generates a new master data creation template for my team to load directly into SAP.

As you can see, AI does not create master data blindly in this case: it recommends, and the team decides.

When We Trust The AI

I defined clear rules after testing the model for almost 10 days with millions of lines. An item qualifies when semantic similarity is high and critical identifiers (model number, size, rating) match, and when descriptions and attributes are complete and consistent. One more rule limits this path to standard, low-risk categories and excludes verified MRP items. Items that pass these rules flow straight into the Auto category and are uploaded without manual touch.

When We Override The AI

The team deliberately reviews an item when similarity scores are close across multiple candidates, or when technical digits conflict even if text similarity is high. We also review items that are maintenance critical or safety critical, and items with poor descriptions. In all such cases, the team’s priority is correctness, not speed.

Safeguards That Keep The Balance

We have built simple controls to avoid both blind trust and excessive overrides:

· Strict thresholds for Auto classification
· Mandatory team review for all Review cases
· Spot audits of Auto mappings
· Tracking and analysis of override patterns to improve the program
· Clear ownership: AI suggests, the team decides

Impact In Real Numbers

With this program, my team completes a 5,000-item migration in 10 days in total instead of 2 months. The 10 days break down as follows:

· Data setup + AI pre-load + first analysis: 0.5 day
· SAP mapping for the Auto category: 1 day
· Manual review of the Review category: 7 days
· New master data setup for the Reject category: 1.5 days

This has really improved my team’s output and bandwidth and reduced the onboarding risk for new plants. Best of all, it allows two people to scale this work for our growing fleet.

Bottom Line

I trust AI where signals are strong and mistakes are low impact; I override it where ambiguity or risk is high. We are improving the overall process, and the idea isn’t to remove people from it but to make sure people spend time only where judgement actually matters.

2 points
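The Auto / Review / Reject triage described in the master-data post above could be sketched roughly as follows. This is only an illustration under stated assumptions: the threshold values, the `Candidate` fields, and the `triage` function are hypothetical names and numbers, not the author's actual program, and the similarity score is assumed to come from an embedding model such as MiniLM.

```python
from dataclasses import dataclass

# All thresholds below are illustrative assumptions, not values from the post.
AUTO_MIN_SCORE = 0.90    # minimum similarity for an Auto match
REJECT_MAX_SCORE = 0.60  # below this, treat the item as having no valid match
MIN_MARGIN = 0.05        # required gap between the best and second-best candidate


@dataclass
class Candidate:
    fleet_id: str
    score: float             # semantic similarity in [0, 1]
    identifiers_match: bool  # model number, size and rating all agree


def triage(candidates: list[Candidate], is_critical: bool,
           description_complete: bool) -> str:
    """Return 'Auto', 'Review' or 'Reject' for one incoming material record."""
    if not candidates:
        return "Reject"
    ranked = sorted(candidates, key=lambda c: c.score, reverse=True)
    best = ranked[0]
    if best.score < REJECT_MAX_SCORE:
        return "Reject"
    # Scores that are close across candidates are sent to human review.
    runner_up = ranked[1].score if len(ranked) > 1 else 0.0
    clear_winner = best.score - runner_up >= MIN_MARGIN
    if (best.score >= AUTO_MIN_SCORE and best.identifiers_match
            and clear_winner and description_complete and not is_critical):
        return "Auto"
    # Everything ambiguous, conflicting, critical or poorly described is reviewed.
    return "Review"
```

Under this sketch, anything that fails a single Auto condition falls back to Review rather than Reject, which mirrors the post's stated priority of correctness over speed.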

-

How Do You Ensure an AI-Enabled Process Continues to Work as Intended Over Time?

Domain: Aerospace MRO - Engine shop for CFM56/LEAP Turbofans for Performance Restoration (€ 220 Million yearly turnover, approx. 1,800 shop visits a year, AI rolled out since late 2025 to predict HPT module rework needs based on borescope images, oil debris analysis, and in-service data)

Specific AI-enabled process: Predictive HPT Blade Rework Forecasting

The AI recommends whether the module needs full blade rework, partial rework (only the tips), or none, with the goal of eliminating unnecessary shop time and expense without losing the zero-escape target on critical parts. It went live on all CFM56/LEAP visits in Q1 2026 and initially delivered an average 18% reduction in TAT on HPT modules.

How we ensure & monitor the process continues to deliver intended outcomes

We treat this AI-human decision loop as a live control system and continue to develop it over time rather than as a one-time install. The focus is on sustainable business value: TAT savings, cost per visit going down, safety, and zero quality escapes.

What we monitor (daily / weekly / monthly)

1. Leading indicators (daily dashboard – shop floor + engineering)
· Prediction accuracy of AI vs. actual rework result (confusion matrix updated every 50 engines).
· Override rate of AI suggestions by technicians / engineers (accept, tweak, reject AI recommendation).
· Confidence score variation (how often is the model <80% sure?)
· Data drift indicators: distributional shift of input variables (e.g. iron particles in oil, borescope crack density, EGT margin and so on)

2. Lagging business outcomes (weekly review – operations + finance)
· HPT module turnaround time variance (target < 35 days).
· Rework cost per engine vs. baseline
· Escape rate / quality holds on HPT (target 0)
· Spare parts consumption vs. forecast (over/under-stocking signals)

3. Model health metrics (monthly deep dive – MBB + data team)
· Population stability index (PSI) on key inputs (>0.25 = moderate drift, >0.5 = severe).
· Calibration plot (predicted probability vs observed rework rate)
· Feature importance drift (which inputs are most important to the model now vs at launch)

How we react when the going starts getting tough

We have a three-level escalation protocol:

Level 1 – Minor drift (weekly trigger)
· Override rate >25% or confidence <75% on >20% of cases.
Response:
· Immediate feedback loop, i.e. every override by engineers requires a 1-click reason (dropdown + optional voice note).
· Retrain model based on last 100 engines + override justifications.
· Notify shop team lead; usually fixed within 1-2 weeks

Level 2 – Business impact emerging (weekly trigger)
· TAT +3 days or rework cost +8% vs rolling 4-week average
· OR escape / hold on HPT (even one)
Response:
· Hold AI recommendations and return to manual disposition within 48 hours
· Root Cause A3 with MBB: Data drift? New failure mode? Change in user behavior?
· Temporary rule: AI confidence > 90% required for auto-accept
· Full model retrain + validation on hold-out set before re-release

Level 3 – Systemic failure (monthly or immediate on escape)
· PSI >0.5 on critical inputs OR calibration slope deviates >15%
· OR sustained TAT/cost > 15%
Response:
· Full pause of AI in production
· Independent audit: data lineage, labeling drift, concept drift
· Notification to the regulator of any escape which occurred
· Re-baseline from scratch or switch to a fall-back approach (manual and old rules)
· Post-mortem shared across sites; we’ve had one Level 3 (new low-sulfur fuel changed oil debris patterns in Q3 2026)

Practical setup we use today
· Automated alerts via Teams/Slack when thresholds are breached
· Monthly “AI Health Review” (30-min standing meeting: MBB, ops manager, data lead)
· Quarterly external benchmark against OEM data (CFM/Pratt)
· Annual review of AI usage (EASA Part-145 requirement)

Bottom line from the teardown bay

AI drift isn’t an ‘if’ but a ‘when’. In MRO, the price of slow degradation can be a long turn-around time, excessive spares, or even a failure in service. We monitor our AI the way we would monitor an engine: performing routine checks every day, and only grounding it completely when we have to. The process stays alive because we never treat the model as “set and forget”.

2 points
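The population stability index used in the monthly model health check above can be computed along these lines. This is a minimal sketch, not the shop's actual tooling: the function names `psi` and `drift_label`, the bin edges, and the sample values are hypothetical, while the 0.25 and 0.5 cut-offs are the ones quoted in the post. PSI is taken here in its usual form, the sum over bins of (current share - baseline share) × ln(current share / baseline share).

```python
import math


def psi(baseline, current, edges):
    """Population stability index of `current` vs `baseline` over shared bin edges."""
    n_bins = len(edges) - 1

    def shares(values):
        counts = [0] * n_bins
        for v in values:
            for i in range(n_bins):
                is_last = i == n_bins - 1
                # Bins are half-open [lo, hi); the last bin also includes its upper edge.
                if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                    counts[i] += 1
                    break
        total = sum(counts)  # values outside the edges are ignored
        if total == 0:
            raise ValueError("no values fall inside the bin edges")
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / total, 1e-6) for c in counts]

    b, c = shares(baseline), shares(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


def drift_label(value):
    """Map a PSI value to the drift bands quoted in the post."""
    if value > 0.5:
        return "severe"
    if value > 0.25:
        return "moderate"
    return "stable"


# Hypothetical example: an input whose readings shift toward the higher bin.
launch = [1] * 50 + [3] * 50
today = [1] * 10 + [3] * 90
print(drift_label(psi(launch, today, edges=[0, 2, 4])))
```

Keeping the bin edges fixed at launch matters in this sketch: recomputing them from current data would hide exactly the shift the index is meant to expose.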

-

How Should Organizations Certify AI Before It Goes Live?

Here are the winners of Q821.

🏆 Winner – Adil Khan (Aerospace Heat Treatment): outstanding NADCAP-compliant AI certification plan with controlled trials, Cpk > 1.67, and 4-tier sign-off governance.
🥈 Runner-up – Sattar Mohammad Imran (Complaint Chatbot): comprehensive multi-departmental certification and oversight framework ensuring fairness and compliance.
🥉 Special Mention – Arul Palani (AI Code Assistant): strong readiness and policy-based certification approach using ISO/IEC 42001 standards.

2 points

-

How Can AI Make Every Customer Interaction Feel Personal?

AI is well capable of making every customer interaction feel personal by merging data, context and empathy at scale without crossing the boundaries of trust. Better customer interactions with less customer effort and high CSAT scores will help the business expand.

Customers expect the following from any service:
· Quick responses to any queries raised
· Accurate responses, so they do not have to ask repeat questions
· Faster query resolution with minimum interactions
· Easy interaction with a sense of personal touch, so they feel valued, heard and understood
· Trust on data privacy

AI helps in better interaction with customers by:
· Collecting context with consent, pulling only the required data and not using hidden data
· Knowing the customer better and in depth by building a customer profile from past purchases, past interactions or support tickets, browsing history, etc.
· Using smart prompts for bots that prefill a summary and acknowledge earlier context, showing respect and care
· Making the customer feel valued by predicting needs and suggesting next steps that help resolve the query before the customer asks the question
· Switching tone to match the customer’s tonality and empathizing based on sentiment analysis

For example, the Accounts Payable Helpdesk is the function within the Procure to Pay (PTP) process that handles queries from vendors and internal or external stakeholders related to invoices processed, payments made and POs created.

Below are a few AI capabilities that can be used in the AP Helpdesk across PTP:
· Build AI chatbots for automated query handling with 24/7 support and fewer repetitive tickets
· Route each ticket to the required department based on the query raised, for faster and more convenient resolution
· Capture invoice data from documents or emails using OCR and NLP
· Proactively inform vendors or the required stakeholders of invoice status, thereby reducing escalations
· Resolve complex queries by searching policies, SOPs, etc. using intelligent search and a knowledge base
· Adjust the tone based on the customer’s response and urgency, thereby building trust
· Flag invalid invoices and duplicate payments, thereby preventing financial losses

2 points

-

Can AI Turn Knowledge Into a Competitive Edge?