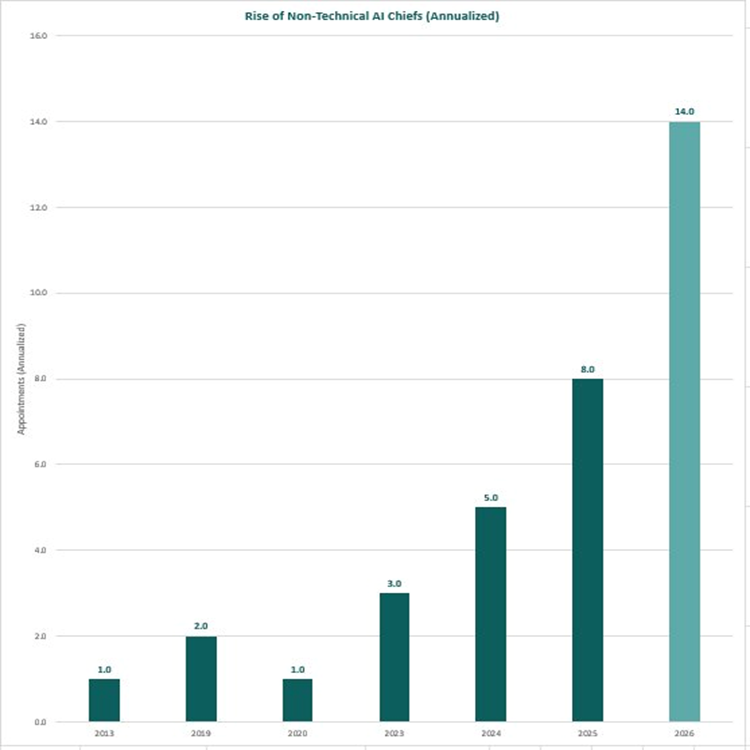

For years, the assumption was that “Chief AI Officer” meant a machine learning PhD, a data scientist, or a software engineer who could build models. That assumption is rapidly being dismantled. A clear trend is emerging across global enterprises, law firms, governments, and financial institutions: non-technical business leaders (lawyers, consultants, operations executives, economists, and brand strategists) are being appointed to the most senior AI leadership roles. And the pace is accelerating.

## The Numbers Don’t Lie

| Year | New non-technical AI Chief appointments | Annualised rate |
|---|---|---|
| 2013 | 1 | 1 |
| 2019 | 2 | 2 |
| 2020 | 1 | 1 |
| 2023 | 3 | 3 |
| 2024 | 5 | 5 |
| 2025 | 8 | 8 |
| 2026 | 6 (Jan–Apr only) | 14 (full-year projection) |

*Figure: Rise of Non-Technical AI Chiefs (Annualised)*

In just the first four months of 2026, six non-technical leaders have already been appointed to Chief AI Officer or equivalent roles. A simple straight-line extrapolation (6 × 12 ÷ 4) would give 18 for the full year; even the more conservative projection of 14 used in the table is nearly double the 2025 figure of 8 and seven times the 2019 figure of 2. This isn’t a blip. It’s a structural shift.
## Who Is Actually Getting These Roles?

Below are documented appointments of non-technical leaders to Chief AI Officer and equivalent roles across major organisations:

| Organisation | Role | Background | Date |
|---|---|---|---|
| Herbert Smith Freehills Kramer (major international law firm) | Global Chief AI Officer | Lawyer (tech transactions, leveraged finance, legal innovation); former Senior Counsel at Mastercard; former Global Head of Innovation at McKinsey Legal | November 2025 |
| WTW (Willis Towers Watson) | Chief AI Officer | Co-founder and former CEO of Newfront (AI-native insurance brokerage); Stanford MBA; finance and scaling expertise (non-coder) | April 2026 |
| Louisville Metro Government | Chief AI Officer | 25+ years in enterprise transformation and AI upskilling at Intel; former paralegal; English and paralegal degrees | November 2025 |
| Microsoft | Chief Responsible AI Officer | Law, public policy | 2019 |
| Goldman Sachs | Chief Information Officer (AI-led transformation) | Business and tech strategy (not a pure coding role) | 2019 / 2022 |
| O.C. Tanner | Chief Technology Officer (AI-led strategy) | Business strategy | December 2013 |
| Deloitte | Global AI Institute Leader | Business, consulting | ~2020 |
| NTT DATA ($30B+ global tech services) | CEO & Chief AI Officer | Former McKinsey Senior Partner (TMT); MS Industrial Engineering (Stanford), B.Tech Mechanical Engineering (IIT Bombay); management consulting | June 2024 / September 2025 |
| Anthropic | Chief AI Readiness Officer / COO | Former founding COO of Google DeepMind; prior roles at Coursera (COO), Kleiner Perkins, Intel; engineering degree | ~2026 |
| IFS Nexus Black (industrial AI) | CEO | Former Chief Product Officer for LegalTech at Thomson Reuters; AI product strategy at GfK and Sage; founded AI for Good UK; MA Advanced Computer Science | July 2025 |
| HSBC | Chief AI Officer | COO of HSBC Corporate and Institutional Banking; nearly 20 years in operational and commercial banking roles | April 2026 |
| KPMG | Vice Chair / Global Head, AI & Digital Innovation | Former Head of KPMG US Consulting (15,000+ people); MBA and Master's in Professional Accounting | October 2023 / August 2025 |
| Littler Mendelson (employment law firm) | Chief Artificial Intelligence Officer | Nearly 15 years of employment law experience; led practice innovation at a national employment law firm | April 2026 |
| Edelman UK | Chief AI Officer, UK | Communications and brand strategy executive; led integrated campaigns for global consumer and tech brands; Cannes Lions awards | September 2024 |
| LVMH | Chief Data and AI Officer | Director of Strategy and Innovation for EMEA at Nike; strategy and marketplace operations background | March 2024 |
| U.S. Department of Homeland Security | Chief AI Officer & CIO | Cyber and intelligence operations (U.S. Marine Corps); operational and intelligence background, not AI research | March 2025 |
| Wells Fargo | Head of Artificial Intelligence (also Co-CEO, Consumer Banking & Lending) | Former CEO of Consumer & Small Business Banking; former Head of Wells Fargo Technology; appointed from a business-leader seat | November 2025 |
| Mastercard | Chief AI and Data Officer | Former EVP of Corporate Strategy and M&A at Mastercard; corporate strategy and deals background, not engineering | 2024 |
| New York State (Office of Information Technology Services) | Chief AI Officer | Researcher at United Nations University; founded the UN's first AI policy research lab; AI policy and governance background, not engineering | January 2026 |
| State of Oklahoma (OMES) | Chief Artificial Intelligence and Technology Officer | BBA in Management Information Systems; career in technology modernisation and business transformation across Fortune 500 companies and the public sector; business-and-operations rather than coding background | November 2025 |
| U.S. Department of Agriculture | Chief AI Officer (also Chief Data Officer) | Started in private-sector biotech; led a data analytics team providing genomic services; data strategy and analytics leadership rather than ML/coding | 2023 |
| U.S. Department of Energy | Acting Chief AI Officer | Former Director for Technology and National Security at the White House NSC; policy and national security background, not engineering | December 2023 |
| U.S. Department of Labor | Chief AI Officer | Previously Deputy CAIO at DOL; over a decade at the Bureau of Labor Statistics; operations and program management rather than AI research | June 2025 |
| U.S. Social Security Administration | Chief AI Officer (also Deputy CIO) | More than 20 years at SSA in IT operations and enterprise leadership; agency-veteran operational profile | 2024 |
| Morgan Lewis (global law firm) | Chief AI & Knowledge Officer | Former Chief Administrative Officer at a global law firm; business operations and process design (non-technical) | 2025/2026 |
| Generali Investments | Chief AI Officer | PhD/MSc in international macroeconomics; Professor of Economics; former Director of Research; senior roles at World Bank/UN PRI; economics, policy, and research focus | April 2026 |

## Why Is This Happening?

The role of a Chief AI Officer has evolved. In its earliest incarnation, it was about building: training models, architecting data pipelines, writing production code. Today, in most enterprises, the hard technical work is done by vendors (OpenAI, Google, Anthropic, Microsoft) or by internal engineering teams. What organisations actually need at the C-suite level is someone who can:

1. Drive adoption: persuading reluctant stakeholders and managing change at scale
2. Govern responsibly: navigating legal, ethical, regulatory, and reputational risks
3. Connect AI to business outcomes: translating capability into commercial value
4. Work across functions: bridging legal, HR, finance, operations, and technology

These are leadership and judgement skills, not coding skills.

The lawyers, consultants, and operators being appointed to these roles are not naive about AI. Many have deep domain expertise, years of AI-adjacent experience, and strong track records leading transformation.
They simply did not build the models themselves.

## The Acceleration Matters

The projected 2026 figure of 14 is not just a data point; it reflects a tipping point. Organisations that once waited for a “perfect” technical candidate are now actively choosing experienced business leaders and structuring the role around strategy, governance, and change management rather than engineering.

If this trajectory holds, 2026 will see more non-technical AI Chief appointments than all the years from 2013 to 2024 combined (12 in total). The era of the non-technical AI Chief has arrived.

What do you think is driving this shift? Are organisations right to prioritise business acumen over technical depth in these roles? Share your perspective below.