-

Fast Tracking vs Crashing

shashankparihar19 replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!

Several techniques are used for schedule compression under the schedule management plan in project management:

1. Fast tracking
2. Crashing
3. Both fast tracking and crashing
4. Reducing scope
5. Cutting quality
6. Resource allocation

Of these techniques, resource allocation is the best because it increases neither the cost nor the risk. Let us look at the first two techniques.

Fast tracking: During project follow-up there may be several change requests from stakeholders. The project team analyses each request based on the importance and influence of the stakeholder and also looks for alternative methods. If a change request is accepted, it has to go through the integrated change control process. If the customer demands that the project be completed, or a deliverable be handed over, ahead of the scheduled date because of an emergency, then the project manager has to take several measures to meet that demand. Finishing earlier than scheduled means performing in parallel many activities that were planned in sequence, so the probability of risks occurring becomes high. The most important thing is that the project manager keeps an eye on time management and performs a risk assessment. This might involve compromising on quality and/or reducing scope, which has to be approved by the key stakeholders and the project sponsor.

Crashing: To meet the customer's demand to finish the project work or hand over the deliverable early, the project manager can identify the critical path in the project network diagram and allocate additional resources to the activities on it so that they finish sooner. Adding resources on the critical path increases the cost of the project and the time management effort (more supervision). Activities on the critical path are very important to the success of the project as a whole.

Also, we cannot delay any activity on the critical path because these activities have no float. We can delay activities on a non-critical path, or add a buffer either after a non-critical activity or at the end of the project, but this may change the critical path, and the project will then bear high risk. In some instances both fast tracking and crashing are applied simultaneously.
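The point about float can be made concrete with a small forward and backward pass over a network diagram. The sketch below is only an illustration: the four activities, their durations and the function name `critical_path` are hypothetical, and it assumes the activities are listed so that every predecessor appears before the activities that depend on it.

```python
def critical_path(activities):
    """Return (project duration, total float per activity, critical activities).

    activities maps a name to (duration, [predecessors]) and is assumed to be
    listed in an order where predecessors come before their successors.
    """
    es, ef = {}, {}  # earliest start / earliest finish
    for name, (duration, preds) in activities.items():
        es[name] = max((ef[p] for p in preds), default=0)
        ef[name] = es[name] + duration
    project_end = max(ef.values())

    ls, lf = {}, {}  # latest start / latest finish
    for name in reversed(list(activities)):
        duration, _ = activities[name]
        successors = [s for s, (_, preds) in activities.items() if name in preds]
        lf[name] = min((ls[s] for s in successors), default=project_end)
        ls[name] = lf[name] - duration

    total_float = {name: ls[name] - es[name] for name in activities}
    critical = [name for name in activities if total_float[name] == 0]
    return project_end, total_float, critical


# Hypothetical network, durations in days.
network = {
    "A": (3, []),
    "B": (2, ["A"]),
    "C": (4, ["A"]),
    "D": (2, ["B", "C"]),
}
project_end, total_float, critical = critical_path(network)
print(project_end, total_float, critical)
```

In this made-up network only B has float, so B is the only activity that can slip without moving the finish date. Crashing C by more than B's float would shift the critical path onto B, which is the situation the post warns about.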

-

Gage R&R

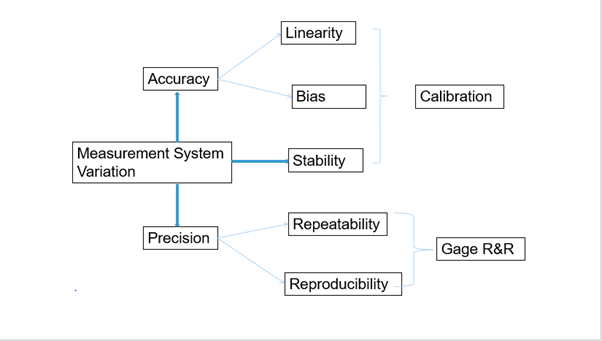

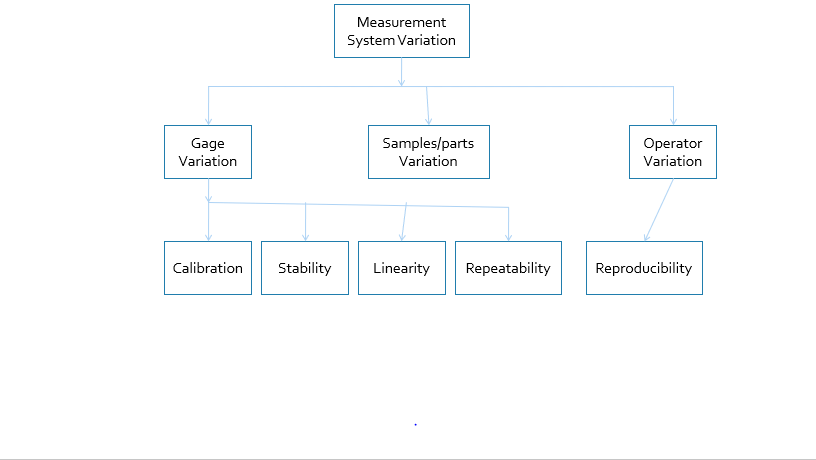

Measurement System Analysis (MSA) is done to understand how much of the observed process variation is contributed by the measurement system, i.e. variation due to the instrument and due to the operator. Our aim is either to minimise the variation due to the measurement system or to eliminate it entirely, so that the observed variation is due only to variation in the parts. MSA is mentioned in “ISO/TS 16949:2009 (E) 7.6.1 MSA”.

Broadly, two types of MSA studies are conducted:

1. For continuous data: Gage R&R
2. For attribute data: Attribute Agreement Analysis

Measurement system analysis is the study of:

How much error is created by the gage (instrument or measuring device) itself, because of improper calibration, wear, or both.
How much error is created by the person (operator or appraiser) measuring the parts or samples with that gage.

Measurement error is the difference between the true value (reference value) of a part and the mean of the values observed when operators measure that part with an instrument, i.e.

Measurement error = gage variation due to repeatability + operators' variation due to reproducibility

The true value or reference value is provided by an expert.

Variation in the data or observed results is due to:

Variation between the manufactured parts due to process variation.
Variation due to measurement.

Observed process variation = actual process variation + measurement system variation (we are interested in the variation due to the measurement system)

MSA should be performed as described below:

1. Determine the gage: if more than one gage is used for measurement, choose the most appropriate one.
2. Define the measurement procedure.
3. Are there any standards available? If yes, use them thoroughly.
4. Define the design intent of the gage, or demand the calibration report of the gage from the supplier.
5. Discrimination (granularity/resolution): the minimum value on the scale that you can measure.
6. Accuracy: the closeness of agreement between a measurement result and the true or accepted reference value. It includes:
   i. Bias: the systematic difference between the mean of the observed measurement results and a true value.
   ii. Linearity: the difference in bias over the expected operating range of the measurement gage.
   iii. Stability: variation in the average of measurements when the same operator measures the same unit with the same measuring equipment over an extended period, i.e. hours, days, weeks or months.
7. Precision: the variation observed between repeated measurements of an identical item using the same method, due either to the operator or to the equipment (how close the measurements are to each other). The components of precision are:
   i. Repeatability: the variation observed in successive measurements when the same appraiser repeatedly measures the same unit using the same measuring equipment.
   ii. Reproducibility: the variation in the average of measurements observed when two or more operators measure the same unit repeatedly with the same method and the same equipment. It is often referred to as appraiser variation. A measurement system is reproducible when different operators produce consistent results.
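The split of measurement variation into repeatability and reproducibility can be sketched with the usual ANOVA approach for a crossed study in which every operator measures every part several times. This is a minimal illustration, not a full Gage R&R procedure: the function name `gage_rr`, the dictionary layout and the sample readings are assumptions made up for the example, the design must be balanced with at least two parts, two operators and two trials, and negative variance estimates are simply clipped to zero.

```python
from statistics import mean


def gage_rr(data):
    """Variance components for a balanced crossed study.

    data[part][operator] is a list of repeated readings (hypothetical layout).
    """
    parts = list(data)
    operators = list(data[parts[0]])
    p, o = len(parts), len(operators)
    r = len(data[parts[0]][operators[0]])

    grand = mean(x for pt in parts for op in operators for x in data[pt][op])
    part_mean = {pt: mean(x for op in operators for x in data[pt][op]) for pt in parts}
    oper_mean = {op: mean(x for pt in parts for x in data[pt][op]) for op in operators}
    cell_mean = {(pt, op): mean(data[pt][op]) for pt in parts for op in operators}

    # Sums of squares for parts, operators, interaction and repeat readings.
    ss_part = o * r * sum((part_mean[pt] - grand) ** 2 for pt in parts)
    ss_oper = p * r * sum((oper_mean[op] - grand) ** 2 for op in operators)
    ss_inter = r * sum(
        (cell_mean[pt, op] - part_mean[pt] - oper_mean[op] + grand) ** 2
        for pt in parts for op in operators
    )
    ss_error = sum(
        (x - cell_mean[pt, op]) ** 2
        for pt in parts for op in operators for x in data[pt][op]
    )

    ms_part = ss_part / (p - 1)
    ms_oper = ss_oper / (o - 1)
    ms_inter = ss_inter / ((p - 1) * (o - 1))
    ms_error = ss_error / (p * o * (r - 1))

    repeatability = ms_error  # equipment variation
    interaction = max(0.0, (ms_inter - ms_error) / r)
    operator = max(0.0, (ms_oper - ms_inter) / (p * r))
    reproducibility = operator + interaction  # appraiser variation
    part_to_part = max(0.0, (ms_part - ms_inter) / (o * r))
    return {
        "repeatability": repeatability,
        "reproducibility": reproducibility,
        "gage_rr": repeatability + reproducibility,
        "part_to_part": part_to_part,
    }


# Made-up readings: 3 parts, 2 operators, 2 trials each.
study = {
    "part1": {"opA": [9.5, 10.5], "opB": [10.5, 11.5]},
    "part2": {"opA": [19.5, 20.5], "opB": [20.5, 21.5]},
    "part3": {"opA": [29.5, 30.5], "opB": [30.5, 31.5]},
}
result = gage_rr(study)
print(result)
```

Comparing `gage_rr` with `gage_rr + part_to_part` gives the share of observed variation that comes from the measurement system, which is the quantity the study is meant to minimise.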

-

Industry 4.0

The fourth industrial revolution was taken up by German industrial engineers and endorsed by Chancellor Angela Merkel. The fourth industrial revolution (4.0) focuses on cyber-physical systems. The concept of Industry 4.0 was first used at the Hanover meeting in 2011 by a group of people from different fields, with the aim of enhancing German competitiveness in the manufacturing industry.

We know that humans are prone to committing errors, and performing repetitive work again and again leads to frequent errors due to fatigue and monotony. Cyber-physical systems means that physical systems will be connected and will communicate with each other through cyberspace. Every system or machine will have its own unique identity in cyberspace through which it communicates with other systems. Each system will use network links such as a wireless network card, RFID tags, Bluetooth or Wi-Fi. Each system will perform self-diagnostics to report its state, will collect and analyse data that was earlier cumbersome to manage because some of it is unstructured, and will make intelligent decisions.

Industry 4.0 will utilize:

1. Cloud technology: the intent is the sharing of resources.
2. 5G technology: network technology used for communication, i.e. voice and data transfer at very high speed.
3. Blockchain technology: used for maintaining transaction records across a distributed network.
4. Artificial Intelligence and Machine Learning: mimicking human intelligence.
5. IoT device technology: mobile and other devices connected through the internet.
6. Cognitive technology: making human-like decisions in complex situations.
7. Robotic Process Automation (RPA): mimicking human actions.

At present these technologies are moving fast by automating processes and systems, including machines.

Machines, devices and processes will interact with each other using 5G technology, share data and knowledge using cloud technology, analyse and make decisions using machine intelligence, perform self-diagnostics, mimic human actions just as humans do, and help create a greener and cleaner environment.

Every technology has its pros and cons. Every industry is gearing up with these technologies: we have smart mobiles, smart consumer electronics (smart TVs, Alexa devices that listen and perform the actions they are instructed to, smart lighting, etc.), smart factories, smart stores like Amazon stores where you need not stand in a queue but just pick the items of your choice and walk out while the rest is handled automatically through the Amazon app, smart hospitals where robots will perform surgeries, and driverless cars in the automotive industry.

As technology advances, its vulnerabilities are also coming to light. A driverless car has already met with an accident and will remain accident-prone if used on routes where people commute, an Alexa device listens to all your conversations, and if a smart TV is left powered on someone might hack your devices and create trouble for you. We need to be careful while using these devices and strictly follow their intended functions and instructions until the vulnerabilities are plugged.

-

Number of Samples for Hypothesis Test

Hypothesis testing is a procedure for making inferences about a population based on information derived from samples. It requires making some initial assumptions, and you then try to prove your assumption or hypothesis with the support of statistical tests. You are given a practical problem; you convert it into a statistical problem and find a statistical solution based on sample data and statistical tests. Then you convert this statistical solution into a practical solution. You collect samples from the population under study based on certain assumptions. There are two methods in this regard:

1. Trial and error method
2. Scientific method

The trial and error method is based on making assumptions or hypotheses about the subject under study and choosing an appropriate sampling procedure, then taking samples again and again and drawing inferences again and again until statistical significance is shown. Statistical significance is based on:

i. Type I error rate
ii. Type II error rate
iii. Selection of the correct type of test based on the hypothesis formulation
iv. Value of the test statistic, which is obtained by applying the appropriate statistical test to the sample data
v. P-value or the critical value

If the P-value is less than the level of significance, or if the value of the test statistic falls in the rejection region, we reject our initial assumption (the null hypothesis). In the trial and error method we take samples again and again until statistical significance is shown, but we are not sure about the state of the Type I and Type II errors.

If we take too few samples, the test has little power and we compromise on the Type II error. If we keep taking more samples and testing again, we inflate the Type I error rate, although any outlier that is present will be detected and the difference between the population parameter and the sample estimate becomes small. Taking the optimum number of samples means fixing the Type I error rate and reducing the Type II error rate. We may show statistical significance, but is it justifiable? How will we justify our conclusion, and are our conclusions reliable? Have we detected a difference that really exists, or a difference that does not really exist? Initially the cost of conducting such a study is low, but as we move forward taking more and more samples the study becomes costly: sampling costs time and money, samples are sometimes destroyed during testing, sampling error might enter the study, and fatigue might cause errors in sample selection and/or measurement. We also have no clear idea how reliable our estimate is, i.e. how close it is to the population parameter.

The scientific method is based on several factors:

i. Type I error
ii. Type II error
iii. Selection of the correct type of test based on the hypothesis formulation
iv. Value of the test statistic
v. E = margin of error (how large a difference we want to detect)
vi. Power of the test
vii. P-value

Based on this information we calculate the optimum sample size for our study. Thus we are able to control the Type I and Type II error rates and to detect a difference if it really exists. The cost of the procedure will be optimum and we will get an optimum solution.
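As an illustration of the scientific method, the sample size for one common case can be computed directly. The sketch below assumes a two-sided one-sample z-test with a known standard deviation, where n = ((z for alpha/2 + z for power) × sigma / E)², rounded up. The function name `sample_size_mean` and the numbers used are hypothetical; other tests need other formulas.

```python
from math import ceil
from statistics import NormalDist


def sample_size_mean(sigma, margin, alpha=0.05, power=0.80):
    """Sample size for a two-sided one-sample z-test (known sigma assumed).

    sigma  : assumed population standard deviation
    margin : E, the smallest difference we want to be able to detect
    alpha  : Type I error rate
    power  : 1 - Type II error rate
    """
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(power)
    return ceil(((z_alpha + z_beta) * sigma / margin) ** 2)


# Hypothetical study: sigma = 10, detect a shift of 5 units.
n = sample_size_mean(sigma=10, margin=5)
n_high_power = sample_size_mean(sigma=10, margin=5, power=0.90)
print(n, n_high_power)
```

Fixing alpha and asking for more power (a lower Type II error rate) raises the required sample size, which is the trade-off between cost and reliability described above.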

-

Internet of Things Security Risks

The main advantages of IoT are:

1. Useful in monitoring: It provides the advantage of knowing things accurately and well in advance. With IoT, data on the quantity of supplies, consumption, energy used, distribution, etc. is collected easily.
2. Useful in collecting data: Since systems will be interconnected and will use electronics and embedded systems, they will collect data themselves and there will be no data mismatch or data loss.
3. Useful in analysing data: These systems will have self-diagnostics and will therefore analyse both structured and unstructured data. We will be relieved of digging into data manually and then analysing it.
4. Useful in making decisions: Thanks to embedded systems, these devices and systems will self-diagnose faults, errors, supply quantities, etc.
5. High precision and accuracy: Since machines will be performing the activities themselves, precision and accuracy will be very high.

IoT, self-diagnostic systems, Artificial Intelligence, blockchain technology, cognitive computing, Robotic Process Automation, etc. together form the new concept of Industry 4.0, “cyber-physical systems”. The concept was first put forward at a meeting in Hanover in 2011, and the German industries took the initiative to carry it forward. Although this technology has several benefits, there are threats and challenges too:

1. Handling unstructured data: Although we will be using Artificial Intelligence for capturing and analysing data, machine learning algorithms will need to be trained to handle such situations better and perform intelligent analytics. AI is the future, but the world-renowned theoretical physicist and cosmologist Professor Stephen Hawking once warned that AI could one day pose a great danger to human society.
2. Security concerns with wireless data: IoT devices work using network connectivity. Data will be transmitted over both wired and wireless media, and the concern is with wireless connectivity (routers, individual systems), because a company's data (cloud, Web-NAS, SAN) and mobile data are crucial and their loss or theft will be very painful. IoT devices might be hacked and data might be stolen. Better security features will have to be implemented, such as 128-bit encryption and two-factor authentication and identification. One of the pioneers in network technology has said “it is better to stay wired”.
3. Software updates: IoT devices might develop many vulnerabilities over time. The system will need to be patched and updated against threats that exploit loopholes in software such as the operating system and internet security software (malware, ransomware), and against tracking cookies, which track your movements and send back data, usage patterns, etc.
4. Botnet attacks: Since we will be using wireless and automated systems, transactions will also be in cryptocurrency (blockchain technology). IoT botnets might attack and gain access to the cryptocurrency, which would be an outright loss to the business organization.
5. Other invasions: We use smart systems at home such as smart LED TVs, Amazon's Alexa, smartphones, medical devices, other communication devices and vital information systems. Hackers might gain control over devices that are non-standard or have vulnerabilities and cause mischief such as data theft (brute force attack, denial of service attack, phishing attack for banking information, personal information theft) and data manipulation. We can protect ourselves by disabling the universal plug and play feature on the devices, regularly updating firmware, and not responding to suspicious messages and links. Put devices on a guest network, as it will not allow access to many of the features through which an intruder can gain easy access to your smart systems, or create a separate network with user access control features.
6. Legal issues: There are many legal and regulatory issues with IoT, such as legal liability for unintended use. Technologies have developed quickly, but legal and regulatory standards have not kept pace, so there might be conflict between law enforcement surveillance and civil rights. Suppose you lose your phone and someone misuses it; ultimately you will be penalized if you have not informed the concerned agency about the theft or loss. Previously, devices from many Chinese mobile manufacturers did not have IMEI numbers, so they were difficult to trace and track.

-

Bench and Mark - Cost Cutting

Cost cutting using DFSS is a systematic approach involving the DMADV (Define, Measure, Analyze, Design, Validate) methodology. Using QFD in the Define stage brings in comparison with competitors, which leads to a frugal, innovative design in the Design phase using new technologies and methods. When the DMAIC methodology fails to provide the intended result, we resort to DFSS (Design for Six Sigma).

-

Robotic Process Automation vs Artificial Intelligence

Robotic Process Automation is a way of automating mundane, rule-based tasks that have no exceptions, using machines called bots.

Robotic: Robots or bots are machines that can copy human actions, set up either by coding or by recording the screen.
Process: A series of steps that leads to an output is called a process. Something as simple as making tea or your favorite dish is also a process.
Automation: Any process which is done by a robot without human intervention.

Automating a simple, rule-based sequence of steps that leads to an output is known as Robotic Process Automation. RPA helps us save time and resources: tedious tasks that took hours, days or weeks to perform one after the other can now be performed with speed, accuracy and precision, so that we have time to think, to be creative and to pursue new ideas. RPA is probably the fastest path to digital transformation with efficiency and effectiveness. RPA is a technology that enables computer software to emulate and integrate the actions typically performed by a human interacting with digital systems. The computer software that executes the operation is called a “robot”. RPA robots are able to capture data, run applications, trigger responses and communicate with other systems.

RPA targets processes that are:

Highly manual.
Of low complexity.
Stable.
Repetitive and rule-based.
Low in exception rate, or without exceptions.
Based on standard, electronically readable input.

Artificial Intelligence is concerned with designing intelligence into an artificial device, or mimicking human actions with intelligence built into the machine. What describes intelligence?

1. Having intelligent behavior like a human.
2. Behaving in the best possible manner.
3. Thinking capabilities.
4. Acting capabilities.

There are three types of AI systems:

1. Artificial Narrow Intelligence (ANI): also known as weak AI. These are systems that cannot truly reason and solve problems but can act intelligently by simulating pre-defined human behavior. They do not possess thinking abilities. Examples are self-driving cars, Siri, Alexa and the humanoid robot Sophia. All current AI developments fall into this category.
2. Artificial General Intelligence (AGI): also known as strong AI. These systems are self-aware and have thinking capabilities like humans. There are no examples as of now.
3. Artificial Super Intelligence (ASI): when the capabilities of machines surpass those of human beings. It is a hypothetical situation. Examples are the several movies showing machines gaining control over humans.

RPA just mimics repeatable human actions based on certain pre-defined rules, using a little AI, whereas AI is the simulation of human intelligence by machines. RPA is all about doing, whereas AI is a very broad term, as it is all about building all human capabilities into a machine. Thinking and acting capabilities are not rule-based or repetitive and are rather complex. Both are used for solving real-world problems. RPA uses very few AI capabilities, such as cognitive tools (OCR, etc.), while AI covers a much broader spectrum than RPA because it uses machine learning, deep learning, natural language processing and understanding, robotics, expert systems and fuzzy logic, and cognitive science.

RPA is process driven, as its name says. It automates repetitive activities and, in this pursuit, interacts with several systems through IT systems. It involves process mapping and process documentation, i.e. all activities relating to assessing process improvement and/or process automation opportunities, and the process design documentation for implementing RPA. AI, on the other hand, depends on good-quality data. It helps RPA complete its work by using machine learning to read the data needed by the RPA project from sources such as invoices and forms. For example, suppose an RPA project involves invoice processing.

The customer sends an invoice by email; the robot reads the email and downloads the invoice. AI then comes in to read the contents of the invoice, using ML algorithms or cognitive tools such as OCR (optical character recognition).

Popular RPA tools are:

1. UiPath: user-friendly software that utilizes drag-and-drop activities and requires very little programming skill in Visual Basic and .NET technologies.
2. Automation Anywhere: also software, but it requires programming skills in C# (C sharp) and .NET technologies.
3. Blue Prism: it also requires programming skills in C# and .NET technologies.

Some AI tools are:

1. IBM Watson
2. Google's TensorFlow (open-source library)
3. Infosys Nia
4. Wipro Holmes
5. Microsoft Azure
6. TCS Ignio

Both RPA and AI are growing by leaps and bounds, and they complement each other, i.e. RPA uses AI machine learning algorithms and AI uses robots, but AI is a much broader concept. McKinsey & Company says about 22% of IT jobs will be replaced by RPA in the coming years. Forrester says the RPA market will grow by 2.1 billion dollars by 2021. It is expected that in 2021 there will be 2 lakh jobs for RPA professionals in India. According to the market research firm Tractica, the AI software market is expected to grow from around 9.5 billion US dollars in 2018 to 118.6 billion US dollars by 2025. According to the World Economic Forum, automation will replace 75 million jobs but will generate 133 million jobs worldwide by 2022.
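The invoice example can be sketched to show where the rule-based part ends and where the AI part would plug in. Everything here is hypothetical: the function names, the email dictionary layout and the approval limit are invented for illustration, and a pair of regular expressions stands in for the OCR or machine learning step, which in a real project would be a separate trained component or tool.

```python
import re


def extract_invoice_fields(text):
    """Stand-in for the AI/OCR step: pull fields out of plain invoice text."""
    number = re.search(r"Invoice\s*No[.:]?\s*(\S+)", text)
    amount = re.search(r"Total[:\s]*(\d+(?:\.\d+)?)", text)
    return {
        "number": number.group(1) if number else None,
        "amount": float(amount.group(1)) if amount else None,
    }


def process_email(email, approval_limit=1000.0):
    """Rule-based bot steps: read the email, extract fields, route the invoice."""
    if "invoice" not in email["subject"].lower():
        return "ignored"
    fields = extract_invoice_fields(email["attachment_text"])
    if fields["number"] is None or fields["amount"] is None:
        # Anything the rules cannot handle is an exception for a human.
        return "exception: manual review"
    if fields["amount"] <= approval_limit:
        return "auto-approved"
    return "sent for approval"


# Made-up incoming emails.
small = {"subject": "Invoice for May", "attachment_text": "Invoice No: INV-001\nTotal: 250.00"}
large = {"subject": "Invoice for June", "attachment_text": "Invoice No: INV-002\nTotal: 5000"}
broken = {"subject": "Invoice", "attachment_text": "scanned page, unreadable"}
print(process_email(small), process_email(large), process_email(broken))
```

The routing rules in `process_email` are the repetitive, pre-defined part that suits RPA, while `extract_invoice_fields` marks the spot where reading unstructured content would be handed to an AI component.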

-

Y=f(X)

Various tools that can be used to prioritize potential causes are:

1. Detailed process mapping
2. CE diagram
3. CE matrix
4. FMEA
5. Affinity diagram
6. Interrelationship diagram
