Everything posted by Mohamed Asif Abdul Hameed

- Improve Phase

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!

Picking the best idea is the most critical part of the Improve phase. When multiple solutions have been identified, the best way forward is a data-driven, structured and inclusive approach. We can go one step further by choosing the Strategic Best Fit (the best-aligned option) rather than just the best idea, because the solution that looks best on paper is often not the best fit operationally. Key considerations include:

1) Scalability: can this solution be rolled out to multiple teams across the organization?
2) Sustainability: will this idea work in the long term, given ongoing and upcoming changes across the organization and the industry?
3) Team Alignment: does it match the team's preferences, considering the resource ecosystem?

This evaluation should be done with cross-functional stakeholders and leaders to get broader clarity, involving them early and often and leveraging the organization's Change Management System. This significantly reduces resistance to change. Below is a holistic, structured, data-driven approach that complements this point of view: "The Decision Matrix." "The best idea is the one that scores high not just in creativity, but in feasibility, impact, and alignment with organization needs."
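A decision matrix of this kind can be sketched as a weighted score per idea. The Python below is a minimal illustration, not the author's matrix: the criteria echo the post, but the weights, the 1-5 scores and the idea names are hypothetical.

```python
# Weighted decision matrix: a minimal sketch, not a prescribed tool.
# The criteria echo the post; the weights, 1-5 scores and idea names are hypothetical.
from typing import Dict, List, Tuple

CRITERIA_WEIGHTS: Dict[str, float] = {
    "impact": 0.30,
    "feasibility": 0.25,
    "scalability": 0.20,
    "sustainability": 0.15,
    "team_alignment": 0.10,
}


def weighted_score(scores: Dict[str, int],
                   weights: Dict[str, float] = CRITERIA_WEIGHTS) -> float:
    """Sum of score x weight across all criteria; every criterion must be scored."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(scores[c] * w for c, w in weights.items())


def rank_ideas(ideas: Dict[str, Dict[str, int]]) -> List[Tuple[str, float]]:
    """Return (idea, weighted score) pairs, best fit first."""
    ranked = [(name, round(weighted_score(s), 2)) for name, s in ideas.items()]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


ideas = {
    # Highest raw impact, but weaker on feasibility and team alignment.
    "Idea A": {"impact": 5, "feasibility": 2, "scalability": 4,
               "sustainability": 3, "team_alignment": 2},
    # Less exciting on paper, but a better operational fit.
    "Idea B": {"impact": 4, "feasibility": 4, "scalability": 4,
               "sustainability": 4, "team_alignment": 5},
}

for name, score in rank_ideas(ideas):
    print(f"{name}: {score}")
```

With these assumed weights, Idea B (4.1) ranks ahead of Idea A (3.45) even though Idea A scores highest on impact alone, which is the "best fit versus best on paper" point made above. The weights themselves are a judgment call and are best agreed with the stakeholders involved.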

- AEIOU framework

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!

- Control Charts for Continuous Data

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!

Control charts provide powerful insights into process performance, variability and stability, and help us make data-driven decisions to improve the quality of our outputs. Typically, control charts help us with:

• Identifying process stability
• Detecting process variation
• Predicting future process performance
• Comparing different processes
• Communicating process performance

For continuous data we use a pair of charts: the upper chart typically displays the process data as a line graph or scatter plot, while the lower chart displays a measure of the variation in the data. The two charts are complementary; together they help monitor the performance of a process over time and detect any unusual variation or changes that might be occurring, giving a comprehensive picture of process performance.

In Xbar-R, the Xbar chart shows whether the process is stable and centered around a target value, while the R chart shows whether the variation within subgroups is consistent over time. If the variation is consistent, the R chart shows relatively small and consistent ranges; if it is not, the R chart may show large and/or inconsistent ranges.

In I-MR, the I chart plots the individual data points over time, and the MR chart plots the moving range between successive data points. Used together, they monitor both the level of the process and the variation in the data.

In Xbar-S, the Xbar chart shows the average of each subgroup, and the S chart shows the standard deviation within each subgroup.
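To make the I-MR pairing concrete, here is a minimal Python sketch of how the two sets of limits are commonly computed, using the conventional constants for moving ranges of two consecutive points (2.66 = 3/d2 with d2 = 1.128, and D4 = 3.267). The baseline data, the new readings and the function names are hypothetical.

```python
# I-MR control limits: a minimal sketch using the standard constants for
# moving ranges of two consecutive points (2.66 = 3/d2 with d2 = 1.128; D4 = 3.267).
# The baseline data and new readings are hypothetical.
from statistics import mean
from typing import Dict, List


def imr_limits(data: List[float]) -> Dict[str, float]:
    """Centre lines and control limits for the Individuals and Moving Range charts."""
    if len(data) < 2:
        raise ValueError("need at least two observations")
    moving_ranges = [abs(b - a) for a, b in zip(data, data[1:])]
    x_bar = mean(data)
    mr_bar = mean(moving_ranges)
    return {
        "I_CL": x_bar,
        "I_UCL": x_bar + 2.66 * mr_bar,
        "I_LCL": x_bar - 2.66 * mr_bar,
        "MR_CL": mr_bar,
        "MR_UCL": 3.267 * mr_bar,
        "MR_LCL": 0.0,
    }


def points_outside(values: List[float], limits: Dict[str, float]) -> List[int]:
    """Indices of individual values beyond the I-chart limits (possible special causes)."""
    return [i for i, x in enumerate(values)
            if x > limits["I_UCL"] or x < limits["I_LCL"]]


baseline = [10, 12, 11, 13, 12, 11, 10, 12]   # hypothetical stable period
limits = imr_limits(baseline)
print({k: round(v, 3) for k, v in limits.items()})
print(points_outside([12, 16.0, 11], limits))  # monitor new readings against the limits
```

Here the limits are fixed from a stable baseline and then used to judge new readings; only the reading of 16.0 falls above the I-chart UCL of about 15.18.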
To conclude, using both control charts together can help us identify potential problems or changes in our processes and take corrective action before the process goes out of control.

- Workload Balancing

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!

Workload balancing is a crucial component of lean manufacturing because it helps reduce lead time, increase productivity and improve overall quality. It is the process of allocating work among team members so that each one is utilized efficiently and effectively, eliminating bottlenecks and waste in the production process. Three things have an obvious impact on balancing the production workload:

• The amount of work content at each operation in the overall process.
• Variations in customer demand, which can starve or overload the production process.
• The ability to implement "Heijunka" (production smoothing) to overcome these problems.

To simplify, referring to the diagram above, operator A's tasks add up to 55 minutes, operator B's to 45 minutes, operator C's to 30 minutes and operator D's to 15 minutes. We can simply give operator D's tasks to operator C (30 + 15 = 45 minutes, still within operator A's 55 minutes) and redeploy operator D where they are needed more. In this case we get a direct 25% reduction in staffing on this line (from four operators to three).

Top considerations for workload balancing in a manufacturing setup: capacity of workstations, type of product, skill set of workers and production schedule. In the service industry: service capacity, type of service, customer needs and service schedule.

AI can play a vital role in workload balancing by automating tasks and analyzing data to support better decisions.
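The four-operator arithmetic can be checked with a short Python sketch. The task totals come from the post; treating the busiest station (55 minutes) as the pace-setter is an assumption, and the helper name `balance_loss` is hypothetical.

```python
# Line-balancing arithmetic for the four-operator example.
# Task totals (minutes) come from the post; treating the slowest station
# (55 min) as the pace-setter is an assumption, as is the helper name.
import math
from typing import Dict


def balance_loss(loads: Dict[str, float]) -> float:
    """Share of paid operator time that is idle when the busiest station sets the pace."""
    pace = max(loads.values())
    return 1 - sum(loads.values()) / (len(loads) * pace)


before = {"A": 55, "B": 45, "C": 30, "D": 15}
# Give D's 15 minutes to C (30 + 15 = 45 <= 55) and redeploy D.
after = {"A": 55, "B": 45, "C": before["C"] + before["D"]}

total_work = sum(before.values())                              # 145 minutes
min_operators = math.ceil(total_work / max(before.values()))   # ceil(145 / 55) = 3
headcount_reduction = (len(before) - len(after)) / len(before)

print(f"Minimum operators at a 55-minute pace: {min_operators}")
print(f"Headcount reduction: {headcount_reduction:.0%}")       # 25%
print(f"Balance loss before: {balance_loss(before):.1%}, after: {balance_loss(after):.1%}")
```

The sketch also shows why three operators is the floor at this pace: 145 minutes of work cannot fit into fewer than three 55-minute stations, and idle time drops from about 34% to about 12% of paid time after the merge.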
Examples of leveraging AI and ML for workload balancing: predictive analytics, forecasting, task automation, resource optimization, real-time monitoring, decision support and personalization.

Examples of workload balancing in the service industry: restaurant staffing, call centre management, healthcare staffing, retail staffing and hotel staffing. In all these examples, workload balancing optimizes the allocation of resources and staff, ensuring that customer needs are met while minimizing employee stress and improving overall efficiency. Similarly, in a manufacturing setup, workload balancing can be applied to assembly line staffing, QC, inventory management, maintenance scheduling and production planning, ensuring that production demand is met while minimizing machine downtime and improving overall efficiency.

We can use any of the formulas below to calculate and manage the workload:

• Capacity Utilization = Actual Output / Potential Output
• Workload Index = Workload / Staffing Level
• Efficiency Rate = Actual Output / Standard Output
• Staffing Ratio = Staffing Level / Production Demand
• Lead Time = Total Processing Time + Wait Time

These formulas can be adapted to fit the specific needs of an organization or industry. The goal of workload balancing is to optimize resource allocation, reduce workload imbalances, and improve overall efficiency and productivity. In general, by leveraging AI and machine learning, organizations can improve efficiency, reduce errors and raise employee satisfaction, making them more competitive and better able to meet customer needs.

- Batch Processing vs One Piece Flow

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!

The decision to choose between batch processing and one-piece flow depends on several factors:

1. Product characteristics: The nature of the product being manufactured can greatly influence the choice. If the product is high-volume and repetitive, batch processing may be more efficient. If it is low-volume and requires customization, one-piece flow may be more effective.

2. Demand: If demand is high, batch processing may be more efficient because it can produce a large volume rapidly. If demand is low, one-piece flow may be more effective because it produces only the required quantity without creating excess inventory, avoiding waste (pull rather than push).

3. Equipment: The type and capacity of the equipment can also influence the choice. If the equipment is designed for batch processing, it may be more efficient to use that method. If the equipment is flexible, suited to customization and able to handle small batches, one-piece flow may be more effective.

4. Labour: If the workforce is highly skilled and can work efficiently in a one-piece flow environment, that method may be more effective. If the workforce is less skilled, batch processing may be a better option because it typically requires less skill and training.

5. Cost: The cost of production is one of the most important factors. Batch processing may be more cost-effective because it can produce a large volume of product quickly.
However, one-piece flow may be more cost-effective in terms of reduced inventory, shorter lead times and better quality.

The choice between batch processing and one-piece flow can significantly affect quality, productivity and lead time in a manufacturing process:

• Quality: Batch processing can result in higher defect rates, because a large volume of defective products may be produced before the defect is identified and corrected. One-piece flow allows immediate detection and correction of defects, resulting in higher-quality products.
• Productivity: Batch processing can result in longer setup and processing times, which can reduce productivity. One-piece flow can reduce setup and processing times, resulting in higher productivity.
• Lead time: Batch processing can result in longer lead times because a large volume must be produced before anything can be shipped. One-piece flow reduces lead times because products are produced and shipped in smaller quantities with shorter processing times.

All in all, one-piece flow can deliver higher quality, higher productivity and shorter lead times by reducing defects, setup times and processing times. However, batch processing may still be viable for high-volume, repetitive products, or when equipment is not flexible enough to handle small batches. Ultimately, the choice should be based on a careful analysis of product characteristics, demand, equipment, labour and cost, and should align with the organization's goals and objectives.
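The lead-time effect can be illustrated with a small Python model. This is a simplified sketch under stated assumptions: every station takes the same cycle time per unit, and there are no setup, transport or queueing delays. The batch size, station count and cycle time are hypothetical.

```python
# Lead-time comparison: a minimal sketch assuming every station takes the same
# cycle time per unit, with no setup, transport or queueing delays.
# The batch size, station count and cycle time below are hypothetical.
from typing import Tuple


def batch_flow(units: int, stations: int, cycle: float) -> Tuple[float, float]:
    """(time first unit is finished, time whole order is finished) when the whole
    batch must be processed at a station before moving to the next one."""
    total = units * stations * cycle
    return total, total  # the first unit leaves together with the rest of the batch


def one_piece_flow(units: int, stations: int, cycle: float) -> Tuple[float, float]:
    """Same timings when each unit moves to the next station as soon as it is done."""
    first = stations * cycle                   # one unit passes through every station
    total = (stations + units - 1) * cycle     # then one unit exits every cycle
    return first, total


for label, fn in (("batch", batch_flow), ("one-piece", one_piece_flow)):
    first, total = fn(units=10, stations=3, cycle=1.0)
    print(f"{label:>9}: first unit after {first:g} min, all 10 after {total:g} min")
```

Under these assumptions, one-piece flow finishes the first unit after 3 minutes instead of 30 and the whole order after 12 minutes instead of 30. Long changeovers between units would erode this gain, which is where the equipment considerations above come in.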
Batch processing examples:
• Food and beverage industry: producing large batches of canned or bottled products, such as soft drinks or canned vegetables.
• Bakery: most bakeries use batch processing to produce large volumes of bread. The dough is mixed in large batches, which are then divided into smaller batches for shaping, proofing and baking.
• Pharmaceutical industry: producing large batches of medications or supplements, such as tablets or capsules.
• Textile industry: producing large batches of fabrics or garments, such as a batch of 5,000 t-shirts.

One-piece flow examples:
• Automotive industry: producing individual car parts or subassemblies, such as engines or transmissions.
• Aerospace industry: producing individual airplane parts or subassemblies, such as wings or landing gear.
• Electronics industry: producing individual circuit boards or electronic components, such as microchips or resistors.

- Defect Types and Process Capability

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!

DPU (defects per unit) measures the average number of defects per unit. DPMO (defects per million opportunities) measures the number of defects per million opportunities. Yield % measures the proportion of products that pass the assessment or QC stage.

DPMO and DPU are used when defects are discrete and can be counted on a per-unit basis. Yield % is used when items are assessed on a pass/fail basis, mostly in quality control or during inspection. The appropriate metric depends on the specific context, goals and process requirements of the organization, and can differ case by case.

Minor, major and critical defects may be weighted differently according to their impact on the overall quality of the product or service, so it is recommended to use weighted metrics that account for the severity of each defect.

Software development examples:
• DPMO: we identify 100 defects in 10,000 lines of code. Treating each line as one defect opportunity, DPMO = (100 / 10,000) × 1,000,000 = 10,000.
• DPU: we identify 50 user-interface defects after the mobile application has been installed on 1,000 devices. DPU = 50 / 1,000 = 0.05, meaning there are on average 0.05 defects per device.
• Yield %: during QC, we run tests on 100 instances and 90 pass. Yield % = (90 / 100) × 100 = 90%.

To conclude, when we track both defects and defectives, we can use DPU or DPMO to measure defects per unit or per opportunity, and additionally use Yield % to measure the proportion of defect-free units. The choice of metric depends on:
• Specific context and goals
• Type of defect
• Impact on overall quality

- Overproduction vs Overprocessing

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!

Overproduction is producing more than what is required, or producing it earlier or faster than the end customer needs it. Overprocessing is any non-value-added processing step: effort that adds no value to the product or service from the client's or customer's standpoint.

Overproduction examples: producing more goods, services, products, details or any other information than the client demands.
• Producing a larger quantity than the user requires
• Producing reports that are not used
• Buying before the need is specified
• Excessive snacks in bars
• Passenger trains with more wagons than necessary
• Overstaffed sales stores
• Too many meetings, or the wrong people in meetings
• Printing all forms instead of capturing the information on a laptop
• Multiple forms with the same data
• Staff meetings held when the message could have been sent by email
• Unstable production scheduling
• Incorrect forecasting models

How can we eliminate overproduction? Plan better by understanding customer demand; for example, send out a survey to find out how many people will attend a meeting before planning snack boxes. Clarity on how much, when and what is needed avoids overproduction.

Overprocessing examples: every pointless activity beyond what is required to produce a service or goods according to the customer's expectations.
• Doing the same thing a second time (e.g., double-checking computations)
• Signatures, forms and manager approvals that are not required to complete the task
• Re-keying data that has already been entered (e.g., from a PDF into the system)
• Correcting previously performed work
• Software features that no one ever uses
• An MRI when an X-ray would be adequate
• Complex purchasing procedures with multiple approval levels
• Reports reviewed by multiple people or requiring multiple sign-offs
• Passing customer calls around
• Painting or polishing unseen/hidden areas
• Performing quality checks when they are not required

How can we eliminate overprocessing? Understand the customer's expectations and convert them into precise specifications. We can use a VSM (value stream map) to identify value-added (VA) and non-value-added (NVA) steps and reduce overprocessing.

- Control Limits vs Specification Limits — Why Confusing Them Leads to Bad Decisions

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!

Starting with the basics: control limits are the voice of the process (what the process does), and specification limits are the voice of the customer (what we want the process to do). The lower and upper control limits are the LCL and UCL; the lower and upper specification limits are the LSL and USL. Control limits represent our process variation and help highlight when the process is out of control.

• x̄ (center line): the sum of all data points divided by the number of data points.
• LCL: typically 3 process standard deviations below the average.
• UCL: typically 3 process standard deviations above the average.

If a data point falls within ±3 SD of the average, it is considered "expected" behavior for the process, i.e., common cause variation. Something special happened to data points outside the control limits, which may indicate special cause variation.

Let's consider real data from the 2022 Japanese Grand Prix. The qualifying session determines the starting order and pole position for the race. To take pole position, a driver must set the quickest lap, that is, the lowest lap time. Below are the lap times (actual performance) for the qualifying session, with LCL = 01:29.304 and UCL = 01:31.511. The five slowest cars are eliminated. Then comes the specification limit, which is set by the 107% rule: for instance, if the fastest lap time was 100 seconds, each driver eliminated in the session must complete at least one lap within 107 seconds to be guaranteed a race start; this is the USL. Often, as in this example, only one specification limit is used. Control limits are applied to summary statistics, whereas specification limits are applied to individual measurements.
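The contrast between the two kinds of limit in the qualifying example can be sketched in a few lines of Python. The 100-second fastest lap is the post's illustrative figure; `parse_lap`, `cutoff_107` and `within_107` are hypothetical helper names.

```python
# 107% rule check: a minimal sketch. The 100-second fastest lap is the post's
# illustrative figure; parse_lap and the other helper names are hypothetical.

def parse_lap(lap: str) -> float:
    """Convert a 'mm:ss.sss' lap time string to seconds."""
    minutes, seconds = lap.split(":")
    return int(minutes) * 60 + float(seconds)


def cutoff_107(fastest: float) -> float:
    """Upper specification limit (USL): 107% of the fastest lap."""
    return fastest * 1.07


def within_107(lap: float, fastest: float) -> bool:
    """True if a lap is within the 107% cutoff set by the fastest lap."""
    return lap <= cutoff_107(fastest)


print(parse_lap("01:29.304"), parse_lap("01:31.511"))  # control limits in seconds
print(round(cutoff_107(100.0), 3))                      # USL for a 100 s fastest lap
print(within_107(106.5, 100.0), within_107(107.5, 100.0))
```

The control limits (about 89.3 s to 91.5 s) are computed from the session's own lap times, whereas the 107% cutoff is imposed by the rules and does not depend on how spread out the lap times are.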
Control and specification limits are used extensively with control charts and can give us an early warning when a process shows irregularities, giving us the opportunity to take remedial steps before the situation becomes a problem.

Another example, from a customer contact center: if the average handling time is 4 minutes with a standard deviation of 1 minute, the control limits are UCL = 7 minutes and LCL = 1 minute. Specification limits are the targets for the process, defined by the customer or based on market performance. Ideally the control limits lie within the specification limits so that, in case of special causes, the customer is not impacted.

Application of control limits in a control chart: the position and scatter of the data points plotted on the chart help us identify process behavior, which includes assessing stability and understanding the pattern of variation from a special-cause and common-cause viewpoint. Consider one more example, based on the Shewhart chart below for a manufacturing unit of Prod X. None of the data points are outside the specification limits, and the production management team would usually be happy with this type of run. However, if the process were managed statistically, these patterns would help the line engineer adjust and retarget the process, although the effectiveness of retargeting depends on the usefulness of the process gain factors.

Consider the following scenarios to better understand process performance:
• Scenario 1: The specification limits lie within the control limits.
• Scenario 2: The specification limits are the same as the control limits.
• Scenario 3: The specification limits lie outside (are wider than) the control limits.
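The contact-center numbers and the three scenarios can be checked with a short Python sketch. It follows the post in computing limits directly from the mean and standard deviation (in practice, chart limits are usually estimated from within-subgroup or moving-range variation). The USL values tried and the function names are hypothetical.

```python
# Control limits vs specification limits for the contact-centre example.
# Mean 4 min and SD 1 min come from the post; the USL values tried below are
# hypothetical, and so are the function names.
import math
from typing import Optional, Tuple


def control_limits(mean: float, sd: float, k: float = 3.0) -> Tuple[float, float]:
    """(LCL, UCL) at k standard deviations around the mean."""
    return mean - k * sd, mean + k * sd


def scenario(lcl: float, ucl: float,
             lsl: Optional[float] = None, usl: Optional[float] = None) -> int:
    """Map limits to the post's scenarios (None = one-sided spec):
    1 = spec inside control limits, 2 = spec equal to a control limit,
    3 = spec wider than control limits."""
    lower_ok = lsl is None or lsl < lcl or math.isclose(lsl, lcl)
    upper_ok = usl is None or usl > ucl or math.isclose(usl, ucl)
    if not (lower_ok and upper_ok):
        return 1  # natural variation can already fall outside the spec
    touching = ((lsl is not None and math.isclose(lsl, lcl)) or
                (usl is not None and math.isclose(usl, ucl)))
    return 2 if touching else 3


lcl, ucl = control_limits(mean=4.0, sd=1.0)
print(lcl, ucl)                          # 1.0 7.0 (minutes)
for usl in (6.0, 7.0, 8.0):              # one-sided spec: maximum handling time
    print(f"USL {usl} min -> scenario {scenario(lcl, ucl, usl=usl)}")
```

With an assumed maximum handling time of 6 minutes the process is in scenario 1, at 7 minutes scenario 2, and at 8 minutes scenario 3, the comfortable case.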
In scenario 1, part of the process's natural variation may fall outside the customer-defined targets, leading to rejections and defects. In scenario 2, the process may meet customer specifications, but it can produce defects whenever an unusual source of variation appears. Scenario 3, which I would call optimal, is a stable process operating within its capability, producing no defects or rejections and meeting the customer specification.

To summarize:
• Control limits are calculated from the process data, so they are the voice of the process; specification limits are defined by the customer, so they are the voice of the customer.
• Control limits describe location and spread, whereas specification limits focus on meeting requirements.
• Control limits are applied to subgroups; specification limits are applied to individual items.
• Control limits help reduce internal rejections in the process; specification limits help reduce customer rejections.
• Control limits are displayed on control charts; specification limits are displayed on histograms and probability plots.

- Six Sigma Proficiency Levels

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!

The various levels tell us who can perform which role and how they typically engage with projects. Professional skills, expertise and technical vocabulary grow as a practitioner moves up the levels. It starts with the White Belt, who has a basic understanding of Six Sigma concepts, mostly from an awareness perspective, followed by the Yellow Belt, who participates in projects and supports Green Belts and Black Belts in achieving milestones. Green Belts assist extensively with data collection and statistical analysis and lead Green Belt projects and teams. Black Belts have advanced Lean Six Sigma expertise and typically coach, mentor, teach, monitor and lead projects. Master Black Belts work with leaders to identify gaps and select projects, and are responsible for Lean Six Sigma implementation and cultural change in the organization.

• White Belt: Has a basic understanding of Six Sigma concepts and methodologies.
• Yellow Belt: Is aware of Six Sigma principles.
• Green Belt: Uses analytical tools, DMAIC and Lean Six Sigma, with a focus on Lean principles. Supports Black Belt projects.
• Black Belt: Full-time project leader. Trains and coaches Green Belts.
• Master Black Belt: Advises on Six Sigma and trains Black Belts and Green Belts. Acts as a Six Sigma technologist and internal consultant to the organization.

- Impediments (Agile)

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!

One of the most commonly used practices in Agile teams is the daily stand-up, a short, regularly held meeting that aids team coordination. An impediment can be anything that keeps the team from getting work done and usually slows velocity. The Scrum Master, as a servant leader, helps the team reach its full potential by acting as an impediment remover. However, everyone on the team shares responsibility for identifying impediments, and every identified impediment should be flagged in the daily scrum.

To make effective progress, the Scrum Master should make conscious decisions about removing impediments, for example by asking:
• Do we really have to remove this impediment?
• What is the real problem here?
• Is it truly an impediment, or something the team can fix themselves?

Some common impediments the team can face:
• Product owner (PO) unavailability
• Indecisive PO
• Internal conflict between team members
• Poor health of a team member
• Unforeseen changes in team structure
• Shortage of the required skill set
• Large amounts of technical debt
• Issues with suppliers
• Undue pressure from top management
• Problems with the development team's tooling
• Many trivial meetings the development team has to attend
• Restrictions on the team setting

Best practices and tactics to remove impediments:
• Understand the organization
• Collaborate with the PO
• Stop spending time solving the wrong problems
• Be transparent: use an impediment board
• Distinguish clearly between blockers and impediments
• Use the sprint goal
• Do not wait for the daily stand-up to raise an impediment
• Keep track of fixed impediments
• Be brave in removing impediments

If an impediment does not go away and keeps recurring, the team has not effectively identified its root cause, and it is suggested to start the A3 process to eliminate the barriers. It is important that the team continually identifies new impediments, which is a key part of continuous improvement in Scrum. As the team matures, the long-term goal for the Scrum Master is to empower the team to remove identified impediments by themselves.

- Bowtie Analysis

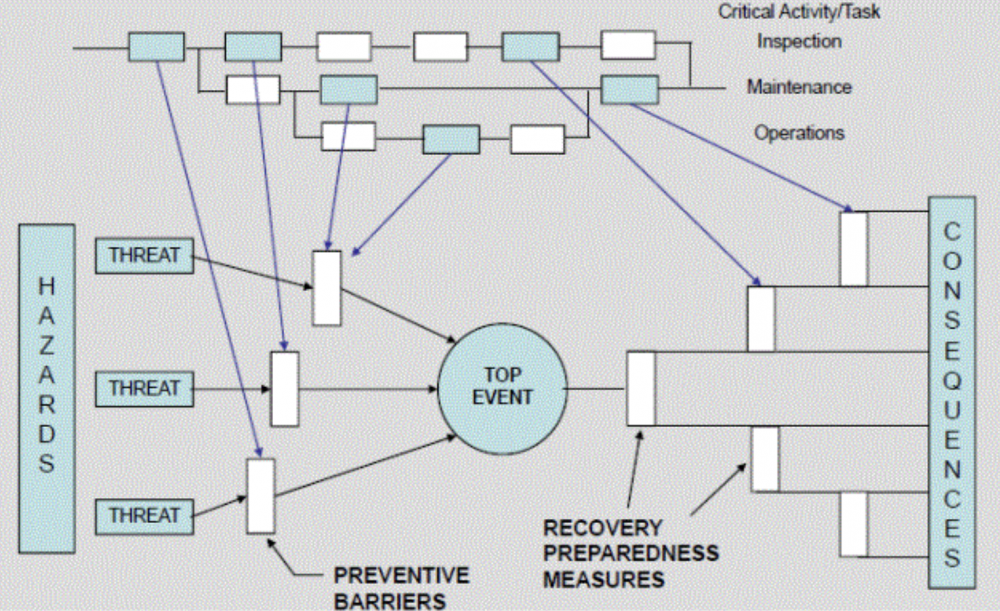

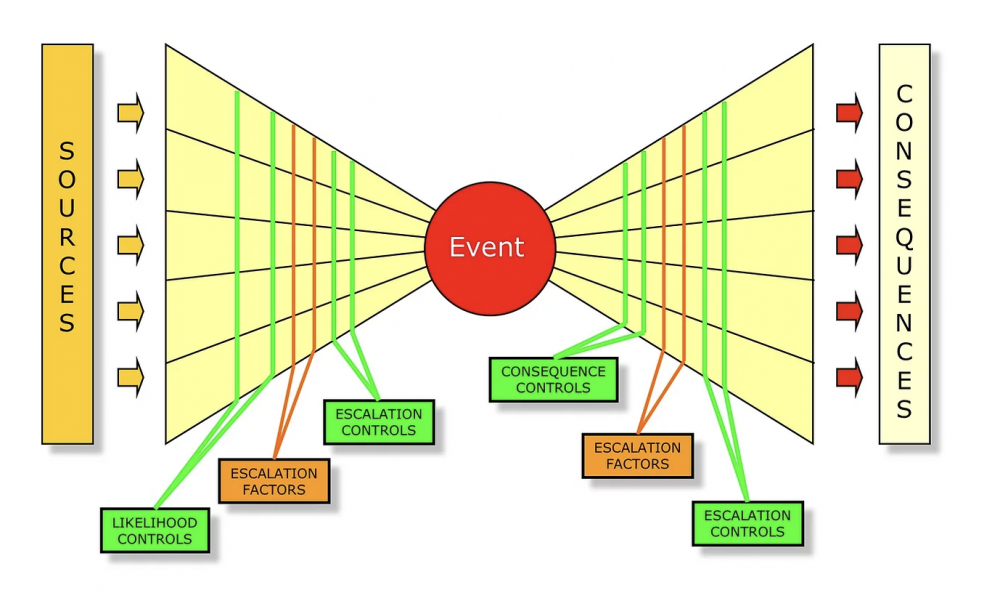

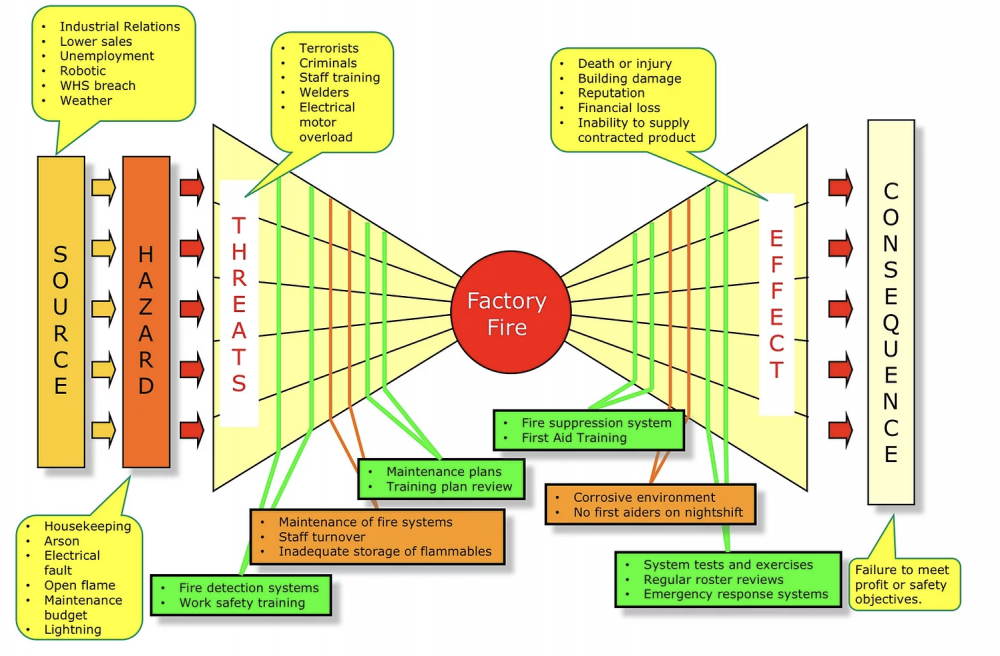

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!

Bowtie analysis is used for risk assessment, risk management and risk communication. A bowtie diagram lets us visualize risk: it shows the causes and consequences of an event, along with the control measures that mitigate the risk. Potential causes and preventive barriers sit on one side of the hazardous event, and potential outcomes and mitigating (defensive) barriers sit on the other, which gives the analysis its "bow tie" shape. It essentially combines a Fault Tree (FT) and an Event Tree (ET).

Bow-tie shape reference

Bow-tie modelling examples: Factory Fire, Financial Product, Car Crash.

Bow-tie use cases: Risk Analysis, Reliability Engineering, Safety Assessment, Mining.

FMEA use cases: Chemical, Aerospace, Military, Automobile, Electrical, Mechanical, large-scale industries.

Differences between FMEA and Bowtie:
• FMEA is bottom-up, whereas a Bowtie can be constructed using both bottom-up and top-down approaches.
• FMEA is quantitative, whereas Bowtie is qualitative.

In general, risk analysis helps an organization identify risks and potential threats to its internal operational processes and estimate the severity and likelihood of their occurrence. Risk analysis methods and techniques include:
• Qualitative: Bowtie Analysis, Delphi Technique, SWIFT Analysis, Fly Analysis, Risk Register, Probability-Impact Matrix, Risk Categorisation, Expert Judgement
• Quantitative: Monte Carlo simulation, Decision Tree Analysis, Sensitivity Analysis, Three-Point Estimation, FMEA, Scenario Analysis, Latin Hypercube Simulation

Conclusion: Even though there are many risk assessment methods, such as Bowtie, FTA and FMEA, they focus on single threats and often struggle to represent multiple simultaneous failures.
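The quantitative side of FMEA that this comparison refers to is usually the Risk Priority Number, RPN = Severity × Occurrence × Detection, with each rated from 1 to 10. The Python below is a minimal sketch of that ranking; the failure modes and ratings are hypothetical, loosely themed on the Factory Fire bow-tie example.

```python
# FMEA-style risk ranking: RPN = Severity x Occurrence x Detection, each rated 1-10.
# The failure modes and ratings are hypothetical, loosely themed on the
# "Factory Fire" bow-tie example.
from dataclasses import dataclass


@dataclass
class FailureMode:
    name: str
    severity: int
    occurrence: int
    detection: int

    def __post_init__(self) -> None:
        # Reject ratings outside the usual 1-10 scale.
        for rating in (self.severity, self.occurrence, self.detection):
            if not 1 <= rating <= 10:
                raise ValueError(f"{self.name}: ratings must be between 1 and 10")

    @property
    def rpn(self) -> int:
        return self.severity * self.occurrence * self.detection


modes = [
    FailureMode("Electrical short in panel", severity=9, occurrence=3, detection=4),
    FailureMode("Solvent stored near heat source", severity=10, occurrence=2, detection=6),
    FailureMode("Blocked fire exit", severity=8, occurrence=4, detection=3),
]

# Highest RPN first: candidates for the threat side of a bow-tie.
for fm in sorted(modes, key=lambda m: m.rpn, reverse=True):
    print(f"{fm.rpn:>4}  {fm.name}")
```

The highest-RPN modes are natural candidates to carry into a bow-tie, where the preventive and mitigating barriers for each can be drawn out. Many practitioners also review any high-severity mode regardless of its RPN.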
FMEA systematically identifies the effects (consequences) of failure modes and is used to remove or reduce the possibility of failure, whereas Bowtie mainly gives insight into hazards and their management and helps represent the influence of safety systems on shop-floor accident scenarios. Integrating the two, Bowtie and FMEA can be used together for hazard analysis and risk assessment: using FMEA detection ratings and RPN calculations, along with corrective measures to improve the RPN, and then using the bowtie to identify safety-critical barriers and associated actions, can improve effectiveness drastically.

- Synchronous vs Asynchronous Process

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!

A synchronous (dependent) process is sequential and handles one task at a time, whereas in an asynchronous (independent) process, concurrent, parallel operations are possible. In the reference below, the total time to complete all 4 tasks in the asynchronous system is just 20 seconds, compared to 45 seconds for sequential execution in the synchronous process. For instance, Zoom meetings happen in real time, which is synchronous, whereas email communication and online posts can be asynchronous and run concurrently to keep target audiences across different roles, functions, regions and programs engaged.

As in the example above, requests stack up in a synchronous system. Typically, in a web service request scenario, clients have to wait in the queue until the previous requests are processed, and often what they see is a timeout response. An asynchronous system allows multitasking, has better resource utilization and fewer wait times, and is more adaptable; its leading benefit is enhanced throughput. A synchronous system, on the contrary, performs one function at a time and follows a rigid sequence. Thus, it is advantageous to use asynchronous systems, especially in agile, multi-request environments; however, it is wise to use synchronous processing in reactive systems. To conclude, it is advisable to evaluate and identify the dependencies in the processes to select the optimal approach for the organization.

- Network Diagrams — Activity on Arrow vs Activity on Node Explained Through a Practical Choice

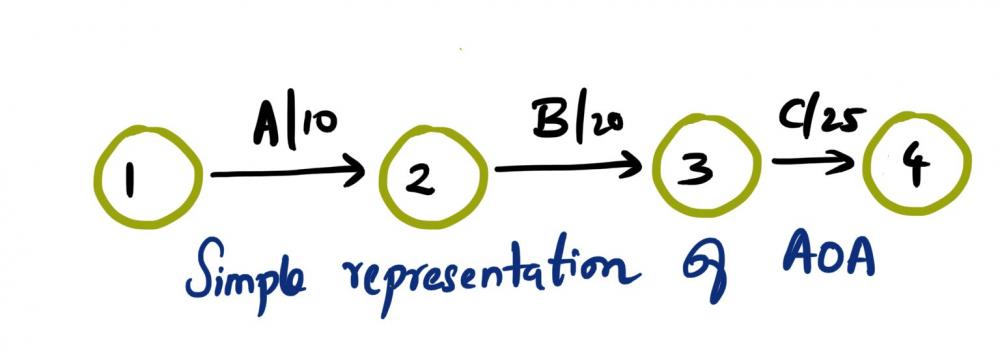

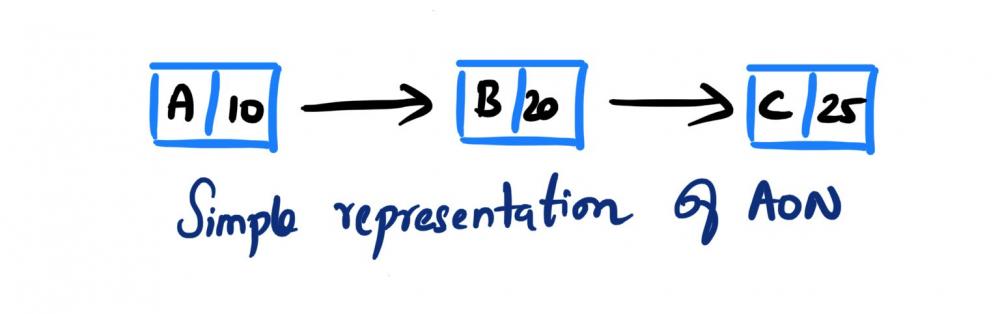

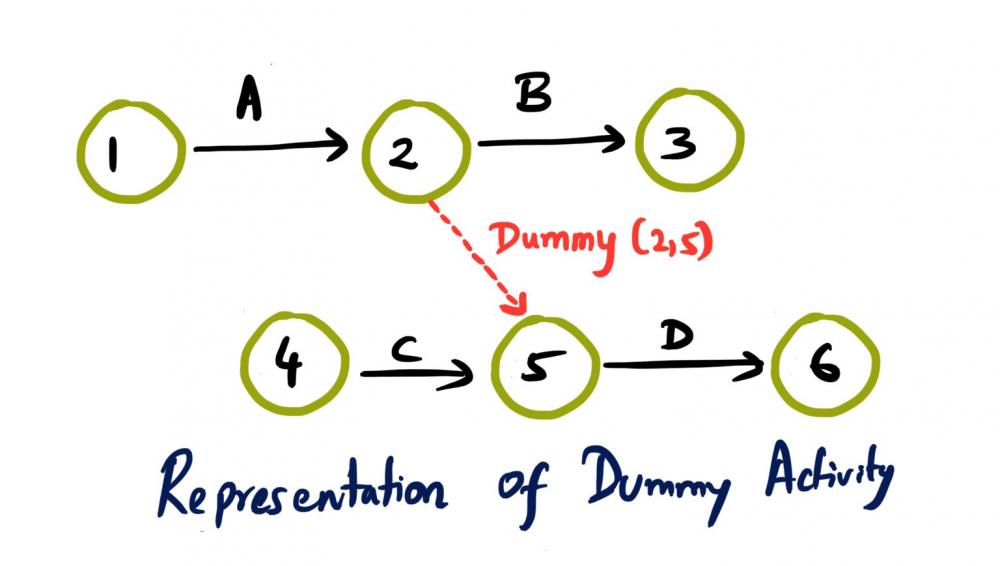

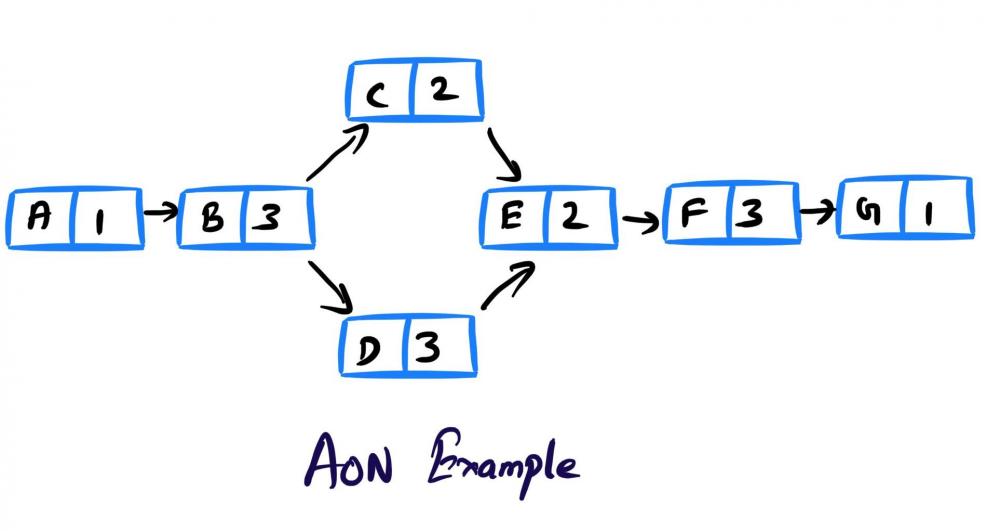

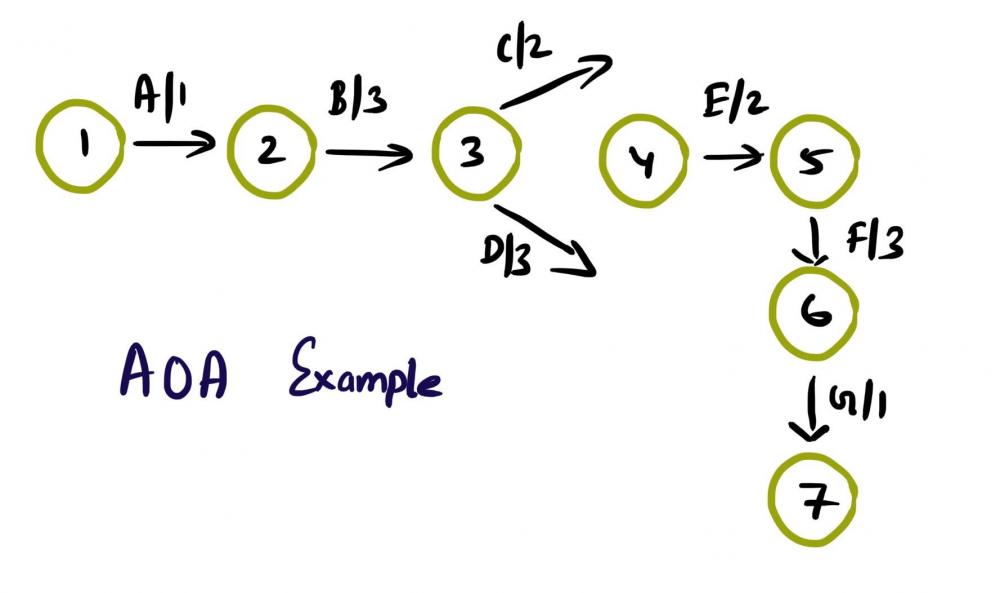

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!

Activity on Arrow (AOA): as the name implies, these network diagrams denote each activity as an arrow, and the nodes denote the start and end of an activity.

Activity on Node (AON): also referred to as the Precedence Diagramming Method (PDM); here the nodes denote activities and the arrows represent the logical relationships among them.

Decision: Even though both methods produce the same results, practitioners prefer AON over AOA because it does not require dummy activities. A dummy activity (connecting link) is an imaginary activity that consumes no time and no resources but is used to show a dependency between activities, so that we can maintain the network logic and avoid ambiguity (difficulty in interpretation). In AON, activities are represented by nodes and their interdependencies are shown directly by connecting arrows, so there is no need for dummy activities.

Considerations:
• AOA can have several different networks representing the same project, whereas AON representations are unique.
• AON diagrams are comparatively easier to create and interpret.
• Design changes are easier to make in an AON structure than in an AOA structure.
• AON focuses on tasks, whereas AOA focuses on events.
• Leaders often get confused by AOA networks and prefer to see AON representations.

Let's consider the example below and design the respective AOA and AON structures.
Activity: description (duration)
• A: Choose Project (1)
• B: Discovery (3)
• C: Get Go-ahead (2)
• D: Data Collection (3)
• E: Assemble Team and Kickoff (2)
• F: Finalize Actionables (3)
• G: Leadership Summary (1)

Although AON is advantageous, it becomes challenging in the following situations:
• Tracking a path by activity number is hard.
• When there are multiple dependencies, drawing and interpreting the diagram becomes difficult.

Ruling: Certain planning and optimization techniques specifically require an AOA network structure, and some require the AON format. It is hard to prefer one outright; choosing AOA over AON, or vice versa, depends on the specific project requirements. Nevertheless, the advantages of AON are more apparent, and it generally has the upper hand over AOA.

- Dark Data

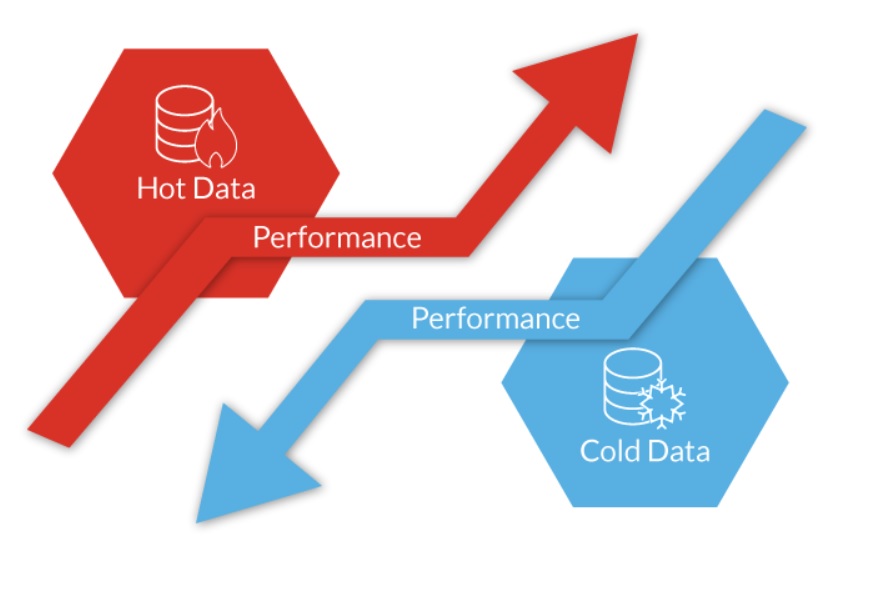

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!

"Data is more valuable than oil", but are we leveraging it to its full capacity? The answer is simply "No", and the unused data becomes dark data! Gartner coined the term 'dark data' and defines it as "the information assets organizations collect, process, and store during regular business activities, but generally fail to use for their analytics, business relationships and direct monetizing".

Dark data can be generated by an organization's systems, devices and interactions; most often it is collected by CRM, ERP, SCADA, HTTP, IoT and even Wi-Fi systems. It can be stored physically, on storage peripherals or in the cloud. While most dark data is unstructured, examples include, but are not limited to: application logs, customer records, geolocation, survey data, financial statements, customer addresses, contact details, CCTV footage, emails, chat messages, medical records, zip files, archived web content and code snippets.

The biggest challenges with dark data are:
• Security dangers (hacks)
• Compliance issues
• Data authenticity and high storage cost
• Brand reputation
• Opportunity cost

The risk associated with dark data can be mitigated by adhering to the audit and retention policies defined by the organization, and some best practices can have a high impact on managing it. The model below shows how data is collected, stored, retained and deleted, following an analyze, categorize and classify approach.

Model explained: start with data classification (Public, Internal, Restricted). While classifying, it is vital to bucket the data based on a few critical factors:
• Is it critical data?
• Is it a permanent document?
• Is it proprietary intellectual property?
• Does the document/data serve the current needs of operations?
Legal and regulatory requirement? (For instance, HIPAA specifies a 6-year minimum retention for its required documentation. By contrast, GDPR allows personal data to be stored for longer periods only when it is processed solely for archiving in the public interest, scientific or historical research, or statistical purposes.)
Hot data or cold data? (Hot data is accessed frequently and used for quick decisions, whereas cold data is older data that is not used frequently.)

Based on the classification, decide whether to store or delete the data. If we want to store it, define the retention period and how it will be useful. When we follow this approach, along with regular data audits and internal Data Life Cycle Management (DLCM), we can make maximum use of the data in the data pool.

Ways to leverage dark data: text/word mining, data mining methods, voice-to-text analytics, data analytics, prescriptive analytics, behavior analysis (which can be used to train AI models for prediction), big data analytics and visualization (SAP HANA), data forecasting, trend analysis and investigating past complaints.

Google’s approach to data management: “Some data you can delete whenever you like, some data is deleted automatically, and some data we retain for longer periods of time when necessary. When you delete data, we follow a deletion policy to make sure that your data is safely and completely removed from our servers or retained only in anonymized form.”

Apple’s approach to data storage: “Apple uses personal data to power our services, to process your transactions, to communicate with you, for security and fraud prevention, and to comply with law. We may also use personal data for other purposes with your consent.”

Final say: Data violations have received a lot of attention in recent years as businesses become more dependent on digital data, cloud computing, and remote working. As a result, compliance and regulation have become a requirement for ensuring information security.
Data analytics application suites can manage unstructured data in a unified way and can intelligently identify data sets across the organization in line with industry legal and regulatory requirements.
- Berkson's Paradox

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!
Berkson’s paradox is a special case of collider bias. In simple terms, this bias results from conditioning on a common effect of at least two causes. In even simpler terms, it happens when two variables appear to be negatively correlated in the sample data even though they are uncorrelated, or even positively correlated, in the overall population.

For instance, consider two ancestors, namely exposure (E) and disease (D), and a common descendant (C). Conditioning on C distorts the association between E and D; that is Berkson's fallacy. In the example, if we condition on the collider ‘hospitalization’, we can notice a reversal in the association between smoking and Covid.

This is very similar to Berkson's original work in 1946, where he observed a negative correlation between cholecystitis and diabetes in hospital patients, even though diabetes is a risk factor for cholecystitis.

One of the best ways to prevent this bias is to collect simple random samples from the population, which by itself reduces selection errors in data gathering. Make sure to define the population properly, and then examine statistically whether the sample is an unbiased representation of that population.
- Dimension vs Measure

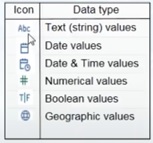

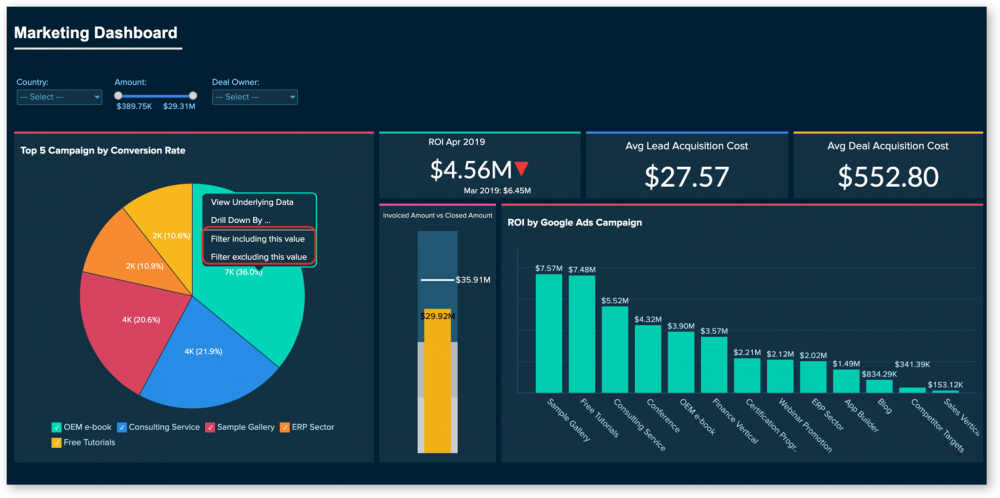

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!
Dimensions hold descriptive values such as names and dates, which are qualitative in nature, whereas measures hold numeric values, which are quantifiable. Dimensions and measures can each be discrete or continuous, so the possible data types are: discrete dimension, continuous dimension, discrete measure and continuous measure.

Both continuous and discrete measures can be aggregated (sum, average, count, min, max, percentile, std. dev., variance, etc.). However, a continuous measure shows the aggregated value as a continuous value, whereas a discrete measure shows the aggregated value as a categorical value.

Dimension examples (descriptive fields): Client Name, Client Segment, Client ID, State, City, Country, Postal Code
Measure examples (numeric fields): Profit, Unit Cost, Order Quantity, Sales, Salary

Most data visualization tools auto-detect data types; for instance, Tableau automatically detects data types and represents them as symbols.

Differences: I have used the Tableau data visualization tool as the reference to give an elaborate difference between dimension and measure. Below are some examples of effective usage of dimensions and measures in an overall data visualization dashboard: Example - Sales Dashboard, Example - Marketing Dashboard, Example - Revenue and Customer Distribution Overview.

Dimensions and measures are key to any data visualization tool, as they play a major role when working with data sets.
- Krippendorff’s Alpha vs Fleiss’ Kappa — When Is Alpha the Better Choice?

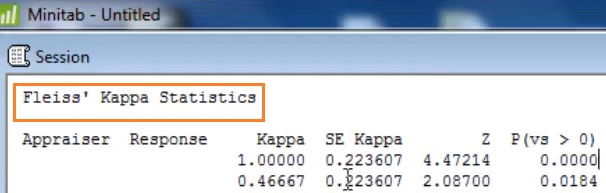

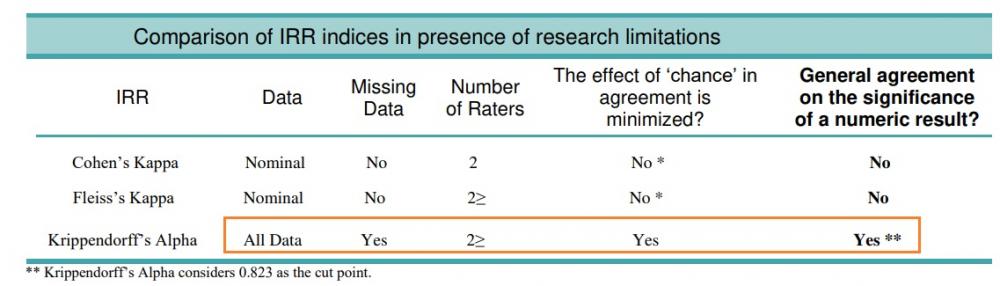

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!
Kappa is defined as the ratio of the proportion of times that the appraisers agree (corrected for chance agreement) to the maximum proportion of times that the appraisers could agree (corrected for chance agreement). Kappa ranges from -1 to 1; the larger the kappa, the stronger the agreement in that category. For instance, a kappa value of 1 represents perfect agreement.

Commonly accepted values for these reliability measures:

Cohen’s kappa (value: interpretation):
0.91 - 1.00: Almost perfect
0.80 - 0.90: Strong
0.60 - 0.79: Moderate
0.40 - 0.59: Weak
0.21 - 0.39: Minimal
0.00 - 0.20: None

Krippendorff’s alpha (value: interpretation):
0.80 - 1.00: Reliable
0.67 - 0.79: Acceptable for tentative conclusions
0.00 - 0.66: Not acceptable

Take Away: Stat practitioners should first examine the marginal distributions and should not uncritically interpret a kappa value as high or low, because prevalence, odds, rater independence, the impact on diagnosis and other factors can significantly influence the kappa statistic.

Kappa statistics represent the degree of absolute agreement among ratings. Popular kappa statistics include:
Cohen’s kappa: measures assessment agreement between two raters
Fleiss’ kappa: generalization of Cohen’s kappa to more than two raters

In statistical tools such as Minitab, Fleiss’ kappa is calculated by default for AAA (Attribute Agreement Analysis). As noted here, Fleiss’ kappa is based on observed agreement corrected for the agreement expected by chance. By contrast, Krippendorff’s alpha is based on observed disagreement corrected for the disagreement expected by chance.
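To make the agreement-based vs disagreement-based contrast concrete, here is a minimal Python sketch of Fleiss' kappa and of Krippendorff's alpha restricted to nominal data (ordinal, interval and ratio distance metrics are not implemented). The function names and data layouts (a subjects x categories count matrix for kappa; one list of coder ratings per unit, with `None` for a missing rating, for alpha) are my own illustrative choices, not Minitab's or any library's API, and the example ratings are illustrative only.

```python
from collections import Counter
from itertools import permutations


def fleiss_kappa(counts):
    """Fleiss' kappa from a subjects x categories matrix of rating counts.

    Every row must sum to the same number of raters (no missing ratings).
    """
    n_subjects = len(counts)
    n_raters = sum(counts[0])
    if any(sum(row) != n_raters for row in counts):
        raise ValueError("Fleiss' kappa needs the same number of ratings per subject")
    # Observed agreement per subject: share of rater pairs that agree
    p_i = [(sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
           for row in counts]
    p_bar = sum(p_i) / n_subjects
    # Chance agreement from the overall category proportions
    total = n_subjects * n_raters
    p_j = [sum(row[j] for row in counts) / total for j in range(len(counts[0]))]
    p_e = sum(p * p for p in p_j)
    return (p_bar - p_e) / (1 - p_e)


def krippendorff_alpha_nominal(units):
    """Krippendorff's alpha for nominal data; None marks a missing rating."""
    coincidences = Counter()
    for unit in units:
        values = [v for v in unit if v is not None]
        m = len(values)
        if m < 2:
            continue  # a unit with fewer than two ratings cannot be paired
        for a, b in permutations(values, 2):  # ordered pairs from different coders
            coincidences[(a, b)] += 1 / (m - 1)
    n_c = Counter()
    for (a, _), weight in coincidences.items():
        n_c[a] += weight
    n = sum(n_c.values())
    observed = sum(w for (a, b), w in coincidences.items() if a != b)
    expected = n * n - sum(v * v for v in n_c.values())
    if expected == 0:
        raise ValueError("alpha is undefined when only one category is used")
    # alpha = 1 - D_o / D_e with the nominal (0/1) distance
    return 1 - (n - 1) * observed / expected


# Illustrative data: 12 units rated by 4 coders; None = missing rating
units_with_missing = [
    [1, 1, None, 1], [2, 2, 3, 2], [3, 3, 3, 3], [3, 3, 3, 3],
    [2, 2, 2, 2], [1, 2, 3, 4], [4, 4, 4, 4], [1, 1, 2, 1],
    [2, 2, 2, 2], [None, 5, 5, 5], [None, None, 1, 1], [None, 3, None, None],
]

if __name__ == "__main__":
    print(f"alpha = {krippendorff_alpha_nominal(units_with_missing):.3f}")
```

Fleiss' kappa cannot be computed on `units_with_missing` at all (the rows have unequal rating counts), whereas alpha simply uses every pairable rating and drops the last unit, which has only one rating. For this data the sketch prints an alpha of about 0.743.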
Key Differences:
Fleiss’ kappa: cannot handle missing values; expected agreement assumes an infinite sample size; best suited for nominal data.
Krippendorff’s alpha: can handle missing values; the actual sample size is considered; can handle all levels of measurement.

Both Fleiss’ kappa and Krippendorff’s alpha can be recommended equally when the data is nominal and there are no missing values. However, Krippendorff’s alpha is preferred in the situations below, viz.:
Whenever data is missing
Higher than nominal levels of measurement (ordinal, interval, ratio)
When there is bias in the distribution of disagreements (even strong bias will not have a distorting effect)
When different units are rated by different numbers of raters (usually when there are more than 2 raters; it can be applied at any scale level)
When small samples make it problematic to obtain observation ratios by pair counting

Summary Table:

Final Take Away: Before deep diving into the reliability data, practitioners should select the inter-coder reliability index based on the context, the data properties and assumptions, including the level of measurement of each variable and the number of coders. Krippendorff’s alpha is usually more difficult and complex to compute than Fleiss’ kappa; however, it provides more dependable reliability estimates, particularly when research conditions are not ideal.
- Cobots

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!
Cobots are similar to industrial robots but smaller. They are also comparatively cheaper and much more user friendly. For large-scale mass production, industrial robots provide the best efficiency; however, for small and medium scale businesses, cobots can be much more effective when it comes to automation on the shop floor.

Cobots are collaborative robots: humans and robots collaborate in a shared space, which can optimize human work in various aspects. The International Federation of Robotics (IFR) defines multiple levels of collaboration, viz., Coexistence, Sequential Collaboration, Cooperation and Responsive Collaboration.

Traditional robots are the best fit for: large batches with small variability, complex deployment, a consistent environment, human monitoring, a focus on robot automation, big investments and a longer ROI.

Alternatively, cobots could be the best alternative for: low-volume, high-mix production, fast and easy deployment, agile adaptation to the environment, collaboration, a focus on End-Of-Arm-Tooling (EOAT), lower upfront cost and faster ROI.

Cobots in the service industry: this is often referred to as RaaS (Robots-as-a-Service), and a few of the uses include: Robotic-Assisted Knee Surgery (robotic arm assistance), Food Robots - Packaging (wrapper, vacuum sealer), Food Robots - Other Applications (palletizing, pick-and-place, logistical automation), Product Quality Inspection (cobot arms for visual inspection using 3D cameras), Aviation (cobot co-pilot, mainly for military UCAVs, unmanned combat aerial vehicles), Agriculture (smart farming: once the cobot identifies flowers, a fan is activated for effective pollination), Dairy (robotic milking) and Restaurant Cobots.

These cobots are identified and selected based on critical factors such as reach (500 - 900 mm), payload (2 kg - 16 kg), footprint (Ø 128 - 200 mm) and weight (10 kg - 35 kg). Technology advancements such as IoT features, together with capabilities such as heat sensors and thermal cameras, help cobots perform tasks more accurately for their use cases. The rise of 5G is anticipated to let cobots become more fully automated and perform tasks with greater accuracy.
- Sandbox

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!
A sandbox is one of the testing environments, and each environment has a particular purpose. It is critical that testers know all aspects of these environments, which leads to a better testing and QA strategy in the organization. Types of environments: DEV (Development), QA (Testing), SANDBOX (Isolated Virtual Environment), STAGING (Pre-production), PROD (Live), DR (Disaster Recovery).

Sandbox-evading malware is a known type of malware (malicious software) that can identify whether it is running within a virtual machine environment. A sandbox is a highly controlled environment used to test unverified programs that may contain malicious code, without harm to the host device. Sandbox-evading malware, however, does not execute its malicious code until it is out of the controlled environment. There are several recent instances in the industry where AI algorithms are used to help such malware evade virtual environments. Malware writers (aka cyber criminals) are themselves users of sandbox environments, and there are more than 500 evasion techniques to avoid detection and analysis.
There are many; a few common evasion techniques include:
Human-like behavior (interaction detection, like scrolling and mouse clicks)
System interaction detection (shutdown by payload, self-debugging)
File systems (specific files, directories, strings)
Hooks (mouse hooks)
Generic OS queries (specific username, computer name, host name)
Global OS objects (specific global mutexes, virtual devices, pipes and objects)
Windows Management Instrumentation (WMI) (Win32_Process class, Task Scheduler, last boot time, last reset time)
Timing-based evasion: delayed execution (stalling, dropper, logic bomb, extended sleep)
Obfuscating internal data (encrypting API calls, Domain Generation Algorithm (DGA))
Firmware tables (specific strings in the SMBIOS table)
UI artifacts (class names)
Registry (registry paths, keys)
OS features (debug privileges, unbalanced stack)
Processes (specific running processes, loaded libraries)
Network (specific MAC address, adapter name, anti-emulation)

To address evasion, organizations need to deploy strong systems (typically SaaS) that can bypass the anti-sandbox strategies malware uses to evade detection, and that continuously evaluate and monitor threat trends, potentially including vulnerabilities, exploits, active attacks, viruses and further malware, such as spam, phishing and malicious web content. Further factors to leverage while monitoring include:
Behaviors exhibited
Data reputation (is it hosted on a suspicious IP/URL?)
Digital certificate (correctly signed?)
VirusTotal (known sample?)
Industry reputation (popular application?)

Alongside this, deploying detection mechanisms such as the ones below can effectively control and counter evasion:
Changing sleep duration dynamically
Human interaction simulation
Adding real environment and hardware artifacts
Performing static analysis in addition to dynamic analysis
Using fingerprint analysis
Using behavior-based analysis
Customizing the sandbox
Adding kernel analysis
Implementing ML
Considering content disarm and reconstruction (CDR) as an extra security layer

These measures, when combined and deployed, can result in an effective security solution for countering malware evasion.
- 2x2 Matrix

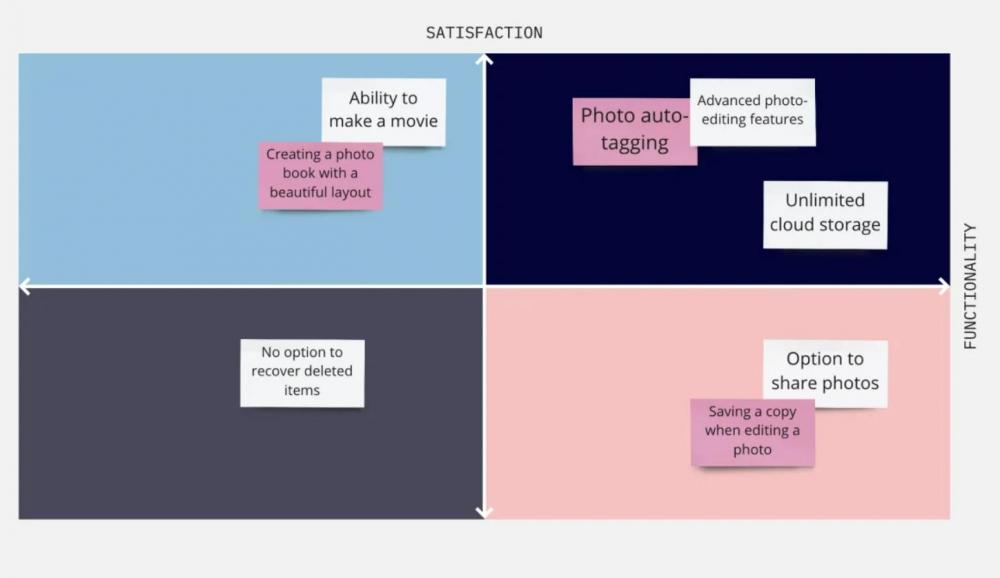

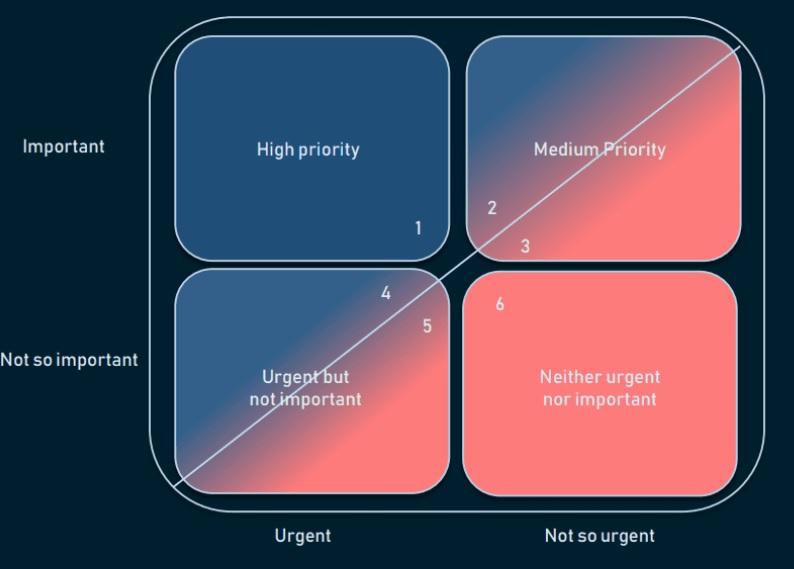

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!
A prioritization matrix is an essential and useful tool that helps break down tasks and activities when there is too much on the plate. It facilitates decision making and helps leaders focus on the activities that are most relevant, urgent, important and required for project and process sustenance.

There are many variants of the 2x2 prioritization matrix. A few frequently used ones are listed below:
RVCE Matrix (Risk, Value, Cost and Effort)
Eisenhower Matrix (Urgent, Important)
MoSCoW - value-based prioritization technique (Must Have, Should Have, Could Have, Won't Have)
WSJF (Value and Effort)
Kano (Performance, Must-be, Attractive, Indifferent)

RVCE Matrix: Decision criteria: Risk, Value, Cost and Effort. Decision/Outcome: Consider, Avoid, Investigate and Prioritize.
Eisenhower Matrix: Decision criteria: Important or not, Urgent or not. Decision/Outcome: Do, Decide, Delegate, Delete.
MoSCoW: Prioritization based on value/features. Decision: Must have, Should have, Could have, Won't have.
WSJF: Decision criteria: Value and Effort (High and Low). Decision/Outcome: Do Now, Do Next, Do Later, Don't Do.
Kano: Decision criteria: Satisfaction and Functionality.

Other applications/variants of prioritization models include Lean Prioritization, where effort is compared with ROI/degree of impact to classify outcomes as Low Priority, Just Do It, Reconsider, and Complex but Worthwhile; as well as Value and Risk, Value and Effort, and Value and Complexity matrices.

Benefits: allows us to analyze and compare results; removes bias; allows priorities to be ranked objectively; determines the most critical focus area; keeps the project progressing; enables better time management.

Depending on the type of project and the considerations involved, we can select any of the above models to focus on the right project and better manage our time.
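As a small illustration, the Eisenhower decision rule (important/urgent mapped to Do, Decide, Delegate, Delete) can be sketched in Python as below. The function name and the backlog items are hypothetical examples, not taken from any specific tool.

```python
def eisenhower(urgent: bool, important: bool) -> str:
    """Map a task onto the Eisenhower quadrants: Do, Decide, Delegate, Delete."""
    if important and urgent:
        return "Do"        # important and urgent: act now
    if important:
        return "Decide"    # important, not urgent: schedule it
    if urgent:
        return "Delegate"  # urgent, not important: hand it off
    return "Delete"        # neither: drop it


# Hypothetical backlog: (task, urgent, important)
backlog = [
    ("Fix production outage", True, True),
    ("Plan next quarter's training", False, True),
    ("Reply to routine status request", True, False),
    ("Reorganize old shared folders", False, False),
]

for task, urgent, important in backlog:
    print(f"{eisenhower(urgent, important):<8} {task}")
```

The same two-question structure carries over to the other 2x2 variants; only the axes (for example, value and effort) and the quadrant labels change.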
My personal favorite model is the Eisenhower Matrix, which, in a way, combines the essence of the other prioritization models.
- Hanedashi

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!
Hanedashi: Auto-Eject / Auto Unloading / Automatic Ejection. This technique lets machines remove finished parts from the process automatically. Below is a typical example of manual and automatic unloading of the finished product.
Manual Unloading:
Automatic Unloading:
Manual effort is needed only for loading, not for unloading.

Hanedashi is crucial for a "Chaku-Chaku" ("Load-Load") line. In Chaku-Chaku, the operator picks the finished part from Machine A and loads it into Machine B to complete the cycle. If Hanedashi is used, the machine unloads the parts itself, without operator effort, and the operator only loads. Thus, Hanedashi can effectively eliminate the following wastes in a lean manufacturing setup:
Transportation: wasted time moving materials unnecessarily
Motion: wasted time and effort due to unnecessary operator movements
Waiting: wasted time waiting for the finished product to complete the next steps

Some benefits of Hanedashi: operations on multiple machines at the same time become possible, operator productivity improves, and working conditions improve due to better ergonomics.
- A/B Testing

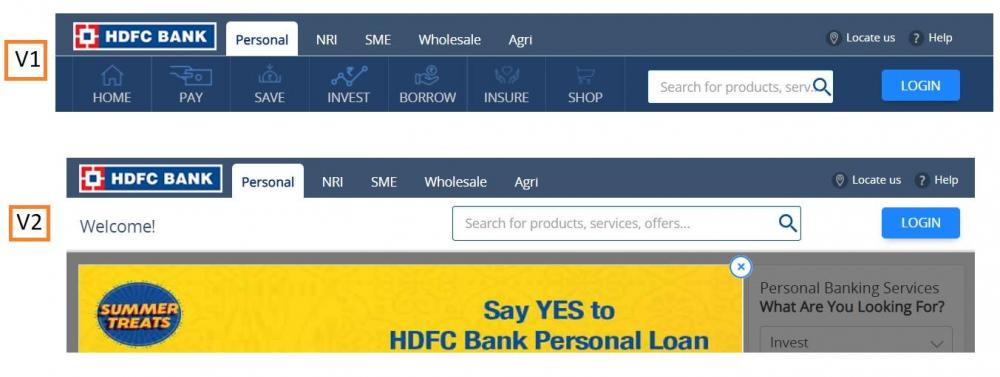

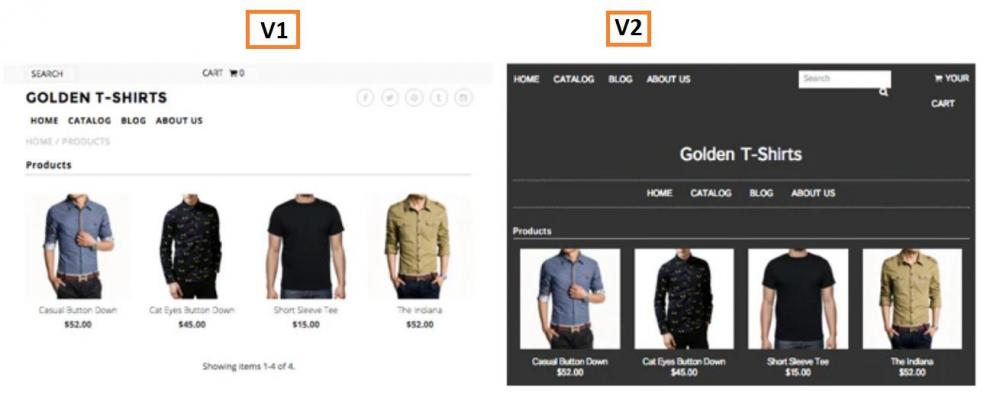

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!
A/B Testing (Split Testing, Bucket Testing): A/B testing lets marketers better understand which formatting of a website or any piece of content makes customers and clients more engaged. It compares two versions of a webpage (sometimes more) to identify which variant attracts more clicks. It is predominantly used for web pages, but also for comparing emails, application interfaces and advertisements. The testing process is simple; below are a few of its milestones: collecting data, identifying conversion goals, generating a hypothesis, creating variations, running the experiment, analyzing results. Example 1: Example 2:

Statistical analysis is performed to identify which variation/version performs better in line with the conversion goals. Tools like Stats Engine, Bloomreach, Siteimprove & Semrush use built-in advanced statistical models that can produce results in real time.

Is A/B testing similar to multivariate testing? A/B testing compares two pages with entirely different headlines, text and images. A multivariate test compares otherwise identical pages in which elements such as fonts and sizes differ.

Below are some essential A/B testing variables: Layout, CTAs (Calls-to-Action), Content, Offers, Color, Size, Email Subject Line, Headlines, Email Sender, Pricing Scheme, Copy Length, Landing Page, Tone, Images, Timing, Frequency, Video vs Text, Sales Forms, Targeting and Personalization, Sales Copy, Data Visualization.

Mostly used in the following industries: Media, Travel, E-commerce, Banking, Fin-Tech, Technology.

Benefits of A/B testing: helps achieve conversion goals/sales, supports data-driven decisions, improves user engagement, reduces bounce rates, ease of analysis.

It would be wise to use A/B analysis and multivariate analysis together.
First, A/B testing can determine which layout and design converts well, and then multivariate testing can fine-tune the page formatting to attract widespread traffic.
- Correlation vs Covariance

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!
Both correlation and covariance measure the linear association between two variables. To make the key difference apparent: correlation measures the strength (as well as the direction) of a linear relationship between two variables, whereas covariance measures only the direction of the relationship.

Specific comparison:
Values: Correlation is standardized; covariance is unstandardized.
Units: Correlation is unitless; covariance has units (the product of the two variables' units).
Scale: A change in scale does not affect the value of correlation; a change in scale does affect the value of covariance.
Range: Correlation: -1 to +1; covariance: -∞ to +∞.

Why does the correlation value lie between -1 and +1? Correlation is covariance divided by the product of the standard deviations of the two variables, r = cov(X, Y) / (σX · σY). It is therefore a scaled-down (standardized) version of covariance, and by the Cauchy-Schwarz inequality its value always lies between -1 and +1.

Inferences from Analysis:
Covariance inference: Positive - both variables increase or decrease together (they move in the same direction); Negative - inverse, if one variable increases the other decreases (they move in opposite directions).
Correlation inference: +1 - perfect positive linear relationship; 0 - no linear relationship; -1 - perfect negative linear relationship.

Some more examples:
Correlation examples (Pearson r: relationship): 0: no relationship; 0.466: moderate positive relationship; 0.95: large positive relationship; -0.96: large negative relationship.
Covariance examples (covariance: relationship): 0.0036: positive; 0: no linear relationship; -0.007: negative; -0.0376: negative.

Covariance can take any value, which makes the number hard to interpret on its own.
Sample data set (G Price, CO Price):
49000, 95.17
48600, 98.4
48600, 98.4
48600, 98.4
48250, 97.17
48000, 97.16
47800, 101.24
47800, 101.24
47800, 101.24
47950, 103.66

Based on the same data set, the association summary is: Correlation (R) = -0.74682; Covariance (G, CO) = -744.37.

There are numerous applications of correlation and covariance; some are listed below:
Data science: covariance is one of the most frequently used measurements, and insights from covariance analysis give more clarity on multivariate data.
Stock market: investors, traders and analysts often use correlation and covariance, specifically to uncover hidden correlations between the stock returns of one company and another, which can help minimize investment risk.
Implied Correlation Index by CBOE (Chicago Board Options Exchange): this tracks the correlation implied by the volatility of index options relative to a weighted portfolio of options on the index components.
Banking and insurance: exploratory analysis gives more insight into variable relationships, which assists with customer churn and retention.
- Screening Design

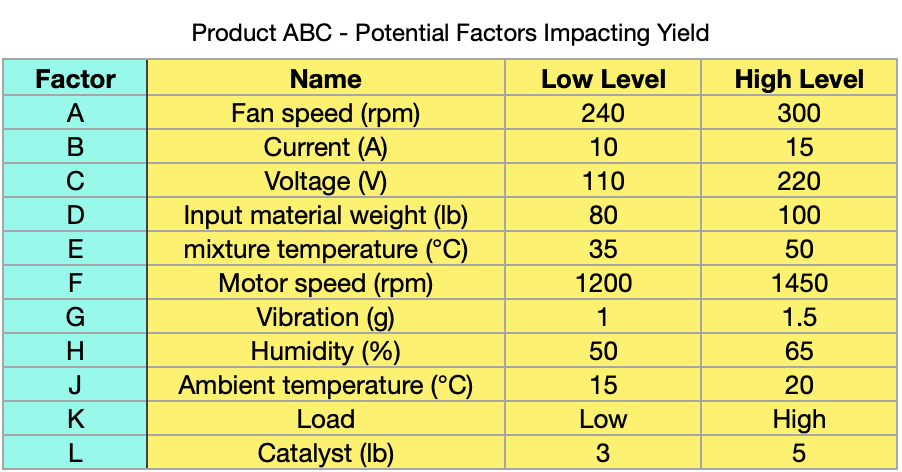

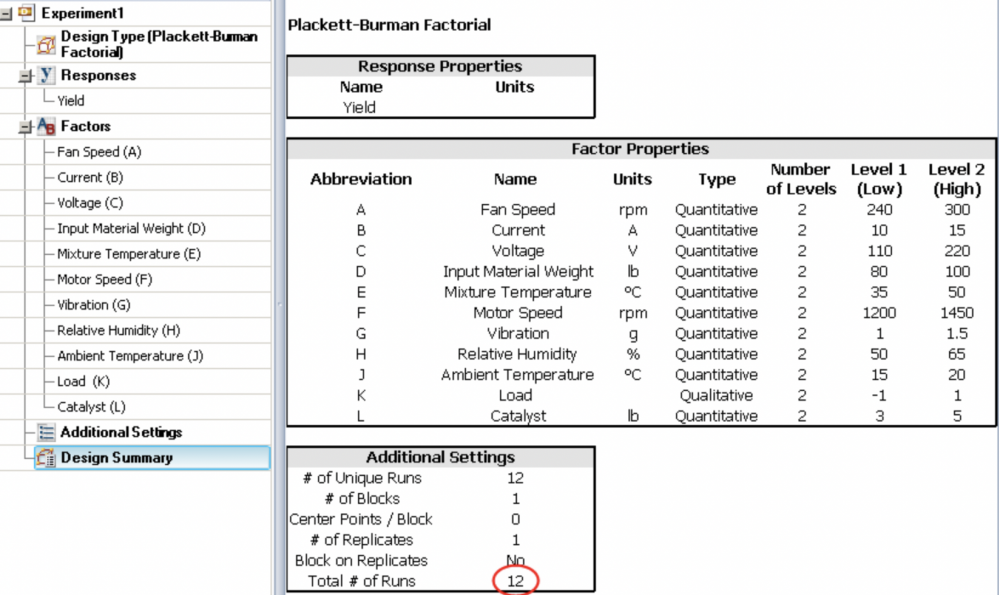

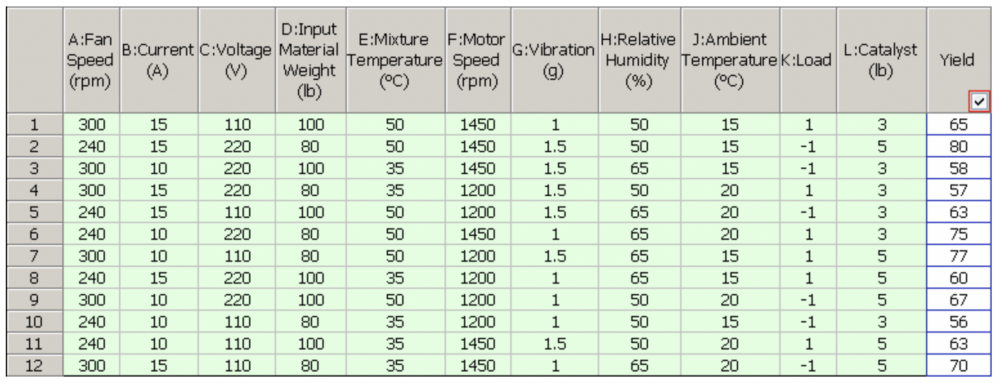

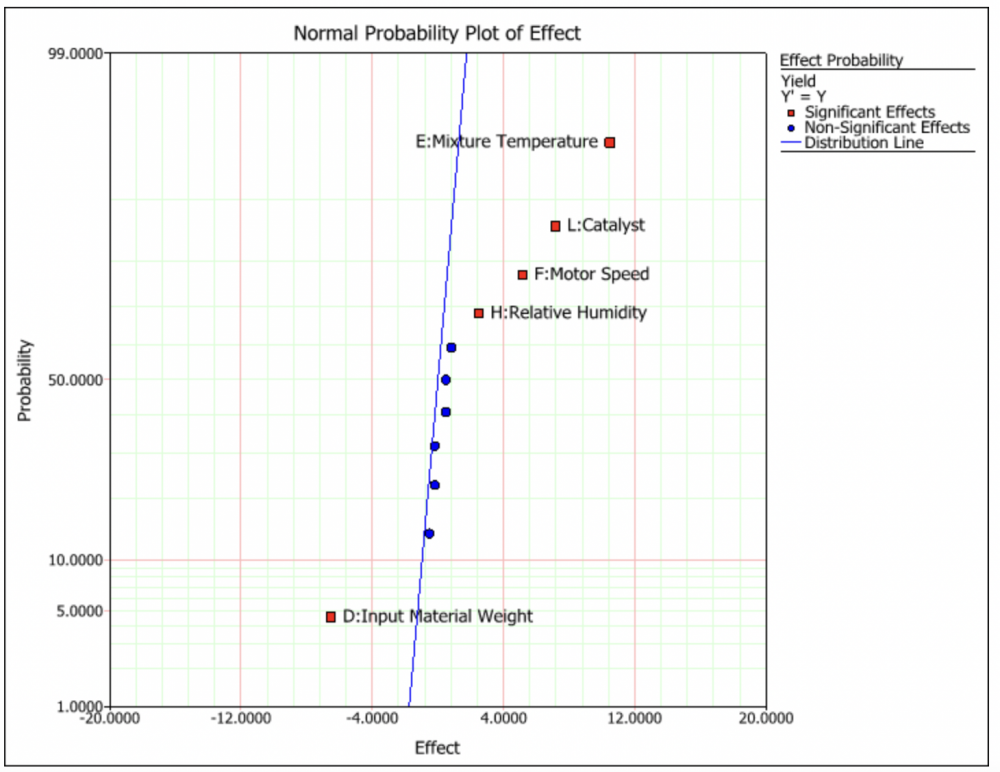

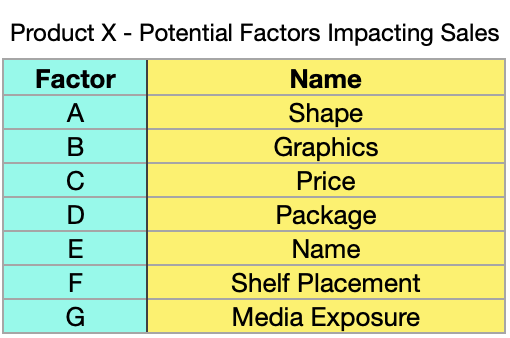

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!
Screening designs: screen out factors that are not statistically significant.
Intention: used for exploratory analysis.
Focus: estimates main effects, assuming interactions are negligible.
Most suitable for industrial experimentation during the early stages of design.

When there are many potentially significant factors, a screening design can be used to condense the list to fewer ones. It often becomes tedious to study all the factors in detail. Screening designs can be used effectively here because, compared to traditional design methods, they require fewer experimental runs. In short, the experiments are “small and efficient”.

Often-used screening designs include: Fractional Factorial Design (2-level), Full Factorial Design (2-level), Definitive Screening Design and Mixed-level Design. A few specific designs: Plackett-Burman design, Taguchi methods, Cotter design.

There are many different screening designs; some considerations for the best design fit are the questions to answer before finalizing the design method:
What is the overall goal?
What are the specific response types?
How are these responses measured?
What factors need to be considered?
What should the range of each factor be?
Do we have blocking factors?
Are we working on a split-plot problem?
Do we have problematic combinations of factor settings?

Answers to these questions help us pick the right design for screening.

Let's take a chemical product ABC as an example and use a Plackett-Burman design (a 2-level fractional factorial design). Based on preliminary analysis, 11 potential factors were identified that might impact the yield of the chemical product, as listed in the table. If we simply ran a 2-level full factorial design, the total number of runs would be 2^11 = 2048 runs.
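For intuition on how 12 runs can cover 11 factors, here is a minimal Python sketch that builds the classic 12-run Plackett-Burman design from the standard generator row `++-+++---+-` (11 cyclic shifts plus a final all-low run). The post's actual factor names and yield data are not reproduced here. The `main_effect` helper is a hypothetical illustration of how main effects are read off such a design, not the JMP/Minitab analysis shown in the post.

```python
# Generator row for the 12-run Plackett-Burman design (11 two-level factors)
GENERATOR_12 = "++-+++---+-"


def plackett_burman_12():
    """Return the 12 x 11 design matrix with entries +1 / -1."""
    signs = [1 if ch == "+" else -1 for ch in GENERATOR_12]
    k = len(signs)
    # Runs 1-11: cyclic shifts of the generator row
    rows = [[signs[(col - shift) % k] for col in range(k)] for shift in range(k)]
    rows.append([-1] * k)  # Run 12: every factor at its low level
    return rows


def main_effect(design, response, factor):
    """Mean response at the high level minus mean response at the low level."""
    high = [y for row, y in zip(design, response) if row[factor] == 1]
    low = [y for row, y in zip(design, response) if row[factor] == -1]
    return sum(high) / len(high) - sum(low) / len(low)


design = plackett_burman_12()
for run, row in enumerate(design, start=1):
    print(f"Run {run:2d}: " + " ".join("+" if v == 1 else "-" for v in row))
```

Every column holds six high and six low settings, and every pair of columns is orthogonal, so each main effect is estimated from all 12 runs. Main effects are, however, partially aliased with two-factor interactions, which is why this design fits screening when those interactions are assumed to be of trivial importance.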
Since some of the interactions between the potential factors are likely to be of trivial importance, a Plackett-Burman design can be used effectively here. Let's run the design with the base number of runs set to 12. Below is the summary of the design settings: The factor combinations and their yields for the 12 runs are shown in the table: The effect probability plot can be used to identify the significant few (the important factors in the experiment), shown as red squares in the plot. This brings the potential factors down from 11 to 5.

Properties of the generated design can also be evaluated with various other outputs, viz., Power Analysis, Prediction Variance Profile, Effect Probability Plot, Fraction of Design Space Plot, Prediction Variance Surface, Estimation Efficiency, Alias Matrix, Color Map on Correlations and Design Diagnostics.

Screening designs are not limited to industrial experimentation; they can be applied in other functions as well. For instance, consider a marketing example: 2^7 experiments, or 128 runs, could be too many for the tight delivery timeline of Product X, but a screening design based on preliminary analysis can help the product achieve new sales success.

Benefits of screening designs: relatively inexpensive (saves $'s), an efficient approach for process improvement, experiments can run with limited resources, effective simulation.
- Measles Chart

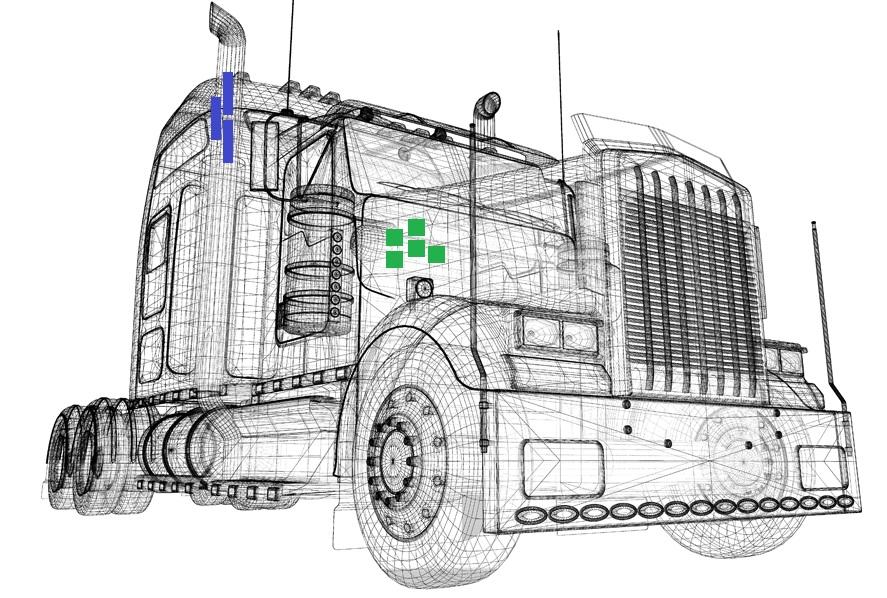

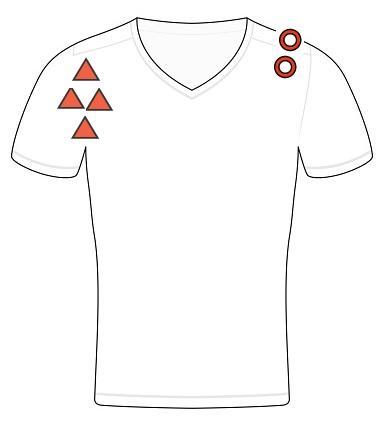

Mohamed Asif Abdul Hameed replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!
A measles chart, commonly referred to as a defect location check sheet/map, is a graphical analysis tool. It can be compared to a scatter plot (from a clustering viewpoint) combined with a defect concentration diagram. To use a measles chart, we need an image of the subject of interest. Whenever there is an issue, we mark its location on the image, using different symbols for different issue categories. Based on the clusters, we can identify exactly where on the image the issue is frequent and recurring. This helps distinguish true failures, handling failures and test errors, so we can move into category-wise solution mode right away, with a quick turnaround.

The example images can help operators quickly identify where defects are happening and focus on the root cause of the issue to take action. Undoubtedly, this helps in defect reduction.
Example 1: Defects in a side-by-side refrigerating unit
Example 2: Defects in an adventure touring motorcycle
Example 3: Manufacturing defects in a heavy-load transport truck
Example 4: Stitching faults in shirt manufacturing
Example 5: Casual shoe adhesive faults

Benefits of a measles chart: provides a visual indication of defects; easy to deploy; effective defect prevention based on the application; quantifies defects and issues by category and location; helps identify the frequency of defect occurrence; can be performed at factory level, on the production floor.

Precisely, a measles chart is a structured analysis that helps locate, diagnose and correct frequent issues/problems on the work floor to improve operational efficiency. This analysis can help expert teams look back into upstream processes to identify and fix the issue permanently.
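The "quantify defects by category and location" benefit can be illustrated with a small tally. The Python sketch below assumes each mark on the chart has been recorded as a (zone on the image, defect category) pair; the zone names and marks are hypothetical, and named zones stand in for the positions an operator would mark directly on the image.

```python
from collections import Counter

# Hypothetical measles-chart marks: (zone on the image, defect category)
marks = [
    ("door hinge", "scratch"),
    ("door hinge", "scratch"),
    ("door hinge", "dent"),
    ("left panel", "scratch"),
    ("door hinge", "scratch"),
    ("control panel", "gap"),
    ("left panel", "dent"),
]

by_location = Counter(zone for zone, _ in marks)
by_location_and_category = Counter(marks)

print("Defects by location:")
for zone, count in by_location.most_common():
    print(f"  {zone:<14}{count}")

# The densest location/category cluster is the first candidate for root cause analysis
(zone, category), count = by_location_and_category.most_common(1)[0]
print(f"Most frequent cluster: {zone} / {category} ({count} marks)")
```

Here the "door hinge / scratch" cluster would stand out, which is the kind of recurring hotspot the chart is meant to surface visually.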
More reading, including a comparative study of the defect concentration diagram (a subject similar to the defect location map), can be found at the link below:
- Control Charts for Continuous Data