-

Prashanth Datta changed their profile photo

-

Waterfall Chart

Prashanth Datta replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!Waterfall diagram aka Bridge Chart in its simplest form is a graphical representation of how you will achieve your desired goals in a systematic (incremental or decremental based on the goal KPI) way. It helps with a neat visualisation of 'WHAT' and 'BY HOW MUCH' variance you will achieve your goal KPI. For example, if a BPO wants to improve its current CSAT say from 80% to 85%, post a thorough Measure and Analyze phase (with ground rules of Define and Measure addressed here to improve CSAT by 5%) with identified tangible and actionable root causes we can attribute which action item can yield what percent improvement and plot a neat Water Fall/ Bridge Plot. In this example, post analysis we can say the bride to 5% can be say 2% from Bottom Quartile Agent Management, 1% from Knowledge Management, 1% from Customer Handling skills and Communication and possibly another 2% by stacks realignment. This approach will give a focused action plan and will help us focus on 'critical few' actions' with a timebound improvement plan. This can be revisted at planned intervals to see if the action plan is working before moving the same to Control phase. A downward trend bridge can be Attrition Management

-

Pareto Analysis

Prashanth Datta replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!The Pareto Principle or the 80/20 rule essentially means 80% of the Output comes from 20% of the input. This no doubt is a very important tool in Six Sigma Analyze phase to identify those critical root causes which can make some significant changes to my output Y. Given most of our Six Sigma projects are time bound, we need to work with the approach on "Not to Boil the Ocean". As per Pareto Principle, we identify those top 20% of the inputs which when addressed can bring about the desired changes for my Output Y i.e. either shift the mean or reduce the variation. We then try to develop solutions during our Improve Phase for those identified X's. However, Pareto Principle comes with it's limitations. To name a few... Pareto Principle holds good when the frequency of occurrence of any issue is of significant. However it doesn't cover the severity of the issue. The 80/20 rule shouldn't be comprehended to add to a 80+20 logic. This is not a 100% pie that we are working on to split into a 80% and 20%. It only shows that 20% of the inputs has a significant impact on 80% of the output. In-fact based on the business the inputs % can be changed from 20% to 30% or 40% as long as the inputs identified can bring about the desired changes basis project requirement. 80/20 doesn't shouldn't stop you looking other X's which can have it's impact. As the X's which fall under 20% are those critical X's which when addressed brings the desired changes to Y, the one which falls outside can still make a difference when addressed. These X's outside the 20% bracket can have a "Just Do It" solution or Low hanging fruits which still can be worked out while we take a statistical approach to address the critical Xs in Improve phase. A basic cognizance of when and where to apply Pareto principle should also be considered. For example if I look at my talent building capabilities or saving money, while I identify 2-3 potential capabilities which can enhance my skill sets or investment potential, we shouldn't ignore the other aspects which brings in those incremental benefits. Bottom line is to stay Agile - while you focus on your core X's to bring about the desire changes, don't ignore the small X's which can give you incremental benefits. Despite these limitations, for most of our regular projects Pareto is still a good tool to Identify our Critical X's.

-

Root Cause

Prashanth Datta replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!Six Sigma summarizes its problem statement as a mathematical equation in the form of Y=f(X1,X2,...,Xn). The Y here represents the Dependent, Output or the Effect and X is the independent, Input or Cause. In order to improve the outcome Y, which could be either shifting the mean or reducing the variation in the process, the systematic approach is to control your X's which are your causes. Now the question comes will all X's make an impact on Y. Let's look at an example. I am a 2 Wheeler manufacturing company and see my Sales are gradually declining quarter on quarter. I get my Quality Management Team to review the situation and come up with an action plan. The QM team, post completing the define and measure phase, initiate the analyze phase and using techniques like brainstorming sessions gather all the causes for the decline in Sales. Listed below are the pointers 1. Increased Government Tax structure having bearing on my overall price. 2. Poor promotional offers vs. Competition 3. No Proper Customer Service / Demos 4. Increased rains and bad weather forecast in major cities making customers to speculate 5. Poor feedback on Post Sales Support. 6. Design Issues - Not appealing to youth Now let us categorize each of the above inputs into 3 categories, a. Noise Inputs (N) - Inputs(X) that impact output variable (Y) but are difficult or impossible to control. In our case we can keep the Increased Government Prices and Bad Weather forecast under this bucket. As a company, I don't have any say on both these things. b. Controllable Inputs (C) - X's that can be changed to see the effect on Y's. Also know as Knob variables, these inputs has the ability to change the output within the process setup. In our case, Design Issue is a Controllable Input. However, this needs some time as it has to go back to design team, researched, developed and implemented. c. Critical Inputs (X) - X's that have been statistically shown to have a major impact on the output Y. Also, in our Pareto it can be on top call driver list where the likelihood of occurrence is most likely and controlling the same would be Somewhat Easy to control. We can now see how the overall Cause has systematically broken down into Noise, Controllable and Critical Cause and my Critical Cause can be termed as Root Cause.

-

Sample Size

Simply stating, the One-Sample T - test compares the mean of our sample data to a known value. For example, if we want to measure the Intelligence Quotient [IQ] for a group of selected people in India, we compute their IQ using a set of predefined tests (mapping to global standards). With the results we get the average IQ for the team selected as well as their individual IQ scores. This average IQ score of the group can always be compared to a known value of 82, which is the average IQ of Indians [which is already computed by accredited testing organizations]. Further, an average score of < 70 means poor IQ and the lower threshold is also computed and made available through global studies. In this case, we can see two sets of averages that can be compared to your teams evaluated IQ score to draw some meaningful conclusions i.e. if group scores <70, they are poor, close to 82 maps to Indians average IQ scores and greater than 82 implies the group has some really intelligent folks. While you may want to strengthen your argument by further statistical analysis, it serves as a starting point of discussion. One Sample T-test is used when we don't know the population standard deviation. Like any other statistical testing, One Sample T-Test also works on certain assumptions. To sum up the assumptions, Dependent variable Y, should be a Continuous data type Data analysed should be independent No significant outliers in data as we are keeping mean as reference here Data is normally distributed. Further, as we are aware that one of the technique that we use to identify critical X's are statistical hypothesis testing. An interesting question that we will land up is how much of data in sample size should be analyzed to arrive at a meaningful conclusion i.e. data leading to root cause identification, so as we can build more effective solutions during our Improve Phase. The sample size calculator at https://www.benchmarksixsigma.com/calculators/sample-size-calculator-for-1-sample-t-test, is a good place to start with. Before I delve into the calculator specifics, let me take an example. We have a Diabetic Clinic where they keep HbA1C readings as their baseline measurement of their patients. While I am not getting into the technicalities of how HbA1C readings work, a global average of 8 is kept as acceptable. The clinic has been running tests for their patients and computing the results. Their sample data gave an average HbA1C reading of 8.04 with a Standard deviation of 0.34. As expected, the Clinic had introduced a new alternative diabetic drug when the computed the sample average of 8.04. The clinic now wants to run an hypothesis if the new drug has really helped them to bring diabetes under control. While we want to define the problem statement and use Hypothesis analysis to see if the change in drug has resulted as a critical X, the first step is to really see what amount of data needs to be evaluated before proceeding further. The calculator will help us with this critical step. Looking at the parameters of the calculator, Confidence Level - Preventing Type I error - This implies rejecting null hypothesis while it remains true. As a rule of thumb 5% rejection is acceptable which means 95% probability we need to prevent this Type I error. Lets keep this value to 95% Power of the Test - Preventing Type II error - This implies accepting null hypothesis while it is false. By rule of thumb we keep it at 10%, which means 90% we need to prevent this error. [ Type and Type II error can be subjected to change based on risk appetite of producer and consumer] Reference Mean Value - we will keep it 8 as it is defined HbA1C globally accepted value as normal. Sample standard deviation - we will keep it at 0.34 basis the samples. Sample mean value - 8.04 as arrived from the tests. With the above data, we see we need 759 samples to check if our mean is similar to reference mean. Anything less than this will not give us any meaningful inference. An interesting thing to observe is, if we compromise on our Type I and Type II error i.e. accepting more Producer and consumer risk, the sample size will fall. Again, it depends on the industry where you are analyzing the data. While Medical, critical research industries will not accept high allowances other industries may allow some tolerance. In summary, this calculator can help to identify sample size when sample mean is available, especially in service sectors to start with some basis analytics in analyze phase to come up with some good inferences for solution building activities.

-

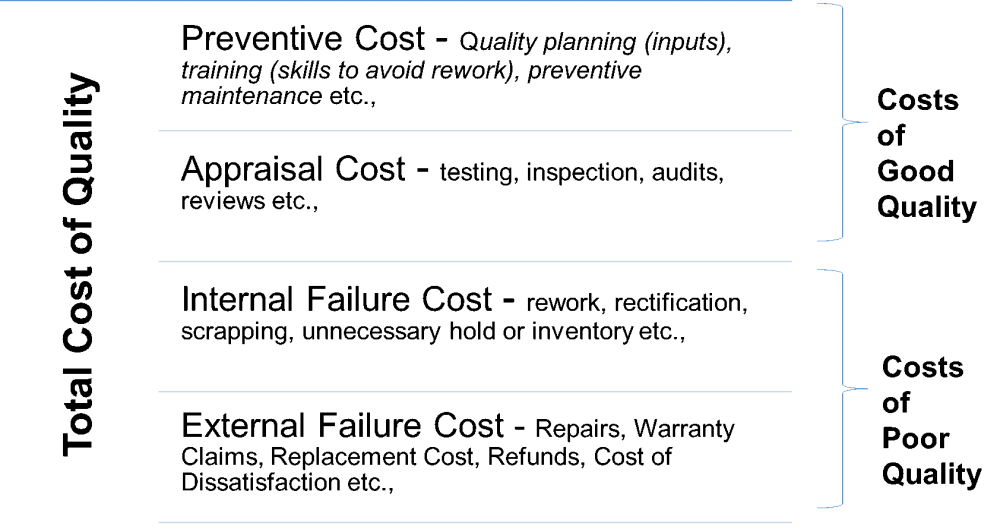

Cost of Poor Quality

Prashanth Datta replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!Before analyzing the COPQ calculator, let us quickly understand what COPQ is all about. As an organization, when we commit to deliver a product or service to our customers, it is deemed that the quality of this product or service meets and/or exceeds our customer expectations. Hence, right from the Design or Planning phase itself, it is important to give a lot of emphasis to the identified quality parameters associated with the product or service. When it comes to the financial planning part of your project, it is extremely important to assess the budget that needs to be allocated towards meeting the quality criteria. The two primary costs that will be allocated first are Prevention Cost and Appraisal Cost. Prevention Cost generally includes the budget allocated for quality planning (inputs), training (skills to avoid rework), preventive maintenance etc., Appraisal Cost generally includes the budget allocated for testing, inspection, audits, reviews etc., In real world scenario, it is often seen that, once the product or service is developed and ready for deployment, there are possibilities that we identify certain defects and post release to market, we can see a set pattern of complaints reported by our customers which needs to be fixed. The former, termed as Internal Failure or Defects still needs to be fixed before we deploy the product or service for which we will need to spend money. Latter, which comes from customer, termed as External Failure or Defects, obviously needs to be addressed but has a larger cost implication. We can categorize these two as secondary costs as below Internal Failure or Defects which generally includes rework, rectification, scrapping, unnecessary hold or inventory etc., External Failure or Defects which generally includes Repairs, Warranty Claims, Replacement Cost, Refunds, Cost of Dissatisfaction etc., While the Primary Cost associated with Prevention and Appraisal Cost are considered as Cost of good quality, as it strives towards achieving the required quality parameters as committed to the customer, the secondary cost of Internal and External failure are considered as Cost of Poor Quality as it has a negative impact owing to a defective and inefficient product or service. Summary as below. Cost of poor quality is generally referred to as non-conformance cost and acts as a good indicator to the company quality policies and programs. A fairly less trending COPQ indicates a strong governance around Quality Management Programs. Reducing the Cost of Poor Quality in itself is a very strong trigger and provides a good business case for your Lean Six Sigma Projects in the organization as it definitely calls for a systematic DMAIC approach to reduce the cost of non-conformance. Let us now look at a very basic hypothetical example to compute Cost of Poor Quality. Company AAA manufactures kids play area products – mainly gears such as Swings, Slides, See-Saw etc., Company JKL is a newly formed Kids Play Area Institution and have placed an order for 100 slides for all their offices across India. There is a SOW signed between both the companies with terms and conditions which includes Safety clause (as used by kids), strict delivery timelines, penalty clauses for delayed service etc., Each Slide was priced at INR 6000/- all inclusive (including installation, maintenance etc.,) Post production of 100 slides, it was realized that inclination of the slides were not good enough to give a smooth glide down. Given the core production part was completed, it was not possible to reassemble the set. Post some calculations, it was found that using a tension spring, they can slightly pull back the slide towards the ladder steps (each step is separately assembled to side bars) to give the required further inclination for an enjoyable slide down. The team now had to include a tension spring into the set-up and have the same incorporated with additional efforts. In summary, there was one Internal Defect found and had to include the cost of rework i.e. cost of tension spring, required adjustments on both slide and ladder set up and labor cost to make the adjustments. Also, this was the only workable option that company AAA could opt to meet the delivery timelines. With products delivered, JKL Kids Play area installed these slides across their 5 offices (20 each). While they realized the inclusion of tension spring which perhaps had led to some dissatisfaction owing to losing the aesthetics of the set-up, they kind off ignored it as the overall requirement was met. External Defect Synopsis - Within a Span of 3 months, 2 branches reported issues about steps 5 and 6 loosening on the ladder side where tension springs are pulled towards the side bars, due to the stress called by the pull by tension springs. While JKL reported the issue to AAA, within next 15 days all branches flagged the issue and nearly 70 out of 100 slides had this issue. Given it was steps to ascent, they expressed their anxiety as kids were using it and asked for quick fix. While JKL insisted for complete replacement of units, AAA assured of a quick work around. AAA had to send their technicians to replace the two steps firmly to side bar and use additional clamping to support through extended bars connecting the slide and ladder. Infact, they had to take additional steps to clamp all 10 steps to avoid any future issues. An external defect was called out here by customer and had to be fixed but more importantly left the customer unhappy. Let us now look at the cost of poor quality using our calculator at https://www.benchmarksixsigma.com/cost-of-poor-quality/ a. Internal Defects Identified = 1 per slide [No proper sliding experience]. So for 100 units, I need to input 100 defects in he calculator b. Average cost of internal rework = Cost of tension spring + additional labor cost effort = INR 75/ + INR 150 = INR 225/- c. External Defects Identified = 1 [Steps on Ladder sides loosened]. Issue was found with 70 units only. So in calculator, I need to input 70 units. d. Average Cost of external rework = Cost of replacement of 2 steps+ additional bars to hold the steps+ additional clamps to hold all steps + cost of technician = INR 100 + INR 300 + INR 500 + INR 400 = INR 1300 e. Penalties / Liabilities = INR 25000 claimed by company JKL for lost revenue As per the calculator, Total Cost incurred due to internal and external defects are INR 138,500. AAA had sold 100 slides for INR 6000/- each i.e. INR 600000/- all inclusive. Let us assume that AAA had planned for 30% profits with no investment on Preventive and Appraisal costs. So profits made were INR 180,000/ - With the Cost of Poor Quality, they lost around INR 138500/- and net effective profit is around INR 41500. ************************************************************ Taking my example above and mapping to calculator, below few call outs 1. Internal Defects Identified – In my scenario, it was 1 defect per piece so for 100 units sold, it would be 100 defects. In case if it was more than 1 defect in my example, it would have been number of defects per piece * number of pieces. My feedback on calculator - If it is made more explicit in the calculator to input total number of defects across all units or have two separate fields like Defects/Unit and Total number of units which will land up with right number of defects for which the cost has to be estimated. For Services industry, we can have multiple defects while the unit can still be 1. 2. Average Cost of Rework [Both Internal and External] – In my scenario, I have tried to assess the individual cost that goes into the rework for both Internal and External scenarios. My feedback on calculator – While it clearly states average rework cost per defect, it will be helpful to include the type of costs that can go it as it will help the user to appropriately think, sum and input into the calculator. 3. External Defects Identified – In my scenario, it was across 70 units we found the issue. My feedback on calculator - If it is made more explicit in the calculator to input total number of external defects across all units or have two separate fields like Defects/Unit and Total number of units affected which will land up with right number of defects for which the cost has to be estimated. For Services industry, we can have multiple defects while the unit can still be 1. 4. Penalties / Liabilities / Recall Costs – In my scenario, I have included a Penalty in the form of loss of revenue. My feedback on calculator – It’ll be helpful if more specific inputs are given in relation to this cost. While Penalties and Recall costs are direct, Liabilities if expanded further will be really helpful. If the magnitude of the excursion is large, sometimes, you may have to engage a Third Party Support all together to handle the volumes of your issue. This it will be a huge Opex cost. So being explicit will be helpful. In summary, this calculator is a good start to proactively look at the possible cost of non-conformance and plan your overall cost of quality as well as ensure to keep your cost of poor quality at minimum through appropriate planning. While meeting customer expectation is at focal, managing profitability is at equal importance to stay competitive in the market.

-

Sensitivity Analysis

Prashanth Datta replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!As we have already seen and understood, Root Cause Analysis focuses on identification of all those independent variable X's (further narrowed to critical X's) deemed as input, which has an impact on the dependent output variable Y. In other words, identification of all causes which influences the effect. In a Sensitivity Analysis, also referred to as "What-if" or "Simulation Analysis", we determine how the output-dependent variable (Y or effect) varies when each of the independent variable (X or causes) are varied under a predefined set of assumptions. Simply stating, how different values of each independent X, will have an impact on Y. Once we have the critical X's identified using the relevant Tools & Techniques of Root Cause Analysis, applying Sensitivity Analysis on these Critical X's will be extremely helpful to see how the focus metric Y, behaves by changing the values of each X under a set of predefined assumptions. This paves way to develop solutions in a more scientific method within the identified X's and a combination of this approach across all input causes will help us with a more comprehensive solution that can be implemented during the Improve phase. Let us now see an example. I am running a small coffee shop and below are my financial workings as on date Cost/Cup of Coffee - INR 12 Number of Cups of Coffee Sold per month - 4000 Operating Expense (incl. Rent, Salary, Milk, Sugar, Coffee power etc.,) = INR 40,000 Based on above workings my Monthly Income is INR 48,000 [12 per cup x 4000 cups per month]. My Profit after deducting the Operating Expense is INR 8,000 [Opex INR. 48,000 - Monthly Income 40,000]. I will now but a problem statement with a business case that I need to improve my Profits from this coffee shop. At an high level, if I want to put this in a mathematical format for my business case in Cause and Effect method Y=F(X), using Root Cause Analysis techniques, at an high level, we can say profits are influenced by price per cup, number of cups sold and the operating expenses. Profits = f(Price per Cup, Number of Cups Sold, Operating Expense, ...) I need to work on each of these levers either increase or decrease to improve my profits. While at an high level, going by thumb rule, we always say to reduce the Opex and it in itself can lead to another root cause analysis on what we can vs what we cannot. But looking at the other two levers of cost per cup and number of cups to be sold will form an interesting strategy to plan around and the Sensitivity Analysis will help me take a decision. With Sensitivity Analysis, I can play around by increasing or decreasing the price or increasing or decreasing the number of cups sold and its impact on my profit. As per the rules of Sensitivity Analysis, we make some assumptions and in this case we make an assumption that my Operating Expense will remain fairly at same price or allowed to go by not more than 10%. With this ground rule you can see the below table which helps you draw conclusion. I can now make some quick comparisons now. If I retain my current price of INR 12, but build strategies to increase sales to 5000 cups a month (increase in 1000 cups) I will make INR 20,000 profit vs my current profit of INR 8,000. Even an increase in Opex by 10% should still yield me INR 16,000 which is double my current scenario. Like wise if I want to retain the sale at 4000 cups and increase the price to INR 15 , I will have a similar story. In summary, with Sensitivity Analysis applied on Root Cause Analysis, it shows, how within each Inputs, you will have options to explore to arrive at a desired stable Output with Voice of Customer at crux.

-

Measurement System Analysis (MSA)

Prashanth Datta replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!Attribute Agreement Analysis typically involves a binary decision making around one or more attributes of the item being inspected i.e. accept or reject, good or bad, pass or fail etc., So, in this context we are referring to discrete data type. The key call out here is the human factor (known as appraisers) who typically make these assessments. With this condition in place, it is all important that the appraisers are consistent with themselves (repeatability), with one another appraisers (reproducibility) and with known standards (team accuracy). A poor repeatability implies the assessor himself is not clear on the measurement system analysis and hence needs a thorough training, understanding and alignment else the entire objective of the assessment will not make sense. On the other hand, in Gage R&R, we deal with continuous data type and there is a Gage or measuring instrument which plays a significant role to measure the data. Each appraiser will measure each item multiple times (repeatability), and their average measurement of each item will be compared to the average for the other appraisers or measurement tools (reproducibility). It is primarily the measurement tool that plays primary significance in Gage RandR and what is important is to ensure the equipment calibration is taken care off so as the repeatability factor of appraiser measuring the item is more Gage dependent rather than the appraiser himself.

-

Turing test

Prashanth Datta replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!YMy two cents on this topic... Artificial intelligence takes it's significance at those areas where repetitive tasks or scope of self help is at abundance scope. While it helps business from cost optimization stand point, for customers it is more of quicker time to resolve. The need to check the intelligence of machines are very high where we have sensitive data. For example, banking system AI has to be smart enough to interpret complete query and present answers as the output in such cases could be personal finance data. If the machine picks up only keywords and presents output the risk of presenting one customer financial to other will be high and can be disastrous. We have heard last issues on machines where private conversations were sent on emails to the distribution list as it picked key words and misinterpreted the instructions. Checks and balances should ensure risk free application.

-

Lean Tools that allow for improvement in themselves

Prashanth Datta replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!As suggested, Lean focuses on continuous improvement in processes by eliminating the non value adds. Lean tools which enables this activity manifests it's capability in such a way that some of the tools have gone beyond industry restrictions and hence play a critical role even in Six Sigma approach, especially during Analyze and Improve Phase. I will list 5 such tools which I use and believe in its effectiveness irrespective of the process improvement projects domain. 1. Value Stream Mapping 2. Kaizen 3. Cause- Effect Diagram, Fish Bone Analysis 4. 5 Why's 5. Poka-Yoke (Mistake Proofing) Value Stream Mapping --- Irrespective of industries, in order to improve any process, you need to understand how the process works first. While flow charts are good starting point, a step ahead is breaking down the process into minute details using VSM which help break down steps with in each process, time taken for each activity, it's dependency etc., This would nearly help you identify your areas of opportunities which you can zoom in and work. This VSM approach holds no bar or restrictions and helps all industries. I get to hear all Big Data discussion meeting rooms are filled with flow charts Kaizen - Most often in our day to day operations experience, we see issues but also possible solutions. Our work force, basis their work expertise will suggest multiple solutions and cross functional owners who can help to fix issues. With too much information and solutions, you need to put a method to madness and thy name is KAIZEN. In this approach you bring your problem statement, your experts across teams, stipulate time, get a structure to discuss, evaluate and finalize solutions, have a action tracker on agreed actions, owner and timeline and implement to see your process improve. Cause- Effect Diagram, Fish Bone Analysis --- This tool systematically helps you list all possible causes on your left for the possible problem in focus. It not only captures all problems but also helps group under different categories viz Material, Man, Machine, Mother Earth, Method, Measure etc., These logical groupings in way will help to focus on areas where actions are possible. This then leads to improve phase solutioning. 5 Why's -- This is the most simpler and logical tool, forget Lean Six Sigma, is also applicable to our day to day life. Even Six Sigma despisers would be using it without their knowledge Every question, you ask Why and it has been established that mostly by 5th Why you will have a fair solution in hand. Poka-Yoke -- A true value add which enables mistake proofing thinking while you implement your solution to make it most robust. This has its relevance in today's world where we have a lot of sensitivity involved i.e. finance, personal data etc where relevant stakeholders take adequate precautions for errors to happen in first place. Few basic examples are your banking window timeout, currency cards locked for incorrect pins after stipulated attempts etc., Even in aspects of Physical Security and other Risk related activities, we see Poke Yoke playing significant roles. While there are other significant tools, I have listed those which I used and have found extremely useful.

-

Evolution of Six Sigma

Prashanth Datta replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!What keeps your business engine running amidst tough competition in today's environment? I strongly believe in 4 things that acts as a differentiator a. Customer Centric Approach - Have customer in focus in whatever you do. Historical studies have always shown it costs more to acquire new customer and effective management of existing customer assures you of continuous business which in turn benefits to invest in better ways to gain new customers. b. Productivity and Efficiency - Key mantra is to do more with less within committed timelines with the focus on better OpEx management. c. Drive a balanced scorecard - A successful organisation always drive a balanced scorecard, when it comes to cost, quality, productivity and customer satisfaction. It is not one at the cost of another. d. Motivated work force - who make a difference in driving the results for your organisation Organisations today adopt multiple buzz words (mapping to the mood in the market) to drive their organization business charter which some times can befuddle the very purpose of its existence and pull your objectives in different directions rather than supporting it. You need to have a "Pivotal Program" in your organisation which can give clarity to your business charter and help position all other programs around it. I see Lean Six Sigma as this pivotal program. Every organization today broadly categorizes its work into two parts - Run the Business and Change the Business. While Run the Business focuses on delivering day to day operational commitment, Change the Business acts as an enabler to support its customer and work force. For companies today to stay competitive, they need to be profitable. They need to ensure their Run the Business operates within the KPIs as demanded by the customers and the Strategic Initiatives of Change the Business should focus on addressing the root cause that is impacting my KPIs. A simple example is, giving better experience to your customer once he calls your customer care team (run the business focus) vs. enabling simple self help to resolve his query in few steps (change the business initiative). While it is rather easy said than done, an organization may have multiple programs, tools or professionals but if it is not structured, packaged and adequately blended, we will conveniently loose out on the core objective and digress from the goal which can lead to not only financial losses but also losing their valuable customers. Even a decision not to invest in any changes, still needs to go through a methodical approach to establish the fact on why not to make an investment. Lean Six Sigma - the pivotal program, not only fulfills all objectives as a metric, method, management and philosophy but also the 4 points I mentioned above. The outcome of a methodological Six Sigma effort can help reduce cost, improve profits, drive focus keeping customer requirements, create a knowledgeable workforce and above all use a data-driven scientific approach to arrive and sustain your results. I strongly see that other programs can be positioned around Lean Six Sigma as it opens up avenues like using a Project Management approach to implement a solution, Robotics to simplify tasks, Data science for advanced analytics etc., What is key to note is that these programs need to have a trigger and Lean Six Sigma is the trigger. Finally on my concluding note, for folks who are in a denial mode to accept the benefits of Lean Six Sigma, forget organizations, I personally see the existence of Lean Six Sigma concepts in our daily life. Be it to better time management, health management or wealth management, the concepts of Lean Sigma has its contribution.

-

Applicability of Hypothesis Testing in DMAIC

Prashanth Datta replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!In order to answer above question, let me break down the question into two parts... 1. What is Hypothesis testing? 2. What is the most desired "output" from each phase of a DMAIC project? While we understand that there are few tools and techniques that can be applied across Phases, what we need to caution exercise is not to force fit tools as it can result in experimentation and possibly delay the project timelines. Now let's look at what Hypothesis testing is all about. Hypothesis testing is a part Inferential Statistics which is used to predict the behavior of the population by analyzing the sample data (two or more sets) to primarily determine if there is any "true" difference between the sample OR there are no differences in parameters between compared samples. The inferences are made through Null and Alternate Hypothesis. In a Null Hypothesis, we state that there are no differences or impact or the status remains status quo. However in Alternate Hypothesis we say that there is an impact or difference. Further, the type of Hypothesis testing is determined by the type of input(x) and output(y) data type ie if it is discrete or continuous data. Let's now delve on the second part of the question which is desired output from each phase of a DMAIC project a. Define Phase ----: Desired output is Approved Project Charter b. Measure Phase ---: Desired output is Baseline - Current As Is performance of identified focus CTQ c. Analyse Phase ---: Desired output is Validated root cause(s) or Critical Xs d. Improve Phase ---: Desired output is Selected Solution leading to improved results e. Control Phase ---: Desired output is Control Plan. Given significant usage of data analytics happen in Analyze and Improve phase it will be more appropriate to use the Hypothesis testing during these two phases to validate critical x(s) and to confirm if implemented solution(s) have really made the desired change respectively. Given the desired output for Define, Measure and Control phase focuses on setting up Project Charter, drawing the Baseline performance of to be improved metric(Y) and have the Control Plan in place to ensure the desired results are performing within limits, it could possibly be a force fit if Hypothesis testing is implemented in these Phases.

-

Validation with Small Samples

Prashanth Datta replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!Six Sigma gains it's edge over other Quality Management System as it uses data driven approach for problem solving. Statistics forms an integral part of Six Sigma methodology as many of it's tools refers to statistics for logical conclusions. We essentially have two branches in Statistics - Descriptive and Inferential. Descriptive Statistics helps work on collecting, analyzing and presenting information as mean, standard deviation, variation, percentage, proportion etc. While Descriptive Statistics helps with description of data, it will not manifest itself with any inferences. Inferences about data is very important for decision making and it is Inferential Statistics which helps us with the same. To answer above question on the approach for decision making using few samples, it is Inferential Statistics that helps us analyze sample data and predict the behavior of population. Further, Inferential statistics helps us establish the relationship between independent variables (X, Cause) and the outcome (Y, Effect) and also help identify the critical X which needs to be focused to improve the Y. Inferential Statistics is strongly associated with Hypothesis testing. Hypothesis testing is performed on Sample and whenever we do a Hypothesis testing, we ask below questions on whatever we saw in the sample Is It True? Is it Common Cause? Is it Pure Chance? Let us see how to perform a Hypothesis testing which is key for Inferential Statistics. Step 1. Define the Business Problem in a data driven format i.e. Y=f(X) Step 2. Select and appropriate or apt Hypothesis Test that we need to perform on the problem. We will see this in detail in next section. What drives the selection of test is basis the type of data defining both X and Y i.e. if the data type is discrete or continuous. Step 3. Make the Statistical Hypothesis Statement ; H0 = Null Hypothesis = No Change, No Impact or Difference; HA=Alternate Hypothesis = New argument holds good basis the business case. Step 4. Run the test on Sample data using tools like Minitab Step 5. Calculate the "P" value - which will be an output from the tool Step 6. Compare "P" value with "alpha" [Alpha is called as Type I error and acceptable level is generally kept at 5% or 0.05] Step 7. Do Statistical conclusion i.e. if P is greater than alpha, your Null Hypothesis holds good else your alternate hypothesis will hold good. Step 8. Do a Business Inference i.e. if Null Hypothesis holds good than the input sample is treated as non-critical x. Alternatively, if your alternate hypothesis holds good, we should treat the input as critical x. W.r.t Step 2, on selecting the apt test, below inputs should serve as guiding pointers Output Y is Discrete and Input X is Discrete in 2 categories, we need to use 2 proportion test Output Y is Discrete and Input X is Discrete in multiple categories, we need to use Chi-square test Output Y is Continuous and Input X is Discrete in 2 categories, we need to use 2-sample t-test Output Y is Continuous and Input X is Discrete in more than 2 categories, we need to use ANOVA Output Y is Continuous and Input X is Continuous we need to use Regression Analysis. In summary, Inferential Statistics is used draw conclusions on the larger population by taking a sample from the same and also try to establish relationship between the input and output.

-

Lean Sixsigma in Healthcare

Dear Vishnu, While I am not a health care expert, for my academic interests and basis my understanding w.r.t Emergency Services, I am putting forth few pointers for your perusal. You may treat these as "Voice of Customer" as some of the inputs presented are basis my observations and experiences as heard for a desired better "To-Be" state. While we see huge expenditure associated with Emergency Services, be it Capital Expenditure to set up the services or Operating Expenditure to run the services, which in itself provides opportunities on cost saving projects, we shall treat that as secondary, as the primary objective of any Emergency Service is to Save Life by providing appropriate health saver services on time. To begin with, we may want to clearly define the scope of what Emergency Services means i.e. Is it Simply moving the affected individual to the next available definitive care OR Revive/Stabilize the affected individual using required level of life support (basic, intermediate or advanced) while on move. While this scope can be majorly driven by the local law of land or legal framework, you may use the "SIPOC Diagram" for either of the scenarios to clearly outline the required parameters which in turn can help you define your "Critical to Quality [CTQ]" metrics. Further, on a high level, for any Emergency Service to Operate in it's basic level, given my understanding, we need the following infrastructure Ambulance Services A Call Center or a Command Center set up to facilitate ambulance allocation, tracking and control Definitive Health Care Centers or Hospitals with required specialization and infrastructure Trained staff to handle Emergency across all layers i.e. right from Ambulance Drivers to support staff in the Ambulance, Doctors at Hospitals etc., Awareness among Public on how this Service works and how to use the same i.e. where to call and how to avail the services etc., An effective governance model to ensure all the above pointers are functioning properly. What stands key to above pointers are a very strong legal framework to drive accountability and a strong Information Management System. What is the role of Information Management and Knowledge Base System in Emergency Services? Information Management and Knowledge Base system should typically help define the standards, basis expert inputs from industry SMEs and medical experts along with using bench-marking techniques to set some goals and guidelines. For example Set goals for Ambulance commutation time to next available hospital basis the nature of incident e.g. An heart attack should not exceed 30 minutes, an accident with minor injuries should not exceed 60 minutes etc., Help list ambulance available from all sources (public, private, charitable trust, companies etc.,) by locality - list all health care centers by localities and by area of specialization ... PS - Numbers stated above are hypothetical to just serve as explanation Parameters like above should help the Command Center to map ambulance from which location needs to be sent to the incident site to support the affected individual and direct to which hospital basis the nature of incident for survival or support of the affected individual at the shortest possible time available. This exercise in itself can be a good food for thought for concepts like "Design of Experiments". Now let us look at how we can use Lean Six Sigma on some of the infrastructure use to support the Emergency Services as listed above Ambulance Services - Possible CTQs to focus - On Time Arrival of the ambulance to the incident spot, Transportation time of the Ambulance to the relevant Definitive Health Care center etc., Command Center - Possible CTQs to focus - Average Speed of Answer, Average Call Handle Time, Average Queue Wait Time, % Accuracy of dispatching right ambulance services etc., Definitive Health Care or Hospitals - Possible CTQs to focus - Average Response time for emergency treatment, % redirects to other hospitals etc., While I have listed few CTQs by areas basis my limited understanding, each of these would clearly require a DMAIC approach or in some cases a DMADV. Trust this serves as some pointers and thank you for this question.

-

Process Controls

Prashanth Datta replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!Control Phase is that critical stage of Business Process Improvement where the selected solution(s) which are implemented to achieve the desired output [Y] are monitored for it's effectiveness. In other words, the performance of the process which needs to be maintained at the desired level as per the Voice of Business or Voice of Customer has to be sustained through the actions implemented. An effective Control Plan a. Acts as Risk Assessment mechanism on the new improved process. b. Ensures process stays within control and helps identify any out of control variations due to any special causes and calls for appropriate action as required c. Continues to be a living document to monitor and control the new process d. Doesn't replace the Standard Operation Procedure derived during the improved phase but adds to its effectiveness by monitoring it. e. Resides with the process owner for continuous review and documentation. Now knowing the importance of Control phase, let us look at some of the types of Process Controls and their effectiveness. I have kind of arranged these controls in order of their effectiveness basis my understanding and judgement 1. Mistake Proofing - Poke Yoke 2. Risk Mitigation Methodologies like FMEA 3. Process documentation / Inspection and Audits 4. Statistical Process Controls 5. Response and Reaction Plans 6. Process Ownership 1.Mistake Proofing - Poke Yoke - Mistake Proofing is a technique wherein the input's or causes are so well controlled by making it impossible to for a mistake to happen at the process level itself. A simplest example that happens in our day to day life is using a spell check on our email. Unless all spellings are checked and corrected, the controls implemented in the form of a dialog box will not allow the email to be sent. 2. Risk Mitigation Methods like FMEA (Failure Mode and Effects Analysis), wherein every solution implemented to drive the Process Documentation / Inspection and Audits - I given an equal weightage to both these controls as an inspection or audit is done against a set standard and that standard has to be well documented in your process documentation. A well documented Standard Operating Procedure provides detailed instructions at every stage and what needs to be done at each stage which implies the required controls for possible deviations would also have been included in the document. While Inspection and Audits are an additional layer in the system which can add some additional lead time, it is still a desired quality control which helps to ensure the end results are controlled internally rather than treating as an escalation from customers. 3. Statistical Process Controls, like control charts helps as visual aids and paves way for analytics. It helps determine if a process is stable and within statistical controls. It serves as good trigger points to catch and deviation, however further deep dive has to be conducted to understand the causes for save. 4. Response and Reaction Plans - As suggested, the plans are reactive which is a response to an issue i.e. it comes into effect once an issue is identified. While it is not a top preferred option, but still is a control plan as it sets directions to have a response plan in the event of an issue trigger. 5. Process Ownership - Typically a control which is people dependent i.e. the process owner will define the rules to control the process outcome.

-

Annapareddy Siva started following Prashanth Datta

-

Design Risk Analysis

Prashanth Datta replied to Vishwadeep Khatri's topic in We ask and you answer! The best answer wins!Before delving into the tools used for Design Risk Analysis, let us try and break down this question further to understand, What “Design Risk Analysis” means, Understanding what “Risk” is, and Common tools used for Design Risk Analysis. What is “Design Risk Analysis”? As we are aware, we have two methodologies in Six Sigma 1. DMAIC – Define, Measure, Analyze, Improve and Control --- Typically used for improving existing processes or products 2. DMADV – Define, Measure, Analyze, Design and Validate/Verify – Typically used for developing or redesigning new products or processes While performing a DMAIC methodology on an existing product or service, post Analyze phase, it is quite possible that the potential solution could call for a redesign of existing product or process in order to meet the Voice of Customer or Voice of Business. In such a scenario, it is extremely important for the project team to meticulously work on the design process, as it is the expected solution and hence it needs to be made full-proof. One of the key focus areas in making the design full-proof is to anticipate the possible failures, threats or flaws of the proposed new design. In summary, we need to determine the potential risks associated with the revised design and build mitigation plans in advance, so as the product or process under the new design fulfills the VoC or VoB. Design Risk Analysis helps achieve this objective. What is Risk? Any variable that has the potential to negatively impact your (re)design of a product or service which in turn can affect your project deliverables or output. Further, these risks, if unmitigated can have subsequent impact on various parameters like company brand, revenue, legal or statutory compliance etc., depending on the final deliverable or desired response / output (Y) of the project. Common Tools used to identify Design Risks. We can categorize these tools under two buckets a. Qualitative b. Quantitative Qualitative tools for Design Risk Analysis Documentation Review – In this approach, we try to identity risk by reviewing project related documents such as risk lessons learnt from similar projects, whitepapers or articles pertaining to the scope of project etc., Information Gathering Techniques - In this approach, we use tools like Brainstorming, Delphi technique, Interviewing etc., Essentially, with the planned (re)design scope, we gather inputs on potential risks from individuals, project team, stakeholders, subject matter experts either through 1x1 discussions, group discussions or anonymous feedbacks. Simple root cause Analysis technique like “5 Whys” can also help identify risks as we try to narrow down the root causes leading to new design. Diagramming Techniques – Using tools like Cause and Effect diagram or Process flow charts help us break down the process in detail to identify potential risks. SWOT Analysis – Doing a Strength, Weakness, Opportunities and Threat analysis of the (re)design, will help come up with associated risks of the design. Expert Judgment – Leverage expertise of Subject Matter Experts within the project team or across stakeholders to identify the risks. FMEA – Anticipating failure at each stage, its effect which in turn helps us to come up with potential mitigation plans. Quantitative tools for Design Risk Analysis Modelling Techniques – Develop models to capture Risks using critical inputs like probability of occurrence, severity levels, controls, vulnerabilities and come up with Risk Priority Numbers, Probability and impact matrix, Expected monetary value analysis etc.,

View in the app

A better way to browse. Learn more.

.jpg.cf7c755139fc107b2145b49148196369.jpg)